The C++ memory model is a formal specification, introduced in C++11, that defines how threads interact through shared memory. It answers three fundamental questions: What is a data race and why does it cause undefined behavior? What does it mean for one operation to “happen before” another across threads? And how do the memory ordering options on std::atomic control the visibility of memory writes between threads? The memory model is what makes multithreaded C++ programs portable across CPUs with different hardware memory models (x86, ARM, RISC-V).

Introduction

Modern CPUs and compilers perform optimizations that seem perfectly safe when viewed by a single thread but can produce surprising behavior when multiple threads interact. A compiler may reorder two independent stores because neither depends on the other’s result. A CPU may execute instructions out of order, completing a later write before an earlier one. A core’s store buffer may hold a write briefly before it reaches the cache, so other cores do not see it immediately.

For single-threaded programs, these optimizations are invisible: the “as-if” rule guarantees that the observable behavior matches what the sequential source code describes. But for multithreaded programs, these optimizations can produce outcomes that look impossible from the source code perspective — data written in one thread is not visible to another thread even though the write “happened first” in source code order.

Before C++11, the C++ language specification had no concept of threads. The standard described a single-threaded abstract machine. Multithreaded programs relied on platform-specific extensions (POSIX pthreads, Windows threads, compiler intrinsics) whose interaction with the C++ abstract machine was not defined by the standard. Technically, the standard assigned no meaning to any multithreaded C++ program, and compilers could, and sometimes did, optimize across threading boundaries in catastrophic ways.

C++11 fixed this by introducing a formal memory model: a set of rules that defines exactly what is and is not permitted in concurrent programs, what constitutes a data race (and why it produces undefined behavior), and how std::atomic operations establish ordering guarantees that are portable across all conforming hardware and compilers.

This article builds a complete understanding of the C++ memory model. You will understand the abstract machine, sequenced-before and happens-before relations, what a data race formally is, why it produces undefined behavior, and how the memory ordering options on atomic operations control the strength of the guarantees you get. This is the conceptual foundation that makes everything from the previous three articles click into place.

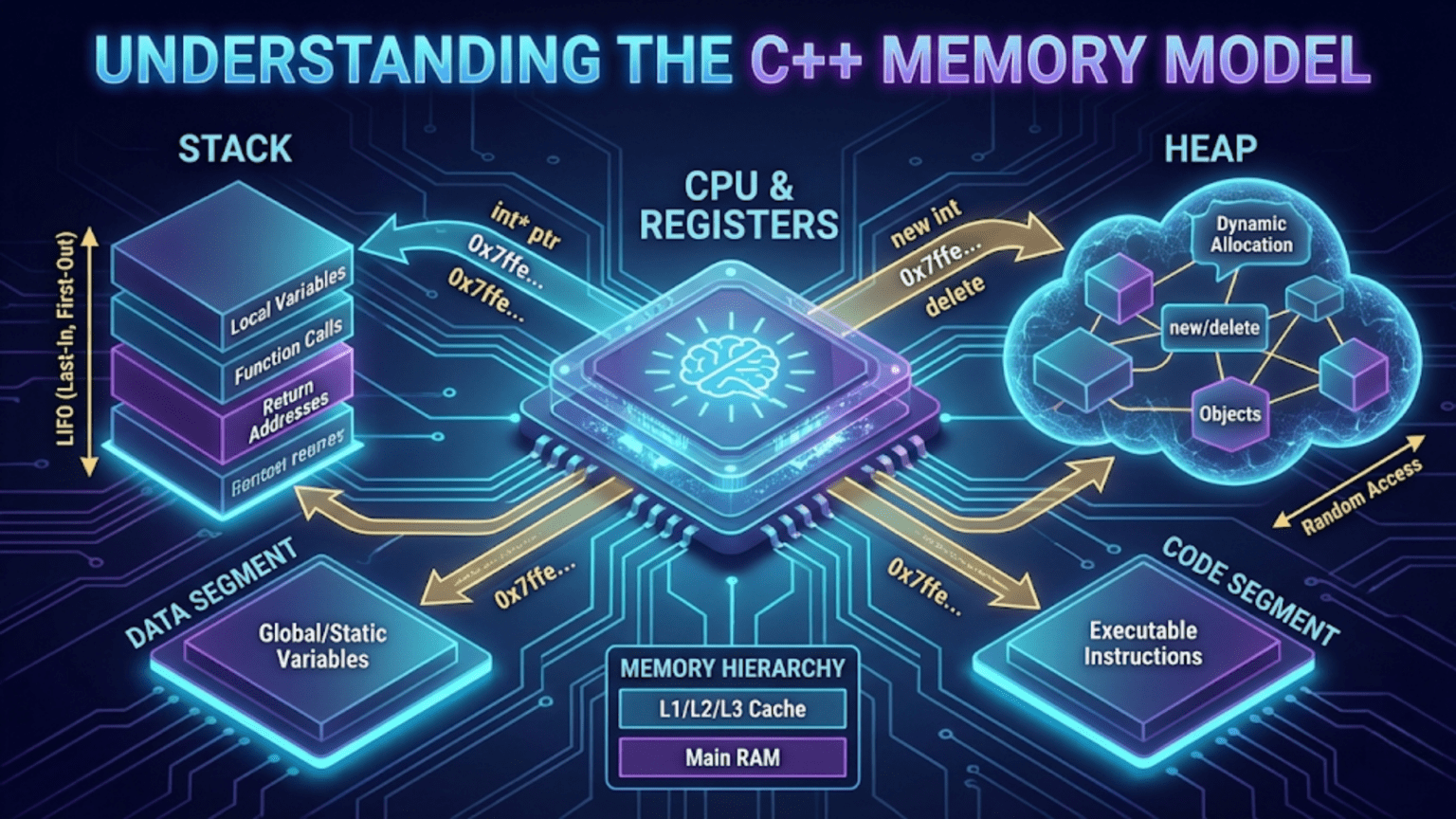

The Abstract Machine: What C++ Actually Specifies

The C++ standard does not specify “run on x86” or “run on ARM.” Instead, it specifies an abstract machine — a hypothetical computer whose behavior the program must match. The compiler is free to generate any machine code it wants, as long as the observable behavior of the resulting program matches what the abstract machine would produce.

For a single-threaded program, the “observable behavior” is the sequence of I/O operations (reads and writes to volatile objects, calls to I/O functions). This is the as-if rule: the compiler may reorder, eliminate, and transform operations as long as the observable output is the same.

For a multithreaded program, the memory model extends the abstract machine with rules about how threads interact. The key insight is that without these rules, multithreading is simply undefined:

// Without the C++11 memory model, this was technically undefined behavior

// even on platforms that supported threads:

int x = 0;

bool ready = false;

// Thread 1:

x = 42; // No defined relationship with Thread 2

ready = true; // No defined relationship with Thread 2

// Thread 2:

if (ready) {

cout << x; // What is x? The standard said nothing before C++11

}

C++11 gave this code meaning, and specifically said it is undefined behavior as written (a data race on both x and ready). It also gave the tools to fix it, which we will see throughout this article.

Sequenced-Before: Ordering Within a Thread

The simplest ordering relationship is sequenced-before — it describes the ordering of operations within a single thread. In a single thread, statements execute in program order (with compiler-permitted transformations that don’t change observable behavior).

#include <iostream>

using namespace std;

int main() {

int a = 1; // Statement S1

int b = 2; // Statement S2 — sequenced after S1

int c = a + b; // Statement S3 — sequenced after S2

cout << c; // Statement S4 — sequenced after S3

return 0;

}

The sequenced-before relation is:

- S1 is sequenced before S2

- S2 is sequenced before S3

- S3 is sequenced before S4

By transitivity: S1 is sequenced before S4.

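This is the straightforward, intuitive order. Within a single thread, sequenced-before matches program order for full statements. The exceptions are inside expressions with multiple side effects, where C++11 replaced the older notion of sequence points with explicit sequencing rules; some subexpressions, such as function arguments, are unsequenced or indeterminately sequenced relative to each other.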

What a compiler is allowed to do:

The compiler can reorder or eliminate operations as long as sequenced-before ordering is maintained for observable effects:

int x = 5;

int y = 10; // Compiler may swap these two stores if neither is observable

int z = x + y;

cout << z; // Only this matters: the final output must be 15

The compiler may generate code that computes y = 10 before x = 5 because neither has observable side effects and the final result is identical. This reordering is invisible in a single-threaded program, but if x and y were shared variables, another thread observing them might see the stores in the reordered sequence.

Data Races: Formal Definition and Undefined Behavior

A data race in C++11 is precisely defined: it occurs when two threads access the same memory location, at least one access is a write, at least one access is not atomic, and the two accesses are not ordered by a happens-before relationship.

When a data race occurs, the behavior of the entire program is undefined — not just the value of the racy variable. The compiler and hardware are permitted to assume data races don’t happen, and this assumption enables optimizations that can produce completely unexpected results.

#include <iostream>

#include <thread>

using namespace std;

int shared = 0; // Not atomic — plain int

void writer() { shared = 42; }

void reader() { cout << shared << endl; }

int main() {

thread t1(writer);

thread t2(reader);

t1.join();

t2.join();

// Data race! writer and reader access 'shared' with no ordering between them

// Possible outputs: 0, 42, garbage, program crash, demons flying out of nose

// The standard says: undefined behavior

return 0;

}

Why undefined behavior instead of “just a possibly-wrong value”?

Compilers exploit the absence of data races for optimization. Consider:

// A compiler sees this code in a single-threaded context:

bool flag = false;

// ... later ...

while (!flag) { /* spin */ }

// Work...

The compiler may hoist the load of flag out of the loop and keep it in a register, generating:

// Compiled as:

if (!flag) { while (true) {} }

// Work...

In a data-race-free program, if flag is false when we first check it and nothing in this thread changes it, it must still be false on every subsequent check, so there is no reason to re-read it from memory. This is a valid optimization for single-threaded code. But if another thread sets flag = true, this thread never sees it and loops forever.

This is not a compiler bug. The data race made the behavior undefined, and the compiler legitimately assumed it doesn’t happen.
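As a minimal sketch of the repair, making the flag a std::atomic<bool> turns every loop iteration into a real atomic load, so the hoisting transformation shown above is no longer permitted. The names stop_flag, payload, and wait_for_flag_demo are hypothetical and exist only for this illustration.

```cpp
#include <atomic>
#include <thread>

// Hypothetical names for illustration; not taken from the article's examples.
std::atomic<bool> stop_flag{false};
int payload = 0;  // plain int, published through stop_flag

// Spins until another thread sets stop_flag, then returns the payload.
int wait_for_flag_demo() {
    stop_flag.store(false);
    payload = 0;

    std::thread setter([] {
        payload = 7;                                       // ordinary write
        stop_flag.store(true, std::memory_order_release);  // publish it
    });

    // Each iteration performs a genuine atomic load; the compiler cannot
    // rewrite the loop as "if (!flag) while (true) {}".
    while (!stop_flag.load(std::memory_order_acquire)) {
        std::this_thread::yield();
    }
    int seen = payload;  // ordered after the setter's write

    setter.join();
    return seen;
}
```

Calling wait_for_flag_demo() returns 7 once the setter thread has published its write; the loop terminates because the load is repeated on every iteration.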

The conditions for a data race:

- Two threads access the same memory location

- At least one access is a write (concurrent reads alone are never a race)

- At least one access is non-atomic (concurrent std::atomic operations never form a data race with each other)

- The accesses are not happens-before ordered relative to each other
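Removing any one of these conditions removes the race. The sketch below, which assumes a hypothetical helper named count_with_mutex, keeps the shared variable a plain int but uses a mutex so that every pair of accesses is ordered by happens-before.

```cpp
#include <mutex>
#include <thread>

// Hypothetical helper: two threads increment one plain int.
// The mutex orders every access, so no two accesses race.
int count_with_mutex(int per_thread) {
    int counter = 0;  // non-atomic shared variable
    std::mutex m;

    auto work = [&] {
        for (int i = 0; i < per_thread; ++i) {
            // Each unlock synchronizes-with the next lock of m.
            std::lock_guard<std::mutex> guard(m);
            ++counter;
        }
    };

    std::thread t1(work), t2(work);
    t1.join();
    t2.join();
    return counter;  // join() orders this read after both threads
}
```

With the lock in place, count_with_mutex(1000) returns exactly 2000. Without it, the same code would contain a data race and the result would be undefined.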

Happens-Before: The Core Ordering Relation

The happens-before relation is the central concept of the C++ memory model. It defines when the effects of one operation are guaranteed to be visible to another.

If operation A happens-before operation B, then:

- All memory writes made by the thread executing A (up to and including A itself) are visible to the thread executing B when it executes B

- The compiler and hardware may not reorder A and B in any way that a data-race-free program could observe

Happens-before is built from three simpler relations:

1. Sequenced-Before (within a thread)

Within a single thread, sequenced-before implies happens-before: if A is sequenced before B in the same thread, then A happens-before B.

2. Synchronizes-With (between threads via atomics)

An atomic release store in Thread A synchronizes-with an atomic acquire load in Thread B that reads the stored value. This synchronizes-with relationship creates a happens-before edge between threads.

#include <atomic>

#include <thread>

#include <iostream>

using namespace std;

int data = 0; // Non-atomic

atomic<bool> ready{false}; // Atomic — the synchronization point

void threadA() {

data = 42; // (1) Write data

ready.store(true, memory_order_release); // (2) Release store: publishes everything

// done before (2) to any acquire load

}

void threadB() {

while (!ready.load(memory_order_acquire)) {} // (3) Acquire load: syncs with (2)

cout << data << endl; // (4) Read data — guaranteed to see 42

}

// Happens-before chain:

// (1) sequenced-before (2) [within thread A]

// (2) synchronizes-with (3) [release-acquire pair across threads]

// (3) sequenced-before (4) [within thread B]

// Therefore: (1) happens-before (4) — data=42 is visible at (4)

int main() {

thread a(threadA), b(threadB);

a.join(); b.join();

return 0;

}

Output: 42 (always, on any conforming implementation)

Step-by-step explanation:

- data = 42 in Thread A is sequenced before ready.store(..., release). The release store creates a “publish point”: all writes before it are part of the published package.

- The while (!ready.load(memory_order_acquire)) loop in Thread B spins until it reads true from ready. The moment it reads the value written by Thread A’s release store, a synchronizes-with relationship is established.

- By transitivity: (1) sequenced-before (2) synchronizes-with (3) sequenced-before (4). This chain creates a happens-before from (1) to (4). Therefore data = 42 is guaranteed visible when Thread B reads data at (4).

- Without the release/acquire pair (if both used relaxed ordering), no synchronizes-with relationship exists, no happens-before chain is established, and reading data at (4) would be a data race.

3. Inter-Thread Happens-Before

Happens-before is transitive: if A happens-before B and B happens-before C, then A happens-before C. This transitivity is what allows complex chains of synchronization across multiple threads.

Thread 1: write X → release A

Thread 2: acquire A → release B

Thread 3: acquire B → read X

Even though Thread 3 doesn’t synchronize directly with Thread 1, the chain Thread1→Thread2→Thread3 establishes that Thread 1’s write to X happens-before Thread 3’s read of X.
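The three-thread chain can be sketched in code as follows. The globals X, A, and B mirror the names in the diagram, and run_chain is a hypothetical driver added for this illustration.

```cpp
#include <atomic>
#include <thread>

// Names mirror the diagram: X is plain data, A and B are atomic flags.
int X = 0;
std::atomic<bool> A{false}, B{false};

// Hypothetical driver: returns the value of X that Thread 3 observed.
int run_chain() {
    X = 0;
    A = false;
    B = false;
    int seen = -1;

    std::thread t1([] {
        X = 42;                                    // write X
        A.store(true, std::memory_order_release);  // release A
    });
    std::thread t2([] {
        while (!A.load(std::memory_order_acquire)) std::this_thread::yield();  // acquire A
        B.store(true, std::memory_order_release);  // release B
    });
    std::thread t3([&seen] {
        while (!B.load(std::memory_order_acquire)) std::this_thread::yield();  // acquire B
        seen = X;  // read X: ordered after Thread 1's write via Thread 2
    });

    t1.join();
    t2.join();
    t3.join();
    return seen;
}
```

Thread 3 never touches A, yet its read of X is race-free because happens-before composes across the two release/acquire pairs.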

Visualizing the Memory Model: A Concrete Scenario

Let’s walk through a concrete scenario to make the abstract concepts tangible. This is the “message passing” pattern — the most common inter-thread communication idiom.

#include <atomic>

#include <thread>

#include <cassert>

#include <iostream>

#include <string>

using namespace std;

// Scenario: Thread A prepares a message and signals Thread B to read it

struct Message {

int id;

string content;

double value;

};

Message msg; // Non-atomic message data

atomic<bool> msgReady{false}; // Atomic flag: the synchronization point

void sender() {

// Step 1: Prepare the message (non-atomic writes)

msg.id = 1;

msg.content = "Hello from Thread A";

msg.value = 3.14159;

// Step 2: Publish with a release store

// This guarantees that all writes above are visible to any thread

// that performs an acquire load and reads 'true'

msgReady.store(true, memory_order_release);

}

void receiver() {

// Step 3: Wait for the flag with an acquire load

while (!msgReady.load(memory_order_acquire)) {

this_thread::yield();

}

// Step 4: The acquire-release pair guarantees:

// - msg.id == 1 (not 0 or garbage)

// - msg.content == "Hello from Thread A" (not empty or partially written)

// - msg.value == 3.14159 (not 0.0 or garbage)

assert(msg.id == 1);

assert(msg.content == "Hello from Thread A");

assert(msg.value == 3.14159);

cout << "Received: [" << msg.id << "] "

<< msg.content << " = " << msg.value << endl;

}

int main() {

thread s(sender), r(receiver);

s.join(); r.join();

return 0;

}

Output: Received: [1] Hello from Thread A = 3.14159 (always correct)

What would happen without the release/acquire:

// BROKEN version: both use relaxed

void sender_broken() {

msg.id = 1;

msg.content = "Hello";

msg.value = 3.14;

msgReady.store(true, memory_order_relaxed); // No publish guarantee

}

void receiver_broken() {

while (!msgReady.load(memory_order_relaxed)); // No sync established

// UNDEFINED BEHAVIOR: msg writes may not be visible yet

// On ARM: may see msg.id=0, msg.content="", msg.value=0.0

// On x86: usually works due to hardware TSO, but still UB

cout << msg.id << " " << msg.content << endl;

}

On x86 (which has Total Store Order, a relatively strong hardware memory model), the broken version often works anyway. This is why memory model bugs are so insidious: they hide on development machines (often x86) and surface on production hardware (ARM servers, mobile devices, embedded systems) or after a compiler upgrade that exploits the UB.

The Four Memory Ordering Levels in Depth

Now that you understand happens-before and synchronizes-with, the memory ordering options make precise sense.

Relaxed (memory_order_relaxed)

No synchronization, no ordering constraints beyond atomicity. The operation is atomic (no torn reads/writes), but establishes no happens-before relationship with anything.

// Valid use: statistics counter — only the final total matters

atomic<int> hitCount{0};

void recordHit() {

hitCount.fetch_add(1, memory_order_relaxed);

// No need to synchronize with anything else

// The final value after all threads complete will be correct

}

// Valid use: sequence number generation — each thread gets a unique number

atomic<int> nextId{0};

int allocateId() {

return nextId.fetch_add(1, memory_order_relaxed);

// IDs are unique and each fetch_add is atomic

// But no ordering guarantee relative to other operations

}

Rule: Use relaxed when you need atomicity but not synchronization, that is, when the value is self-contained and doesn’t “guard” access to other data.
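To see why relaxed is enough for a pure counter, here is a small sketch. The helper count_hits is hypothetical; it relies on thread::join() rather than on the atomic itself to make the final total visible to the caller.

```cpp
#include <atomic>
#include <thread>
#include <vector>

// Hypothetical helper: several threads bump a relaxed counter.
int count_hits(int num_threads, int hits_per_thread) {
    std::atomic<int> hits{0};
    std::vector<std::thread> workers;

    for (int t = 0; t < num_threads; ++t) {
        workers.emplace_back([&hits, hits_per_thread] {
            for (int i = 0; i < hits_per_thread; ++i) {
                // Atomic increment with no ordering relative to other memory.
                hits.fetch_add(1, std::memory_order_relaxed);
            }
        });
    }
    for (auto& w : workers) w.join();  // join() supplies the happens-before

    return hits.load(std::memory_order_relaxed);  // exact total after join
}
```

No increment is lost because each fetch_add is an atomic read-modify-write, so count_hits(4, 10000) returns 40000. The counter does not publish any other data, which is what makes relaxed appropriate here.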

Acquire-Release (memory_order_acquire / memory_order_release)

The most important and commonly needed ordering. A release store pairs with an acquire load to create a synchronizes-with edge, establishing happens-before across threads.

// The canonical pattern: protect non-atomic data with an atomic flag

atomic<Data*> publishedData{nullptr};

// Producer: writes data, then publishes pointer with release

void produce(Data* data) {

// Fill in data...

data->value = computeExpensive();

// Release: everything above is visible to anyone who acquires this pointer

publishedData.store(data, memory_order_release);

}

// Consumer: acquires pointer, then reads data

void consume() {

Data* data;

while ((data = publishedData.load(memory_order_acquire)) == nullptr) {

this_thread::yield();

}

// Acquire: data->value is guaranteed visible here

process(data->value);

}

For read-modify-write operations: Use memory_order_acq_rel, which combines acquire (for the read part) and release (for the write part). It is used in operations like fetch_add or compare_exchange when the result must synchronize in both directions:

// Spinlock sketch: acq_rel is shown on the read-modify-write for illustration;

// acquire alone is sufficient for taking a lock

atomic<bool> locked{false};

void lock() {

bool expected = false;

while (!locked.compare_exchange_weak(expected, true, memory_order_acq_rel)) {

expected = false;

this_thread::yield();

}

// Acquire part: all writes by previous lock-holder are now visible

}

void unlock() {

locked.store(false, memory_order_release);

// Release: all writes while holding the lock are now visible to next locker

}

Sequential Consistency (memory_order_seq_cst)

The default ordering when none is specified. All seq_cst operations form a single total order that is consistent across all threads — every thread agrees on the order of all seq_cst operations globally.

atomic<bool> x{false}, y{false};

atomic<int> z{0};

// Thread 1:

x.store(true); // seq_cst store

// Thread 2:

y.store(true); // seq_cst store

// Thread 3:

if (x.load()) { // seq_cst load

if (!y.load()) { // seq_cst load

z++;

}

}

// Thread 4:

if (y.load()) { // seq_cst load

if (!x.load()) { // seq_cst load

z++;

}

}

// With seq_cst: z can be 0 or 1, but NEVER 2

// Because the global order ensures x.store and y.store have a consistent order

// All threads agree on which happened first.

// With acquire-release only: z COULD be 2 (each thread might see different orders)

Sequential consistency is the strongest (and safest) model and the one programmers intuitively expect. Its cost is extra ordering instructions on architectures with weak memory models: ARMv7 compilers typically emit DMB (Data Memory Barrier) around seq_cst operations, while ARMv8 uses the dedicated LDAR/STLR load-acquire and store-release instructions. On x86, seq_cst loads are plain loads, but seq_cst stores require an MFENCE instruction or XCHG (which is implicitly locked).

The tradeoff: seq_cst is easiest to reason about but generates more expensive memory fences on weak-memory architectures. For most application code, the performance difference is negligible. In performance-critical hot paths, carefully considered acquire/release with relaxed for pure statistics is the optimization.
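A second case where only seq_cst gives the intuitive answer is the store-buffering litmus test: each thread stores to its own flag and then loads the other. The sketch below uses a hypothetical function count_both_zero. Under seq_cst at least one thread must observe the other's store, whereas with acquire/release or relaxed both loads may return 0.

```cpp
#include <atomic>
#include <thread>

// Hypothetical litmus test: returns how many rounds ended with r1 == 0 && r2 == 0.
int count_both_zero(int rounds) {
    int both_zero = 0;
    for (int i = 0; i < rounds; ++i) {
        std::atomic<int> x{0}, y{0};
        int r1 = -1, r2 = -1;

        // Default ordering is seq_cst for every store and load below.
        std::thread a([&] { x.store(1); r1 = y.load(); });
        std::thread b([&] { y.store(1); r2 = x.load(); });
        a.join();
        b.join();

        if (r1 == 0 && r2 == 0) ++both_zero;  // forbidden by the single total order
    }
    return both_zero;
}
```

With the default ordering the function always returns 0. Weakening the four operations to release stores and acquire loads makes the both-zero outcome legal, and it can be observed even on x86, because TSO still lets a load complete before an earlier store to a different address.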

The Happens-Before Table

Here is a complete summary of what establishes happens-before in C++:

| What creates happens-before | Description |
|---|---|
| Sequenced-before (same thread) | Statements in program order within one thread |
| Release store → acquire load | Thread A’s release store synchronizes-with Thread B’s acquire load that reads the stored value |
| seq_cst store → seq_cst load | A seq_cst load that reads the value of a seq_cst store synchronizes-with it, as with release/acquire; in addition, all seq_cst operations form a single total order |
| mutex::unlock() → mutex::lock() | A mutex unlock synchronizes-with the next lock of the same mutex |
| Thread completion → thread::join() return | Everything done by the joined thread happens-before the statements after join() returns |
| std::thread constructor → start of the thread function | Completion of the constructor call synchronizes-with the beginning of the new thread’s function |
| notify_one()/notify_all() → wait() return | The ordering comes from the associated mutex: wait() re-locks it before returning, so writes made under that mutex before the notification are visible |
| promise::set_value() → future::get() | Setting a promise value synchronizes-with the future::get() that retrieves it |
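Several of these rows need no atomics at all. The sketch below uses a hypothetical function table_rows_demo to exercise thread construction, promise/future, and join() with nothing but plain ints.

```cpp
#include <future>
#include <thread>

// Hypothetical demo of three table rows using only plain (non-atomic) ints.
int table_rows_demo() {
    int input = 10;  // written before the thread starts
    int output = 0;  // written by the worker, read after join()

    std::promise<int> p;
    std::future<int> f = p.get_future();

    std::thread worker([&] {
        output = input * 2;      // sees input == 10: constructor -> thread start
        p.set_value(input + 1);  // set_value() -> get()
    });

    int from_future = f.get();    // 11, delivered through the shared state
    worker.join();                // thread completion -> join() return
    return output + from_future;  // 20 + 11
}
```

Reading output before join() returned would be a data race. After join(), the worker's write is guaranteed visible, and the function returns 31.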

Cache Coherence vs. Memory Ordering: Two Different Things

A common misconception is that “once a CPU writes to memory, all other CPUs immediately see it.” Modern CPUs do maintain cache coherence — every cache line has a single “true” value at any moment, maintained by the MESI protocol or similar. But cache coherence and memory ordering are different things.

Cache coherence guarantees that if Thread A writes to address X and Thread B later reads address X, Thread B will eventually see Thread A’s write (or a later write). It prevents two caches from disagreeing on the value of an address indefinitely.

Memory ordering controls when “eventually” becomes “now” — and critically, the order in which writes to different addresses become visible.

Thread A writes to address X, then Y: A: store X=1, store Y=1

Thread B reads from Y, then X: B: load Y, load X

Even with cache coherence, Thread B might see Y=1 but X=0 on a weakly ordered CPU. How? Thread A’s store to X can still be sitting in A’s store buffer (for example, because X’s cache line is still being fetched) while the later store to Y, whose cache line A already owns, becomes visible first. Thread B then reads Y=1 followed by X=0, which is still the old value.

This is the store reordering problem on weakly-ordered architectures. A release barrier before writing Y ensures that X’s write becomes visible to other cores no later than Y’s write, which establishes the ordering Thread B needs.

x86 (TSO model): stores become visible in program order, and loads are not reordered with other loads

Without barrier: the hardware does not let B see Y=1, X=0, but the compiler may still reorder plain or relaxed accesses

ARM (weaker model): stores can pass stores and loads can pass loads

Without barrier: B may see Y=1, X=0

With release/acquire:

Thread A: store X=1, [release barrier], store Y=1

Thread B: load Y, [acquire barrier], load X

If B's load of Y sees 1, then acquire barrier ensures X=1 is visible

This is why memory_order_relaxed is insufficient for the message-passing pattern, and why code that works on x86 may fail on ARM: x86’s TSO provides more implicit ordering than ARM’s weaker model.
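The barrier placement in the diagram maps fairly directly onto std::atomic_thread_fence. The following is a sketch under that assumption, with hypothetical names X, Y, and fence_demo. The flag itself is accessed with relaxed operations, and the two fences supply the ordering.

```cpp
#include <atomic>
#include <thread>

int X = 0;              // plain data
std::atomic<int> Y{0};  // flag, accessed with relaxed operations only

// Hypothetical driver: returns the value of X observed after seeing Y == 1.
int fence_demo() {
    X = 0;
    Y.store(0);

    std::thread a([] {
        X = 1;                                                // store X=1
        std::atomic_thread_fence(std::memory_order_release);  // [release barrier]
        Y.store(1, std::memory_order_relaxed);                // store Y=1
    });

    while (Y.load(std::memory_order_relaxed) != 1) {          // load Y
        std::this_thread::yield();
    }
    std::atomic_thread_fence(std::memory_order_acquire);      // [acquire barrier]
    int seen = X;                                             // load X

    a.join();
    return seen;
}
```

Because the release fence precedes the store to Y and the acquire fence follows the load that reads it, the two fences synchronize and fence_demo() returns 1. In everyday code, a release store paired with an acquire load on Y is usually the simpler way to express the same thing.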

Practical Mental Model: Three Rules

For everyday concurrent C++ programming, you do not need to reason about every detail of the memory model. These three rules cover the vast majority of cases:

Rule 1: No data races. Every shared mutable variable must be either protected by a mutex or be std::atomic. If two threads access the same non-atomic, non-mutex-protected variable and at least one writes, it is a data race and UB.

Rule 2: Protect data with release-acquire. When one thread prepares data and another reads it, use a release store of an atomic flag after preparing data, and an acquire load before reading data. This establishes happens-before and makes all writes visible.

Rule 3: Use seq_cst by default, optimize only with measurement. The default (no explicit ordering) gives you seq_cst, which is correct and easy to reason about. Only replace with acquire/release/relaxed if profiling shows the overhead is significant and you are confident in the semantics.

// Rule 1: No data races

atomic<int> safeCounter{0}; // OK: atomic

mutex mtx; int protectedData = 0; // OK: mutex-protected

// Rule 2: Release-acquire for message passing

atomic<bool> ready{false};

// Producer:

data = prepare();

ready.store(true, memory_order_release);

// Consumer:

while (!ready.load(memory_order_acquire)) {}

use(data); // Safe: happens-before chain established

// Rule 3: Default is seq_cst — always safe

atomic<int> x{0};

x.store(42); // seq_cst — correct on all hardware

x.fetch_add(1); // seq_cst: correct on all hardware

Real-World Implication: Double-Checked Locking

Double-checked locking is a classic pattern that was broken before C++11 precisely because there was no memory model. Understanding the memory model explains why the C++11 version works.

#include <atomic>

#include <mutex>

#include <memory>

#include <iostream>

#include <thread>

#include <vector>

using namespace std;

class Singleton {

public:

// C++11 double-checked locking — CORRECT

static Singleton* getInstance() {

// First check: fast path, no lock if already initialized

// acquire: if we see non-null, all writes to *instance_ are visible

Singleton* p = instance_.load(memory_order_acquire);

if (p == nullptr) {

lock_guard<mutex> lock(mtx_);

// Second check: in case another thread initialized between checks

p = instance_.load(memory_order_relaxed);

if (p == nullptr) {

p = new Singleton();

// release: all writes to *p are visible to threads doing acquire loads

instance_.store(p, memory_order_release);

}

}

return p;

}

void doWork() { cout << "Singleton working" << endl; }

private:

Singleton() { cout << "Singleton created" << endl; }

static atomic<Singleton*> instance_;

static mutex mtx_;

};

atomic<Singleton*> Singleton::instance_{nullptr};

mutex Singleton::mtx_;

// Pre-C++11 BROKEN version (for historical reference only):

// static Singleton* instance_ = nullptr; // Non-atomic

// ...

// if (instance_ == nullptr) { // Data race!

// lock_guard<mutex> lock(mtx_);

// if (instance_ == nullptr) { // Data race!

// instance_ = new Singleton(); // Data race!

// } // Problem: compiler may reorder steps of 'new':

// } // 1. Allocate memory

// // 2. Store pointer to instance_ <- reordered first

// // 3. Construct object <- reordered after

// // Another thread sees non-null pointer to

// // unconstructed object = crash

int main() {

// Multiple threads calling getInstance simultaneously

vector<thread> threads;

for (int i = 0; i < 5; i++) {

threads.emplace_back([]() {

Singleton::getInstance()->doWork();

});

}

for (auto& t : threads) t.join();

return 0;

}

Typical output (the five doWork() calls run concurrently, so their lines may interleave):

Singleton created

Singleton working

Singleton working

Singleton working

Singleton working

Singleton working

Step-by-step explanation:

- The pre-C++11 version was broken because the compiler could reorder the three steps of new Singleton(): allocate memory, store pointer, call constructor. Another thread could see the non-null pointer before the constructor ran, accessing an unconstructed object.

- The C++11 version uses release on the store: instance_.store(p, memory_order_release). This ensures the constructor completes before the pointer is published. Any thread that reads a non-null value through an acquire load will see the fully constructed object.

- The acquire on the outer load: instance_.load(memory_order_acquire). If this returns non-null, all writes that happened before the release store (including the constructor) are visible.

- The inner load inside the lock uses relaxed. It is protected by the mutex, which itself provides the necessary happens-before guarantees through its lock/unlock synchronization.

- Note: C++11 also guarantees that static local variable initialization is thread-safe, making static Singleton instance; inside getInstance() the simplest correct singleton. DCLP is shown here for its educational value regarding memory ordering.
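The static-local alternative mentioned in the note above could look like the following sketch. The class name Config and the helper all_threads_see_same_instance are hypothetical; the only language feature relied on is the C++11 guarantee that a function-local static is initialized exactly once, even when several threads reach it concurrently.

```cpp
#include <thread>
#include <vector>

// Hypothetical class name; a sketch of the function-local static approach.
class Config {
public:
    static Config& instance() {
        static Config inst;  // initialized exactly once, even under contention
        return inst;
    }
    Config(const Config&) = delete;
    Config& operator=(const Config&) = delete;

private:
    Config() = default;
};

// Returns true if every thread observed the same instance address.
bool all_threads_see_same_instance(int num_threads) {
    std::vector<Config*> seen(num_threads, nullptr);
    std::vector<std::thread> threads;
    for (int i = 0; i < num_threads; ++i) {
        // Each thread writes only its own slot, so the vector is not raced on.
        threads.emplace_back([&seen, i] { seen[i] = &Config::instance(); });
    }
    for (auto& t : threads) t.join();

    for (Config* p : seen) {
        if (p != seen[0]) return false;
    }
    return true;
}
```

There are no explicit atomics, mutexes, or memory orderings to get wrong here, which is why this form is generally preferred when a singleton is actually needed.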

Common Memory Model Pitfalls

Pitfall 1: Assuming x86 behavior is portable. x86 has Total Store Order (TSO) — a relatively strong hardware memory model. Code with release/acquire bugs often works on x86 but fails on ARM or RISC-V. Always write code that is correct per the C++ memory model, not just “correct on x86.”

Pitfall 2: Using volatile for threading. volatile prevents compiler caching of a variable (re-reads from memory each time). It does NOT provide atomicity, does NOT prevent data races, and does NOT establish happens-before. It is for memory-mapped I/O and signal handlers — not for inter-thread communication.

volatile int flag = 0; // WRONG for threading

// This does NOT prevent data races

// This does NOT establish happens-before

atomic<bool> flag{false}; // CORRECT

Pitfall 3: Forgetting that non-atomic operations can be reordered around atomics with relaxed ordering.

int data = 0;

atomic<bool> ready{false};

// Producer (WRONG):

data = 42;

ready.store(true, memory_order_relaxed); // No ordering — data=42 may not be visible!

// Producer (CORRECT):

data = 42;

ready.store(true, memory_order_release); // Ensures data=42 is visible

Pitfall 4: Thinking mutex::lock() alone is sufficient without unlock().

The happens-before relationship from mutexes comes from the unlock → lock pair: Thread A’s unlock synchronizes-with Thread B’s subsequent lock. If Thread A never unlocks (for example, because an exception skips a manual unlock() call), no synchronization edge is ever created and Thread B simply blocks forever waiting for the lock. This is why RAII lock guards are so important: they guarantee the unlock happens.
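A small sketch of that guarantee, using hypothetical names guarded_mtx, update_or_throw, and survive_exceptions: the function throws while holding the lock, and the loop can take the lock again only because the guard's destructor released it during stack unwinding.

```cpp
#include <mutex>
#include <stdexcept>

std::mutex guarded_mtx;  // hypothetical name

// Throws while holding the lock; lock_guard's destructor still unlocks.
void update_or_throw() {
    std::lock_guard<std::mutex> guard(guarded_mtx);
    throw std::runtime_error("update failed");
}

// Calls update_or_throw() repeatedly and counts the exceptions caught.
// Each call can lock the mutex only because the previous guard released it.
int survive_exceptions(int attempts) {
    int caught = 0;
    for (int i = 0; i < attempts; ++i) {
        try {
            update_or_throw();
        } catch (const std::runtime_error&) {
            ++caught;  // unwinding has already run the guard's destructor
        }
    }
    return caught;
}
```

If update_or_throw() had used a manual lock()/unlock() pair instead, the exception would skip the unlock and the second iteration would attempt to lock a mutex that is still held.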

Pitfall 5: Acquiring a lock and checking a stale value.

// This pattern is only correct if every write to value and updated also happens under mtx:

mutex mtx;

int value = 0;

bool updated = false;

void checker() {

lock_guard<mutex> lock(mtx);

if (updated) {

// value is only guaranteed visible if THIS is the lock acquisition

// that happened-after the lock release where updated was set to true

cout << value << endl;

}

}

// This is correct IF value and updated are only written while holding mtx.

// The happens-before chain: set value under lock → unlock → lock in checker

Conclusion

The C++ memory model is the formal foundation that makes concurrent C++ programs portable, predictable, and well-defined. Before C++11, multithreaded C++ existed in a specification vacuum — relying on platform-specific behavior and programmer luck. C++11’s memory model changed this by defining precisely what constitutes a data race (and why it causes undefined behavior), what the happens-before relation is and how it is established, and what the six memory orderings mean in terms of the ordering guarantees they provide.

The central insight is that compilers and CPUs optimize freely within the rules. They reorder instructions, buffer stores, and eliminate redundant reads — all valid in data-race-free code. A data race gives the optimizer permission it should not have: to assume the racing variable is not modified by another thread, leading to infinite loops, incorrect values, and crashes that appear unrelated to the threading code.

The acquire-release model — the practical workhorse of the memory model — establishes happens-before across threads through synchronizes-with: a release store in one thread synchronizes with an acquire load of the same value in another thread, making all writes preceding the release visible after the acquire. This is the mechanism behind mutexes, condition variables, shared_ptr reference counting, and every well-written lock-free algorithm.

For daily concurrent C++ programming: use mutexes and condition variables for most synchronization needs (they handle the memory ordering for you), use std::atomic with the default seq_cst ordering when you need atomic operations without a mutex, and apply acquire/release/relaxed only when profiling shows the overhead is significant and you are confident in the semantics. The memory model exists to make your programs correct — understanding it deeply is what makes you confident they are.