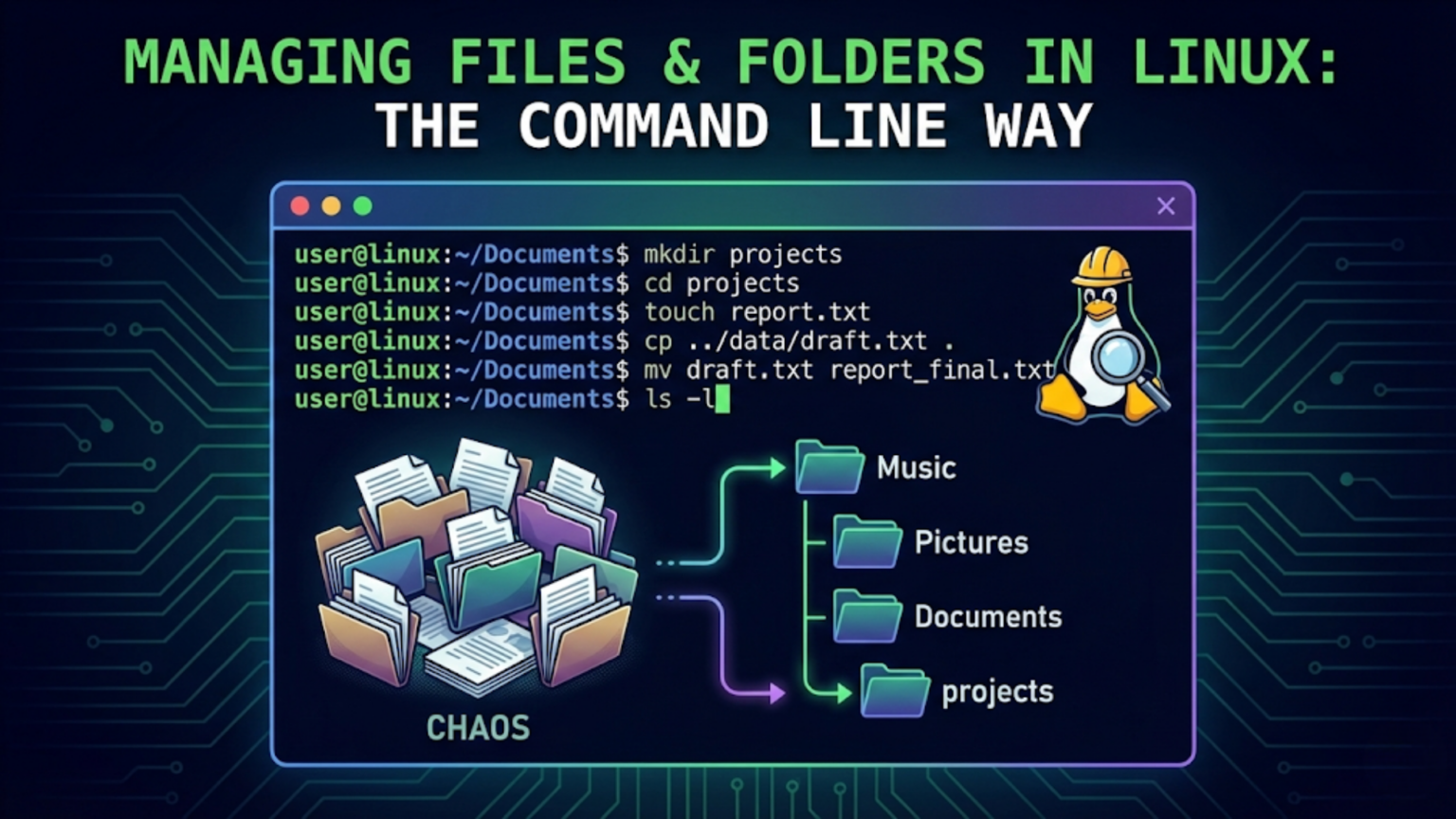

Managing files and folders in Linux from the command line uses a core set of commands: mkdir creates directories, touch creates files, cp copies them, mv moves or renames them, rm deletes them, and find locates them anywhere on the system. Combined with wildcards and pipes, these commands give you precise control over your file system — handling in seconds what would take minutes through a graphical file manager, especially when working with large numbers of files.

Introduction: The Command Line File Manager

Every Linux user eventually discovers that the terminal is the most powerful file manager available — not despite its text-based nature, but because of it. A graphical file manager excels at visual browsing, drag-and-drop operations, and simple single-file tasks. But when you need to rename 500 files according to a pattern, find every log file older than 30 days across an entire directory tree, copy only JPEG files from dozens of subfolders into one location, or delete all files matching a specific name pattern — the command line is faster, more precise, and more capable than any graphical alternative.

This article covers file and folder management from the Linux terminal comprehensively. We start with the fundamental commands introduced in the basic commands article and go deeper — exploring their full capabilities, their most powerful options, the wildcard patterns that multiply their usefulness, and the combinations that make complex file operations elegant and efficient.

By the end of this article, you will be able to handle any file management task from the terminal with confidence: organizing project directories, performing bulk operations on groups of files, searching the entire file system for specific content or attributes, working with archives, and understanding the nuances of copying versus moving, linking versus copying, and finding versus locating.

Foundation Review: The Core File Commands

Before advancing to more sophisticated techniques, let us briefly consolidate the core commands from a file management perspective, focusing on their practical depth rather than just their basics.

mkdir: Creating Directory Structures

You already know that mkdir creates directories. In real-world file management, the -p option is what makes it truly useful because it allows creating entire directory hierarchies in a single command.

$ mkdir -p ~/projects/2026/client-alpha/reports

This creates all four levels of directories at once, even if none of them existed before. Without -p, you would need to create each level separately: first projects, then projects/2026, then projects/2026/client-alpha, and finally reports.
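Here is a minimal bash sketch of the difference -p makes. It runs inside a throwaway directory created with mktemp -d, so nothing in your home folder is touched. The directory names are illustrative:

```shell
#!/usr/bin/env bash
set -euo pipefail

# Work in a disposable directory that is removed when the script exits
workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

# Without -p, mkdir refuses to create a path whose parents are missing
if mkdir projects/2026/client-alpha/reports 2>/dev/null; then
    echo "unexpected: plain mkdir succeeded"
else
    echo "plain mkdir failed: parent directories do not exist"
fi

# With -p, every missing level is created in one command
mkdir -p projects/2026/client-alpha/reports

# Running it again is harmless: -p does not complain about existing directories
mkdir -p projects/2026/client-alpha/reports

find projects -type d | sort
```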

Creating multiple sibling directories in one command:

$ mkdir -p ~/projects/website/{html,css,javascript,images,fonts}

The curly braces with comma-separated values (called brace expansion) make the shell expand the argument into five separate paths before mkdir runs, so mkdir receives all five directory names inside website/. One command creates the whole structure, including website/ itself if it does not exist yet. The same layout would otherwise take six separate mkdir commands, or six drag-and-drop operations in a file manager.

$ ls ~/projects/website/

css fonts html images javascript

cp: Copying with Purpose

The copy command has considerably more depth than beginners typically use.

Copy and preserve all attributes:

$ cp -a source destination

The -a (archive) flag is equivalent to -dR --preserve=all in GNU cp. It copies directories recursively, preserves symbolic links as links (rather than copying their targets), and preserves file attributes such as permissions, timestamps, and ownership (ownership can only be preserved when you have the privileges to set it, typically as root). This is the correct flag for making backup copies where you want the destination to match the source as closely as possible.

Copy only newer files:

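$ cp -u source/* destination/

The -u (update) flag copies a file only if the source is newer than the destination or the destination does not exist. This makes it an efficient way to refresh an existing copy of a folder: only changed files are copied, and unchanged files are left untouched. It is a one-way update, though. Files deleted from the source stay in the destination, and source/* covers only the top level unless you add -r.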

Show what is being copied:

$ cp -rv source/ destination/

The -v (verbose) flag prints each filename as it is copied. Combined with -r for recursive directory copying, this gives you a running progress report for large copy operations.

Copy multiple files to a directory:

$ cp file1.txt file2.txt file3.txt destination_directory/

Avoid overwriting existing files:

$ cp -n source.txt destination.txt

The -n (no-clobber) flag prevents cp from overwriting an existing destination file. This is useful when you want to copy files into a directory that already contains some files without risking overwriting newer versions.

Interactive mode — ask before overwriting:

$ cp -i source.txt destination.txt

The -i flag prompts for confirmation before overwriting any existing file. It is a useful safety habit when copying into directories that may already contain files.
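The effects of -a and -u are easiest to see through timestamps. This bash sketch assumes GNU cp, touch, and stat (stat -c is GNU syntax). It uses illustrative file names in a temporary directory:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

mkdir source destination
echo "original notes" > source/notes.txt
# Give the source file an old timestamp so the effect of -a is visible
touch -d '2020-01-01 12:00' source/notes.txt

# -a keeps the timestamp; a plain cp would stamp the copy with the current time
cp -a source/notes.txt destination/notes.txt
src_time=$(stat -c %Y source/notes.txt)
copy_time=$(stat -c %Y destination/notes.txt)
echo "source mtime: $src_time, copy mtime: $copy_time"

# Edit the destination so it is newer than the source
echo "edited in destination" > destination/notes.txt

# -u skips the copy because the destination is newer than the source
cp -u source/notes.txt destination/notes.txt
cat destination/notes.txt   # still "edited in destination"
```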

mv: Moving and Renaming

mv is simultaneously the move command and the rename command — renaming is simply moving a file to a different name in the same directory.

Rename a file:

$ mv old_report.docx final_report_v2.docx

Move a file to a directory:

$ mv report.pdf ~/Documents/reports/

Move multiple files to a directory:

$ mv *.pdf ~/Documents/reports/

Move and rename simultaneously:

$ mv ~/Downloads/document.pdf ~/Documents/projects/client_proposal.pdf

Prevent accidental overwriting:

$ mv -i source.txt destination.txt

Move only if destination does not exist or source is newer:

$ mv -u source.txt destination/

Show what is being moved:

$ mv -v *.jpg ~/Pictures/vacation/

One important difference between cp and mv: when moving files within the same filesystem, mv is nearly instantaneous regardless of file size, because it only updates directory entries rather than copying data. Moving a 10 GB file within the same filesystem takes milliseconds; copying that same file takes significant time. When moving across different filesystems (different drives or partitions), mv must actually copy the data and then delete the original, so it takes as long as a copy operation.
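You can check the "only updates the directory entry" behavior yourself with this bash sketch. It assumes GNU coreutils, and the file names are illustrative. The script records a file's inode number before and after a move within one filesystem, then compares it with the inode of a copy:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

mkdir -p reports
# A 10 MiB file of zeros stands in for a large document
head -c 10M /dev/zero > big_report.pdf
inode_before=$(stat -c %i big_report.pdf)

# Same filesystem: mv only rewrites directory entries, so the inode survives
mv big_report.pdf reports/final_report.pdf
inode_after=$(stat -c %i reports/final_report.pdf)
echo "inode before: $inode_before, after: $inode_after"

# cp, by contrast, writes a brand-new file with its own inode
cp reports/final_report.pdf copy.pdf
echo "inode of the copy: $(stat -c %i copy.pdf)"
```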

rm: Removing Safely

Deletion in the terminal is permanent and immediate. Developing careful habits around rm is essential.

Delete a single file:

$ rm filename.txt

Always use interactive mode when uncertain:

$ rm -i *.log

rm: remove regular file 'error.log'? y

rm: remove regular file 'access.log'? n

rm: remove regular file 'debug.log'? y

With -i, you are prompted for each file, which lets you selectively remove files from a pattern match.

Delete a directory and its contents:

$ rm -r directory/

Verify before a dangerous rm by running ls first:

$ ls *.tmp

cache.tmp session.tmp temp_data.tmp

$ rm *.tmp

Previewing with ls using the same pattern before rm is an excellent safety habit. You see exactly what will be deleted before committing.
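Typing the pattern twice leaves room for a typo, and the set of matching files can change between the two commands. A variation on the same habit expands the pattern once into a bash array, prints that list, and then deletes exactly those files. This is a minimal sketch with illustrative file names:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

touch cache.tmp session.tmp temp_data.tmp keep.txt

# nullglob makes an unmatched pattern expand to nothing instead of itself
shopt -s nullglob

# Expand the pattern once and store the exact list of matches
targets=( *.tmp )

if (( ${#targets[@]} == 0 )); then
    echo "Nothing matches *.tmp"
else
    # Preview: this is exactly the list rm will receive
    printf 'Will delete: %s\n' "${targets[@]}"
    # -- ends option parsing, so a file named like "-rf" is treated as a name
    rm -- "${targets[@]}"
fi

ls
```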

Using trash instead of rm: On systems with the trash-cli package installed:

$ trash filename.txt

Files sent to the trash can be restored later (trash-cli includes a trash-restore command for this). Install it with sudo apt install trash-cli, and use trash instead of rm for deletions where recovery might matter.

Wildcards: Multiplying Command Power

Wildcards are patterns used in the shell to match multiple filenames at once. They are one of the most powerful features of the Linux command line for file management, allowing you to operate on groups of files that share naming characteristics without listing each file individually.

The * Wildcard (Asterisk)

* matches any sequence of characters (including no characters at all) in a filename.

List all files with a .txt extension:

$ ls *.txt

notes.txt readme.txt todo.txt

Copy all JPEG images to a backup folder:

$ cp *.jpg ~/Pictures/backup/

Delete all files starting with “temp”:

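$ rm temp*

Use wildcards in the directory part of a path:

$ ls ~/projects/*/reports/*.pdf

This matches any PDF file in a reports subfolder of any project directory. Each * matches within a single path component, so the pattern looks exactly one level below ~/projects; it does not search deeper subdirectories (use find for that).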

The ? Wildcard (Question Mark)

? matches exactly one character.

Match files with a single character before the extension:

$ ls file?.txt

file1.txt file2.txt fileA.txt

This would match file1.txt but not file10.txt (which has two characters after “file”).

Match files with exactly 8-character names:

$ ls ????????.log

access01.log error_db.log

The [] Wildcard (Character Classes)

[characters] matches any single character from the specified set.

Match files ending in a number:

$ ls *[0-9].txt

report1.txt report2.txt report9.txt

Match files starting with a vowel:

$ ls [aeiouAEIOU]*

audio.mp3 event.log output.txt

Match files starting with letters a through f:

$ ls [a-f]*

archive.tar backup.sh config.conf data.csv error.log favicon.ico

Negate a character class with !:

$ ls [!0-9]*

Matches files that do NOT start with a digit.
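You can try the patterns above side by side without touching real files. This bash sketch creates illustrative names in a temporary directory. It sets LC_ALL=C so that sorting and character ranges follow plain ASCII order. The output also shows a detail worth knowing before using * with rm: wildcards do not match a leading dot, so hidden files are left out unless the pattern itself starts with a dot.

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

# C locale: plain byte ordering and ASCII-only character ranges
export LC_ALL=C

touch file1.txt file2.txt file10.txt fileA.txt notes.txt 2026_plan.txt .hidden.txt

all_txt=( *.txt )          # * matches any run of characters, but not a leading dot
one_char=( file?.txt )     # ? matches exactly one character
ends_digit=( *[0-9].txt )  # [0-9] matches one character from the range
no_digit=( [!0-9]* )       # [!0-9] negates the class

# .hidden.txt appears in none of these lists
printf '*.txt      -> %s\n' "${all_txt[*]}"
printf 'file?.txt  -> %s\n' "${one_char[*]}"
printf '*[0-9].txt -> %s\n' "${ends_digit[*]}"
printf '[!0-9]*    -> %s\n' "${no_digit[*]}"
```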

Brace Expansion

Brace expansion {option1,option2,option3} generates multiple strings, making it possible to operate on multiple specific items in one command:

$ mkdir -p project/{src,tests,docs,config,build}

Creates five directories at once.

$ cp config.{json,yaml,toml} ~/backup/

Copies three specific files at once.

$ mv report_{draft,final}.pdf ~/Documents/

Moves two specifically named files.
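Brace expansion and wildcards look similar, but they work differently. Braces generate every listed string whether or not a matching file exists. A wildcard expands only to names that are actually present. This is why cp config.{json,yaml,toml} reports an error for any of the three files that is missing. A short bash sketch with illustrative file names makes the difference visible:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

touch config.json config.yaml    # note: there is no config.toml

# Brace expansion just generates words; it never checks the file system
braces=$(echo config.{json,yaml,toml})

# A glob expands only to names that actually exist
globbed=$(echo config.*)

echo "braces: $braces"   # braces: config.json config.yaml config.toml
echo "glob:   $globbed"  # glob:   config.json config.yaml

# Braces nest and combine with fixed text, which suits directory skeletons
mkdir -p project/{src/{lib,bin},tests,docs}
find project -type d | sort
```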

Finding Files: locate and find

The ability to find specific files anywhere in the file system is one of the terminal’s most powerful capabilities.

locate: Fast Keyword Search

locate searches a pre-built database of file paths for matches to your search term. Because it searches a database rather than the live file system, it is extremely fast.

$ locate filename.txt

/home/sarah/Documents/filename.txt

/home/sarah/backup/filename.txt

$ locate -i "readme"

The -i flag makes the search case-insensitive.

$ locate "*.conf" | grep nginx

/etc/nginx/nginx.conf

/etc/nginx/sites-available/default.conf

Limitation of locate: The database is updated periodically (typically once daily by a cron job or systemd timer), not in real time. Files created or deleted since the last database update will not appear correctly in results. Update the database manually:

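$ sudo updatedb

locate may not be installed by default on all distributions:

$ sudo apt install mlocate # Ubuntu/Debian

$ sudo dnf install mlocate # Fedora

On newer releases the package may be called plocate instead; it provides a compatible locate command.

find: Comprehensive Real-Time Search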

find searches the live file system in real time, with far more filtering capabilities than locate. It is slower but current and extraordinarily flexible.

Basic syntax:

$ find [starting_directory] [criteria] [action]

Find files by name:

$ find ~ -name "report.txt"

/home/sarah/Documents/report.txt

Find files by name with wildcards (quote the pattern so the shell passes it to find instead of expanding it first):

$ find ~ -name "*.pdf"

Case-insensitive name search:

$ find /var -iname "*.log"

Find only files (not directories):

$ find ~/Documents -type f -name "*.txt"

Find only directories:

$ find ~ -type d -name "backup*"

Find files by size:

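$ find ~ -size +100M # Files larger than 100 megabytes

$ find ~ -size -1024c # Files smaller than 1 kilobyte (1024 bytes)

$ find ~/Downloads -size +500M # Large files in Downloads

find rounds each file's size up to whole units before comparing. A 500-byte file therefore counts as 1k, and -size -1k would match only empty files. Counting in bytes with the c suffix avoids that trap.

Find files modified in the last N days:

$ find ~ -mtime -7 # Modified in the last 7 days

$ find /var/log -mtime +30 # Not modified in more than 30 days (old logs)

Find files by permissions: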

$ find ~ -perm 644 # Files with exactly 644 permissions

$ find /tmp -perm /o+w # Files writable by others (security check)

Find files owned by a specific user:

$ find /home -user sarah

Find and execute a command on results:

The -exec option runs a command on each file found. The {} placeholder represents the found file, and \; ends the command:

$ find ~/old_project -name "*.tmp" -exec rm {} \;

Finds and deletes all .tmp files in old_project.

$ find ~/Documents -name "*.txt" -exec cp {} ~/backup/ \;

Copies all .txt files found in Documents to a backup folder.

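Ending the command with + instead of \; passes the matching files to the command in large batches rather than one at a time, which is faster. With +, the {} placeholder must come last, immediately before the +. For cp, you therefore name the destination first with GNU cp's -t option:

$ find ~/Documents -name "*.txt" -exec cp -t ~/backup/ {} +

Find empty files and directories: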

$ find ~ -empty -type f # Empty files

$ find ~ -empty -type d # Empty directories

Combine multiple criteria:

$ find ~/Downloads -type f -name "*.zip" -size +10M -mtime -30

Finds ZIP files larger than 10MB in Downloads that were modified in the last 30 days.
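The rounding rule for -size and the way multiple tests combine are easiest to see on a small throwaway tree. This bash sketch assumes GNU find, head, and touch. The file names and sizes are illustrative:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

mkdir -p Downloads/nested
: > Downloads/empty.zip                           # 0 bytes
head -c 500 /dev/zero > Downloads/small.zip       # 500 bytes
head -c 11M /dev/zero > Downloads/nested/big.zip  # 11 MiB
touch -d '60 days ago' Downloads/nested/big.zip   # old, so -mtime -30 excludes it
head -c 11M /dev/zero > Downloads/recent.zip      # 11 MiB, modified now

# -size rounds each file up to whole units first: 500 bytes counts as 1k,
# so "-size -1k" ends up matching only the empty file
rounded=$(find Downloads -type f -size -1k | sort)

# Counting in bytes (c suffix) gives the intuitive "smaller than 1 KiB"
bytes=$(find Downloads -type f -size -1024c | sort)

# Tests written side by side are ANDed together
combined=$(find Downloads -type f -name "*.zip" -size +10M -mtime -30)

printf 'size -1k:\n%s\n\nsize -1024c:\n%s\n\ncombined:\n%s\n' \
    "$rounded" "$bytes" "$combined"
```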

Exclude directories from find:

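$ find ~ -name "*.log" -not -path "*/node_modules/*"

Finds .log files and leaves anything inside node_modules directories out of the results. Note that find still walks through those directories. The -prune action skips them entirely, which is faster on large trees.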
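You can test exclusion and batched execution together on a small throwaway tree. This sketch assumes GNU find and GNU cp, and the names are illustrative. It uses -prune, which stops find from descending into node_modules at all rather than filtering the results afterwards. It then copies the matches with -exec ... {} +. Because that form requires {} to come last, the destination is given first with cp -t:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

mkdir -p app/logs app/node_modules/pkg backup
touch app/logs/server.log app/logs/worker.log app/node_modules/pkg/install.log

# -prune stops the descent into node_modules; -o (OR) handles everything else.
# The explicit -print keeps the pruned directory itself out of the output.
logs=$(find app -path "*/node_modules" -prune -o -name "*.log" -print | sort)
echo "$logs"

# Batch form: {} must sit right before +, so the destination goes in cp -t
find app -path "*/node_modules" -prune -o -name "*.log" -exec cp -t backup/ {} +

ls backup
```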

Working with File Content from the Command Line

Beyond basic file operations, managing file content directly from the terminal is a core skill.

Creating Files with Content

Create a file and write content immediately:

$ cat > shopping.txt

Milk

Eggs

Bread

Apples

Press Ctrl+D on a new line to save and exit.

Append content to an existing file:

$ cat >> shopping.txt

Coffee

Butter

Press Ctrl+D to finish.

Create a file with a single line using echo:

$ echo "This is a note" > note.txt

$ echo "Additional line" >> note.txt

The > operator creates (or overwrites) the file; >> appends to it.
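In a script there is no keyboard to press Ctrl+D, so a here-document does the job of cat > file instead. This bash sketch uses illustrative file names. It writes a file in one step, appends with >>, and shows that a second > starts the file over:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

# Everything up to the EOF line goes into shopping.txt; quoting 'EOF'
# stops the shell from expanding $variables inside the text
cat > shopping.txt <<'EOF'
Milk
Eggs
Bread
Apples
EOF

# >> appends instead of replacing
cat >> shopping.txt <<'EOF'
Coffee
Butter
EOF
wc -l < shopping.txt    # prints the number of lines in the file

echo "This is a note" > note.txt
echo "Additional line" >> note.txt
echo "Replacement" > note.txt   # > truncates, so only this line remains
cat note.txt
```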

Viewing and Comparing Files

View the differences between two files:

$ diff file1.txt file2.txt

2c2

< old content on line 2

---

> new content on line 2

4d3

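< this line was deleted

The diff output shows which lines differ between the files. Lines preceded by < come from the first file; lines preceded by > come from the second. Each header names the affected line numbers and the type of change. In this output, 2c2 means line 2 of the first file was changed into line 2 of the second. 4d3 means line 4 of the first file was deleted (it would have followed line 3 of the second). This is invaluable when you have two versions of a configuration file and need to see exactly what changed.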

Side-by-side comparison:

$ diff -y file1.txt file2.txt

Compare directories:

$ diff -r directory1/ directory2/

Shows all differences between files in two directory trees, which is useful for finding what changed between a backup and the current version.
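This bash sketch shows how the exit status and the hunk headers fit together. It recreates the two files from the example above with illustrative contents and captures diff's output. It assumes GNU diff, whose default output is the normal format shown earlier. diff exits with status 1 whenever the files differ, so scripts that use set -e need to capture that status explicitly:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

printf '%s\n' "line 1" "old content on line 2" "line 3" "this line was deleted" > file1.txt
printf '%s\n' "line 1" "new content on line 2" "line 3" > file2.txt

# diff exits 0 when files match, 1 when they differ, 2 on trouble;
# capture the status so that "set -e" does not stop the script on a difference
status=0
output=$(diff file1.txt file2.txt) || status=$?

echo "$output"
echo "exit status: $status"
```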

Searching Within Files

We covered grep in the basic commands article. For file management, a few additional grep patterns are particularly useful:

Find files containing specific text:

$ grep -rl "database_password" ~/projects/

The -r flag searches recursively through all files in the directory; -l outputs only the filenames of matching files rather than the matching lines. This finds every file that contains the text “database_password”, which is useful for security audits or for finding where configuration values are set.
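This small bash sketch shows that -l reports each matching file once, however many lines in it match. The directory and file names are illustrative. The output is piped through sort because grep -r visits files in directory order, which is not guaranteed to be alphabetical:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

mkdir -p projects/api projects/web
printf '%s\n' 'database_password = "changeme"' '# rotate database_password yearly' \
    > projects/api/settings.ini
echo 'timeout = 30' > projects/api/other.ini
echo '# set database_password in settings.ini' > projects/web/README.txt

# -l lists each matching file once; other.ini has no match and is not listed
files=$(grep -rl "database_password" projects | sort)
echo "$files"
```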

Search for a pattern and show surrounding context:

$ grep -n -B 2 -A 2 "error" /var/log/syslog | tail -30

-n shows line numbers, -B 2 shows 2 lines before each match, and -A 2 shows 2 lines after it, giving context around each error message. tail -30 limits the output to the last 30 lines of results.

Symbolic Links: Pointers in the File System

Symbolic links (symlinks) are one of the Linux file system’s most useful features — they are files that point to another file or directory, creating an alias or shortcut that can be placed anywhere in the file system.

Creating Symbolic Links

$ ln -s /path/to/target /path/to/link

The -s flag creates a symbolic link (without it, ln creates a hard link, which is different).

Practical example: link a configuration directory:

$ ln -s ~/projects/website/config ~/.config/website

Now accessing ~/.config/website is equivalent to accessing ~/projects/website/config. The actual files live in one place; the link provides an alternative path to reach them.

Linking a frequently used directory to a convenient location:

$ ln -s /var/log/nginx ~/logs

$ ls ~/logs

access.log error.log

Now ~/logs provides quick access to the nginx log directory from your home folder.

Identifying symlinks:

$ ls -la

lrwxrwxrwx 1 sarah sarah 14 Feb 18 15:22 logs -> /var/log/nginx

The l at the beginning of the permissions string indicates a symlink, and the -> shows what it points to. The size column (14) is simply the length of the stored path.

Removing a symlink:

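$ rm linkname

Use rm (or unlink linkname) to remove the link itself; the target is not affected. Do not add a trailing slash when the link points to a directory. With the slash, rm -r linkname/ refers to the target directory rather than the link, so it can delete the target's contents.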

Hard Links vs. Symbolic Links

A symbolic link is a file that contains a path to another file. If the target file is deleted, the symlink is broken (dangling). Symlinks can point to files on different filesystems and can point to directories.

A hard link is another name for the same file — both names point directly to the same data on disk. Deleting one hard link does not remove the data; the data remains until all hard links to it are removed. Hard links cannot span filesystems and cannot link to directories.

For most practical purposes, symbolic links are what you want. They are more visible (clearly shown as links by ls -l), more flexible (can cross filesystems, can link to directories), and more intuitive.
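The difference between the two link types shows up as soon as the original name is deleted. This bash sketch assumes GNU stat for the link count, and the names are illustrative. It creates one link of each kind and removes the original. It then removes a symlink to a directory, written without a trailing slash:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

echo "important data" > original.txt
ln -s original.txt soft.txt    # symbolic link: stores the path "original.txt"
ln original.txt hard.txt       # hard link: a second name for the same inode

stat -c '%h links  %n' original.txt   # link count is now 2

rm original.txt

# The symlink still exists but points at nothing (dangling)
if [ -L soft.txt ] && [ ! -e soft.txt ]; then
    echo "soft.txt is dangling"
fi

# The hard link still reaches the data
cat hard.txt

# Removing a symlink to a directory leaves the directory alone
mkdir target_dir
touch target_dir/keep.me
ln -s target_dir dir_link
rm dir_link                 # no trailing slash: removes only the link
ls target_dir
```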

Archiving and Compressing Files

Archiving creates a single file from multiple files and directories. Compression reduces file sizes. Linux provides powerful tools for both.

tar: The Archiving Standard

tar (tape archive) is the standard Linux archiving tool. Despite its age, it remains the universal choice for creating archives on Linux.

Create a compressed tar archive (.tar.gz or .tgz):

$ tar -czvf archive.tar.gz directory/

Breaking down the options:

-c: create a new archive

-z: compress with gzip

-v: verbose (show files being added)

-f archive.tar.gz: the output filename

Extract a .tar.gz archive:

$ tar -xzvf archive.tar.gz

-x: extract

-z: decompress with gzip

-v: verbose

-f archive.tar.gz: the archive to extract
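A quick round trip confirms the options behave as described. This sketch assumes GNU tar, uses illustrative names, and drops -v to keep the output short. It creates an archive, lists its contents, extracts it into a separate folder with -C, and compares the result with diff -r:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

mkdir -p directory/sub restored
echo "alpha" > directory/a.txt
echo "beta" > directory/sub/b.txt

# c = create, z = gzip, f = archive name (f comes last, right before the name)
tar -czf archive.tar.gz directory/

# t = list the members without extracting
tar -tzf archive.tar.gz | sort

# x = extract; -C switches into restored/ first (it must already exist)
tar -xzf archive.tar.gz -C restored/

# diff -r exits 0 only if the two trees are identical
diff -r directory restored/directory && echo "round trip OK"
```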

Extract to a specific directory:

$ tar -xzvf archive.tar.gz -C /destination/path/

List contents without extracting:

$ tar -tzvf archive.tar.gz

Create a .tar.bz2 archive (better compression, slower):

$ tar -cjvf archive.tar.bz2 directory/

Create a .tar.xz archive (best compression, slowest):

$ tar -cJvf archive.tar.xz directory/

Working with ZIP Files

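ZIP is the most common archive format on Windows. Linux creates and extracts ZIP files with the zip and unzip utilities, which some distributions do not install by default (add them with your package manager if the commands are missing).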

Create a ZIP archive:

$ zip -r archive.zip directory/

Extract a ZIP archive:

$ unzip archive.zip

Extract to a specific directory:

$ unzip archive.zip -d /destination/path/

List ZIP contents without extracting:

$ unzip -l archive.zip

gzip, bzip2, and xz: Compressing Individual Files

These tools compress single files (unlike tar, which archives multiple files into one):

$ gzip filename.txt # Creates filename.txt.gz, deletes original

$ gzip -d filename.txt.gz # Decompress

$ gzip -k filename.txt # Compress but keep original (-k flag)

$ bzip2 filename.txt # Creates filename.txt.bz2, deletes original

$ bzip2 -d filename.txt.bz2 # Decompress

$ xz filename.txt # Creates filename.txt.xz (best compression), deletes original

$ xz -d filename.txt.xz # Decompress

Batch File Operations: Power in Practice

The real advantage of command-line file management emerges in batch operations — performing the same operation on many files at once.

Renaming Multiple Files

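The rename command (may need installation: sudo apt install rename) renames files using Perl regular expressions. The examples below assume this Perl-based rename, which is the version Debian and Ubuntu install. Some distributions, such as Fedora, ship the util-linux rename instead, which uses a different, incompatible syntax: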

Change all .txt extensions to .md:

$ rename 's/\.txt$/.md/' *.txt

Convert all filenames to lowercase:

$ rename 'y/A-Z/a-z/' *

Add a prefix to all files:

$ rename 's/^/2026_/' *.jpg

Renames all .jpg files to start with “2026_”.
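If the Perl rename is not installed, you can reproduce the first example (changing .txt to .md) in plain bash with parameter expansion. The file names in this sketch are illustrative. Quoting "$file" keeps names that contain spaces intact:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

touch notes.txt "meeting notes.txt" readme.txt

shopt -s nullglob   # if nothing matches, the loop body never runs
for file in *.txt; do
    # ${file%.txt} strips the trailing ".txt" from the name
    mv -- "$file" "${file%.txt}.md"
done
ls
```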

For simpler renames without the rename command, a bash loop works on any system:

Add a prefix using a loop:

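$ for file in *.jpg; do mv "$file" "vacation_${file}"; done

Remove spaces from filenames (replace with underscores):

$ for file in *\ *; do mv "$file" "${file// /_}"; done

Copying Files by Type from a Deep Directory Tree

$ mkdir -p ~/flac_backup

$ find ~/Music -name "*.flac" -exec cp {} ~/flac_backup/ \;

Finds every FLAC file anywhere under ~/Music and copies it to a backup folder. The destination sits outside ~/Music so that find does not also visit the copies it has just made. Files with the same name in different folders will overwrite one another in the destination.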

Moving Old Files to Archive

$ find ~/Downloads -mtime +90 -type f -exec mv {} ~/Archives/old_downloads/ \;

Finds all files in Downloads last modified more than 90 days ago and moves them to an archive folder (which must already exist). This keeps Downloads clean without permanent deletion.
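You can try the age test safely by backdating a file with touch -d. This sketch assumes GNU touch, find, and mv (mv -t is a GNU option), and the file names are illustrative. It previews the matches first and then moves them in one batch:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

mkdir -p Downloads Archives/old_downloads
touch Downloads/fresh.pdf
touch -d '120 days ago' Downloads/stale.iso    # backdate the modification time

# Preview first: -print shows exactly which files qualify
find Downloads -type f -mtime +90 -print

# Then move them; mv -t names the destination so {} can come last before +
find Downloads -type f -mtime +90 -exec mv -t Archives/old_downloads/ {} +

ls Downloads Archives/old_downloads
```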

Deleting Empty Directories

$ find ~/projects -type d -empty -delete

Finds and deletes all empty directories within ~/projects, which cleans up after moving files out of folders.

Counting Files in Subdirectories

$ find ~/Pictures -type d | while IFS= read -r dir; do
    count=$(find "$dir" -maxdepth 1 -type f | wc -l)
    echo "$count $dir"
  done | sort -rn | head -10

Shows the 10 directories in Pictures with the most files, which is useful for finding which event or year has the most photos. IFS= and read -r keep leading spaces and backslashes in directory names intact.

rsync: The Powerful Synchronization Tool

rsync is the standard Linux tool for synchronizing directories — copying only what has changed between a source and destination. It is faster than cp for incremental copies and has powerful options for controlling exactly what gets synchronized.

Basic rsync — synchronize source to destination:

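$ rsync -av ~/Documents/ ~/backup/Documents/

-a: archive mode (preserves permissions, timestamps, symlinks)

-v: verbose output

The trailing slash on the source matters. ~/Documents/ copies the contents of Documents into the destination, while ~/Documents (no slash) creates a Documents directory inside it.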

Rsync with progress indicator:

$ rsync -av --progress ~/Documents/ ~/backup/Documents/

Preview what rsync would do (dry run):

$ rsync -av --dry-run ~/Documents/ ~/backup/Documents/

The --dry-run flag shows exactly what would be copied or deleted without actually doing it, which is invaluable before running a major synchronization.

Delete files from destination that no longer exist in source:

$ rsync -av --delete ~/Documents/ ~/backup/Documents/

Without --delete, rsync only adds and updates files in the destination; files deleted from the source remain in the backup. With --delete, the backup becomes a true mirror of the source.

Exclude files or directories:

$ rsync -av --exclude="*.tmp" --exclude=".cache/" ~/Documents/ ~/backup/Documents/

rsync over SSH (copy to a remote server):

$ rsync -av ~/Documents/ user@remotehost:~/backup/Documents/

rsync over SSH is one of the most practical tools for Linux users who manage remote servers or want to maintain synchronized copies of files between computers.

Disk Usage Analysis: Finding Space Hogs

When disk space runs low, quickly identifying what is consuming the most space is essential.

du with Sorting

$ du -sh ~/Downloads/* | sort -rh | head -20

This sequence:

- du -sh ~/Downloads/*: shows the total size of each item in Downloads (-s summarizes each argument, -h prints human-readable sizes)
- sort -rh: sorts those human-readable sizes (-h) in reverse order (-r), so the largest come first
- head -20: shows only the top 20 results

The result is a ranked list of the 20 largest items in your Downloads folder.

Find the largest directories in your home folder:

$ du -sh ~/*/ | sort -rh | head -10

Find all files larger than 1 GB anywhere in your home directory:

$ find ~ -type f -size +1G -exec ls -lh {} \;

ncdu: Interactive Disk Usage Analyzer

ncdu (ncurses disk usage) provides an interactive, terminal-based disk usage analyzer that makes browsing large directory trees easy:

$ sudo apt install ncdu

$ ncdu ~

It presents a navigable tree showing directories sorted by size. Use arrow keys to navigate, Enter to drill into a directory, and d to delete selected items. It is one of the most useful tools for understanding and managing disk space.

File Permissions in File Management Context

File permissions control who can read, write, and execute files and directories. While permissions are covered in depth in a later article, understanding them in the context of file management is important.

Checking Permissions

$ ls -la

-rw-r--r-- 1 sarah sarah 1024 Feb 18 09:22 notes.txt

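drwxr-xr-x 3 sarah sarah 4096 Feb 17 14:31 Documents

The first character shows the file type (- for a regular file, d for a directory). The remaining nine characters of the permission string (rw-r--r-- for notes.txt) form three groups:

- Owner permissions: rw- (read and write, no execute)
- Group permissions: r-- (read only)
- Others permissions: r-- (read only)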

Changing Permissions

$ chmod 644 notes.txt # Owner: rw, Group: r, Others: r

$ chmod 755 script.sh # Owner: rwx, Group: rx, Others: rx

$ chmod +x script.sh # Add execute permission for all

$ chmod a-w notes.txt # Remove write permission for everyone (a = all)

Changing Ownership

$ sudo chown sarah:sarah filename.txt # Change owner and group

$ sudo chown -R sarah:sarah directory/ # Recursive ownership change

These commands become important when files end up owned by root after being created with sudo, or when moving files between user accounts.
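Each octal digit is the sum of read (4), write (2), and execute (1) for one group. So 644 is rw- (4+2), r-- (4), r-- (4), and 755 is rwx (4+2+1), r-x (4+1), r-x (4+1). This bash sketch applies those modes to throwaway files and reads them back with GNU stat, which prints the symbolic and octal forms side by side. Spelling out a (all) in a-w makes the change apply to owner, group, and others regardless of the umask:

```shell
#!/usr/bin/env bash
set -euo pipefail

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

touch notes.txt script.sh

chmod 644 notes.txt
chmod 755 script.sh
stat -c '%A %a %n' notes.txt script.sh
# -rw-r--r-- 644 notes.txt
# -rwxr-xr-x 755 script.sh

# Symbolic form: a-w removes write for owner, group, and others alike
chmod a-w notes.txt
stat -c '%A %a %n' notes.txt
# -r--r--r-- 444 notes.txt
```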

Practical File Management Scenarios

Scenario 1: Organizing a Downloads Folder

A Downloads folder that has accumulated hundreds of mixed files needs organizing:

$ cd ~/Downloads

# Create organized subdirectories

$ mkdir -p organized/{documents,images,videos,archives,installers}

# Move files by type

$ mv *.pdf *.docx *.xlsx *.txt organized/documents/

$ mv *.jpg *.jpeg *.png *.gif *.webp organized/images/

$ mv *.mp4 *.mkv *.avi *.mov organized/videos/

$ mv *.zip *.tar.gz *.tar.bz2 *.7z organized/archives/

$ mv *.deb *.rpm *.AppImage organized/installers/

# See what remains

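$ ls -la

If one of the patterns matches no files, bash passes it to mv unchanged and mv reports an error for that name. The files matched by the other patterns are still moved.

Scenario 2: Creating a Project Backup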

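$ project="my-website"

$ backup_dir="$HOME/backups/${project}_$(date +%Y%m%d)"

$ mkdir -p "$backup_dir"

$ rsync -av --exclude=".git/" --exclude="node_modules/" \
    "$HOME/projects/$project/" "$backup_dir/"

$ tar -czvf "${backup_dir}.tar.gz" -C "$(dirname "$backup_dir")" "$(basename "$backup_dir")"

$ rm -rf "$backup_dir"

$ echo "Backup created: ${backup_dir}.tar.gz"

The paths use $HOME rather than ~ because the shell does not expand a tilde inside double quotes. The -C option makes tar store paths relative to the backups folder instead of absolute paths.

Scenario 3: Finding and Cleaning Old Log Files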

# Find log files older than 7 days in /var/log

$ find /var/log -name "*.log" -mtime +7 -type f

# Compress rather than delete (safer); writing in /var/log requires root

$ sudo find /var/log -name "*.log" -mtime +7 -type f -exec gzip {} \;

# Confirm compressed versions exist

$ find /var/log -name "*.log.gz" | head -5

Quick Reference: File Management Commands

| Task | Command | Example |
|---|---|---|
| Create directory | mkdir | mkdir -p projects/2026/jan |
| Create empty file | touch | touch notes.txt |
| Copy files or directories | cp | cp -a source/ dest/ |
| Move/rename | mv | mv old.txt new.txt |
| Delete file | rm | rm -i *.tmp |
| Delete directory | rm -r | rm -r old_folder/ |
| Find files (fast) | locate | locate "*.conf" |
| Find files (precise) | find | find ~ -name "*.pdf" -size +1M |
| Compare files | diff | diff file1.txt file2.txt |
| Create symlink | ln -s | ln -s /var/log/nginx ~/logs |
| Create tar archive | tar -czvf | tar -czvf backup.tar.gz dir/ |
| Extract tar archive | tar -xzvf | tar -xzvf backup.tar.gz |
| Create zip archive | zip -r | zip -r archive.zip dir/ |
| Extract zip archive | unzip | unzip archive.zip -d dest/ |
| Sync directories | rsync -av | rsync -av src/ dest/ |
| Disk usage summary | du -sh | du -sh ~/Downloads/* |
| Largest items sorted | du -sh * \| sort -rh | du -sh ~/*/ \| sort -rh |

Conclusion: The Terminal as Your Most Powerful File Manager

The Linux command line gives you file management capabilities that no graphical tool can match — not because graphical tools are poorly designed, but because the text-based, scriptable nature of the terminal enables operations on files at a scale and with a precision that point-and-click interfaces fundamentally cannot achieve.

The commands covered in this article — from basic copy and move operations through wildcard patterns, find searches, symbolic links, archiving tools, and rsync synchronization — form a complete toolkit for any file management task you will encounter as a Linux user. Some of these tools you will use daily; others you will reach for occasionally when a specific situation calls for them.

The investment in learning this toolkit pays continuous dividends. Each file management task you accomplish from the terminal reinforces your command vocabulary, builds intuition about how the file system works, and adds another technique to your problem-solving repertoire. Over time, what begins as deliberate effort becomes fluid competence — and you will find yourself accomplishing in seconds what once required minutes of graphical navigation.