Linux is a free, open source operating system kernel created by Linus Torvalds in 1991, which forms the foundation of a vast family of operating systems called Linux distributions. Unlike proprietary systems such as Windows or macOS, Linux allows anyone to view, modify, and distribute its source code freely. Today, Linux powers everything from personal computers and smartphones to the world’s most powerful supercomputers and the majority of web servers on the internet.

Introduction: The Operating System That Changed the World

When most people think of operating systems, they think of Microsoft Windows or Apple macOS — the polished, commercial platforms that ship pre-installed on laptops and desktops. But there is a third major player that, while less visible to the average consumer, quietly runs the infrastructure of the modern digital world: Linux. It is the operating system behind Android smartphones, Netflix streaming servers, NASA spacecraft computers, the New York Stock Exchange, and over 90 percent of all cloud computing infrastructure.

Yet despite its enormous footprint, many people have never heard of Linux, and those who have may find it mysterious or intimidating. The truth is that Linux is not just for hackers or computer scientists. It is a robust, flexible, and powerful operating system that anyone can learn to use — and one that offers remarkable insight into how computers actually work at a fundamental level.

This article will take you on a comprehensive journey through Linux: what it is, where it came from, how it works, why it matters, and how it has grown from a bedroom project into the backbone of global technology. Whether you are a curious beginner or someone considering switching to Linux for the first time, this guide is designed to give you everything you need to understand the open source operating system that powers the world.

What Exactly Is an Operating System?

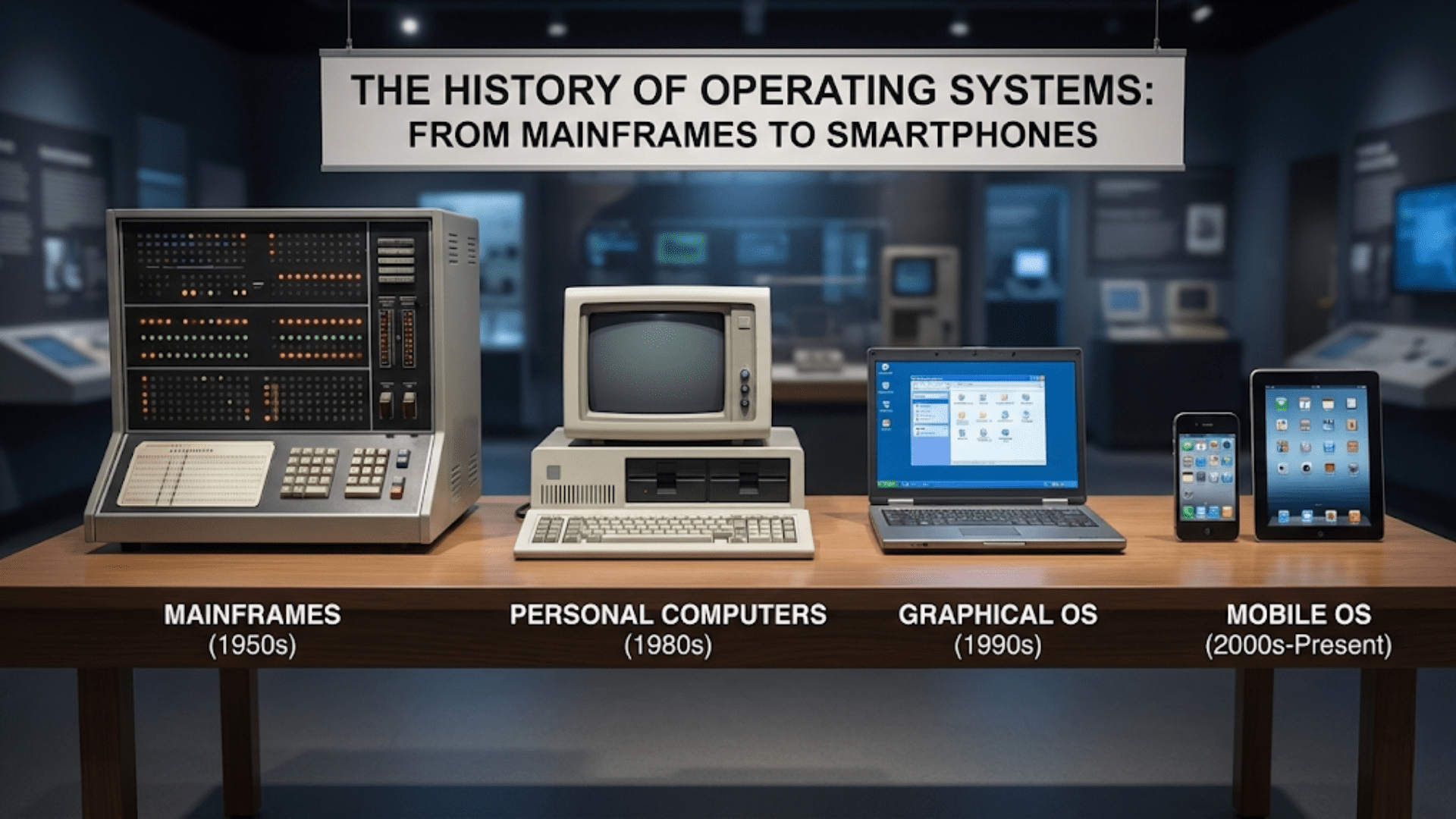

Before diving into Linux specifically, it helps to understand what an operating system is and why every computer needs one. An operating system, commonly abbreviated as OS, is the fundamental software layer that sits between a computer’s hardware and the applications that run on it. Without an operating system, a computer is essentially an expensive collection of electronic components with no way to communicate or coordinate.

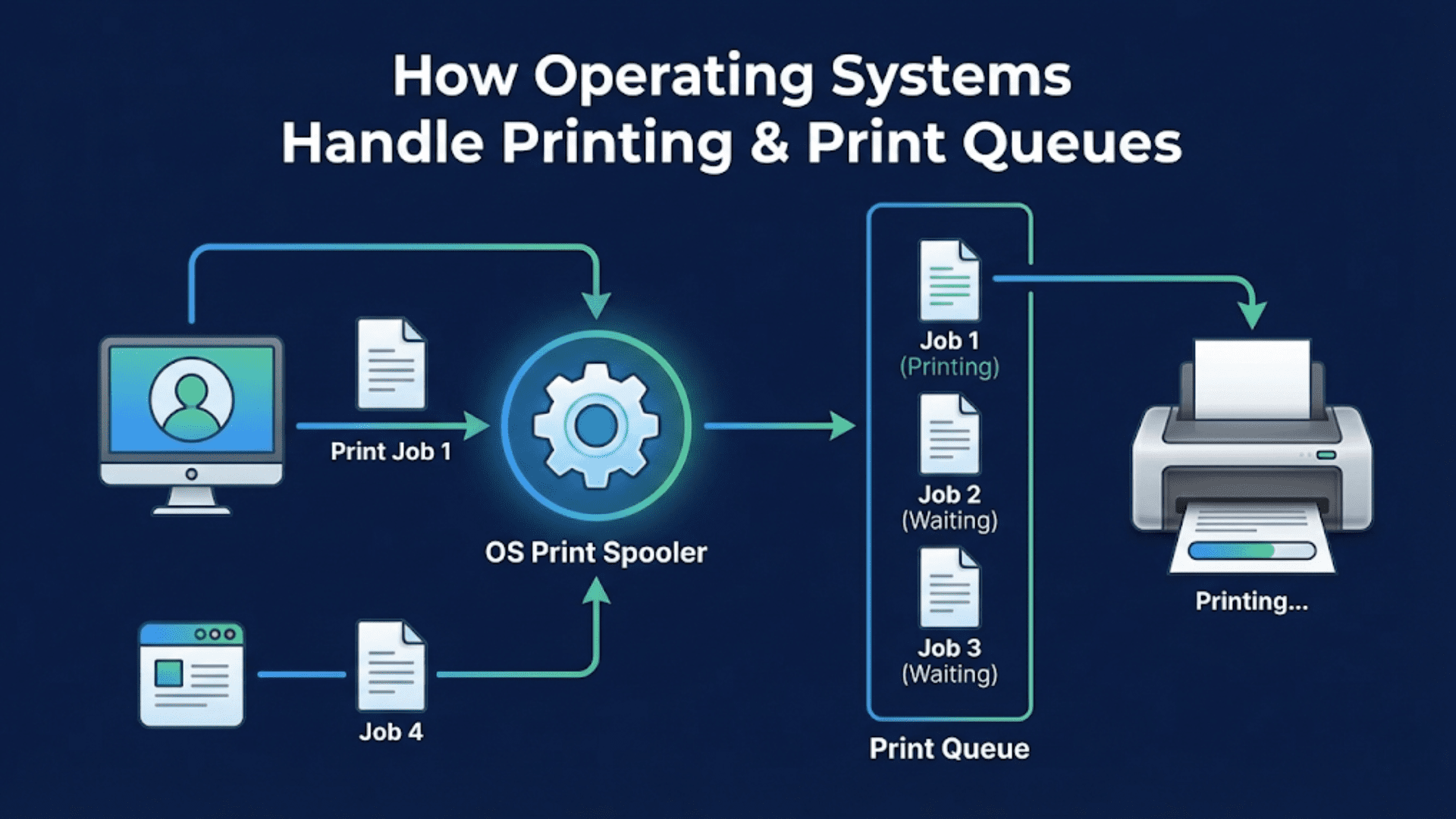

The operating system performs several critical functions. It manages the central processing unit by scheduling which programs get to use it and for how long. It controls memory allocation, deciding which applications get access to RAM and how much. It handles input and output operations, translating signals from keyboards, mice, and other devices into data that programs can understand. It manages files stored on hard drives and solid-state drives, organizing them into directories and ensuring data integrity. And it provides a user interface — either graphical or text-based — through which humans can interact with the machine.

Think of the operating system as the manager of a large office building. The building itself (the hardware) contains all the physical resources: offices (memory), work areas (the processor), filing cabinets (storage), and doorways (input/output ports). The manager (the OS) decides who gets which office, ensures the filing system stays organized, coordinates communication between departments, and makes sure the building runs smoothly. Without the manager, the building exists but nothing gets done efficiently.
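To make the memory-allocation job described above more concrete, here is a toy sketch in Python of a textbook "first-fit" allocator. It hands each program the first free block that is large enough, and it merges neighbouring free blocks when memory is released. The class name and program names are hypothetical. Linux itself uses different and far more elaborate mechanisms, such as a buddy allocator for physical pages, so treat this only as an illustration of the idea.

```python
from typing import Optional


class FirstFitAllocator:
    """Toy first-fit allocator over a fixed-size region of "memory".

    Free space is tracked as (start, length) blocks kept sorted by start.
    """

    def __init__(self, size: int) -> None:
        self.free: list[tuple[int, int]] = [(0, size)]
        self.owners: dict[int, tuple[str, int]] = {}  # start -> (program, length)

    def allocate(self, program: str, length: int) -> Optional[int]:
        """Give `program` the first free block big enough; return its start or None."""
        for i, (start, free_len) in enumerate(self.free):
            if free_len >= length:
                if free_len > length:
                    self.free[i] = (start + length, free_len - length)  # shrink the block
                else:
                    del self.free[i]  # exact fit: the block is used up
                self.owners[start] = (program, length)
                return start
        return None  # no single free block is large enough

    def release(self, start: int) -> None:
        """Return a block to the free list, merging it with adjacent free blocks."""
        _, length = self.owners.pop(start)
        blocks = sorted(self.free + [(start, length)])
        merged: list[tuple[int, int]] = []
        for s, n in blocks:
            if merged and merged[-1][0] + merged[-1][1] == s:
                prev_start, prev_len = merged[-1]
                merged[-1] = (prev_start, prev_len + n)  # touching blocks become one
            else:
                merged.append((s, n))
        self.free = merged


mem = FirstFitAllocator(100)
a = mem.allocate("browser", 40)  # gets units 0..39
b = mem.allocate("editor", 30)   # gets units 40..69
mem.release(a)                   # free blocks: (0, 40) and (70, 30)
c = mem.allocate("music", 50)    # None: 70 units are free, but not in one piece
d = mem.allocate("music", 35)    # fits in the first hole, at 0
mem.release(b)                   # merges into a single free block (35, 65)
```

The failed 50-unit request shows fragmentation: enough memory is free in total, but no single contiguous block is large enough. Real operating systems go to considerable lengths to limit this problem.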

Windows, macOS, Android, iOS, and Linux are all operating systems — each with different philosophies, strengths, and intended use cases. Linux is unique among them because of its open nature, its community-driven development model, and its extraordinary versatility.

The Origin Story: How Linux Was Born

To understand Linux, you need to understand a little history — because Linux did not emerge from a corporation with a product roadmap. It emerged from the curiosity and frustration of a 21-year-old Finnish computer science student.

The Unix Influence

The story of Linux really begins with Unix, an operating system developed at Bell Labs by Ken Thompson, Dennis Ritchie, and colleagues starting in 1969. Unix was a revolutionary system that introduced many concepts still used in modern operating systems: a hierarchical file system, multi-user support, pipes for connecting programs, and a philosophy of small, modular tools that each do one thing well.
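The Unix idea of small tools joined by pipes can be sketched in Python. In the sketch below, each function stands in for one tool and passes its output to the next. This loosely mirrors a shell pipeline such as `grep error log.txt | sort | uniq -c`. The function names are hypothetical stand-ins, not the real programs.

```python
from typing import Iterable, Iterator


def grep(pattern: str, lines: Iterable[str]) -> Iterator[str]:
    """Pass through only lines containing `pattern` (like `grep pattern`)."""
    for line in lines:
        if pattern in line:
            yield line


def sort_lines(lines: Iterable[str]) -> list[str]:
    """Return the lines in lexical order (like `sort`)."""
    return sorted(lines)


def uniq_count(lines: Iterable[str]) -> list[tuple[int, str]]:
    """Collapse runs of identical adjacent lines into counts (like `uniq -c`)."""
    result: list[tuple[int, str]] = []
    for line in lines:
        if result and result[-1][1] == line:
            result[-1] = (result[-1][0] + 1, line)
        else:
            result.append((1, line))
    return result


log = ["error: disk full", "ok", "error: timeout", "error: disk full"]
# Each stage consumes the previous stage's output, like programs joined by `|`.
counts = uniq_count(sort_lines(grep("error", log)))
for n, line in counts:
    print(n, line)
```

No stage knows anything about the others. This independence is what allows Unix tools to be recombined freely.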

Unix became enormously influential in universities and research institutions throughout the 1970s and 1980s. However, Unix was proprietary software — it cost money to use, and the source code was not freely available. This created a tension in the computing community, particularly among academics who believed software should be open and shareable.

GNU and the Free Software Movement

In 1983, a programmer named Richard Stallman at MIT launched the GNU Project with an ambitious goal: to create a complete, free Unix-like operating system. Stallman also founded the Free Software Foundation and articulated the philosophy that users should have the freedom to run, study, share, and modify software. He called this idea “free software” — using “free” in the sense of freedom, not price.

Over the following years, the GNU Project produced many essential components of an operating system: text editors, compilers, shell programs, and utility tools. But the project was missing one critical piece — the kernel, the core component that communicates directly with hardware. GNU had a kernel project called GNU Hurd, but it was incomplete and not ready for practical use.

Linus Torvalds and the Linux Kernel

Enter Linus Torvalds, a Finnish student at the University of Helsinki. In 1991, Torvalds was using a computer running MINIX, a small Unix-like operating system created for educational purposes by Professor Andrew Tanenbaum. Frustrated by MINIX’s limitations and the restrictions on modifying and distributing it, Torvalds began writing his own operating system kernel.

On August 25, 1991, Torvalds made a famous post to an online newsgroup that began: “I’m doing a (free) operating system (just a hobby, won’t be big and professional like gnu) for 386(486) AT clones.” It was a humble announcement. Torvalds had no idea that his weekend hobby project would eventually change the world.

In September 1991, Torvalds publicly released the first version of his kernel (version 0.01) on the internet and invited others to contribute. The kernel was called Linux, a combination of his first name “Linus” and “Unix.” The timing was perfect. The GNU Project had all the tools but lacked a kernel. Linux was a kernel without surrounding tools. When combined with GNU’s software, the result was a complete, free, Unix-like operating system.

The response from the global programming community was immediate and enthusiastic. Developers from around the world began contributing code, fixing bugs, and adding features. Linux grew rapidly through this collaborative model, demonstrating a new way of developing software — what would later be called open source development.

Understanding Open Source: The Philosophy Behind Linux

The term “open source” is central to understanding what makes Linux fundamentally different from Windows or macOS. To grasp this, you need to understand what source code is and why making it open matters.

What Is Source Code?

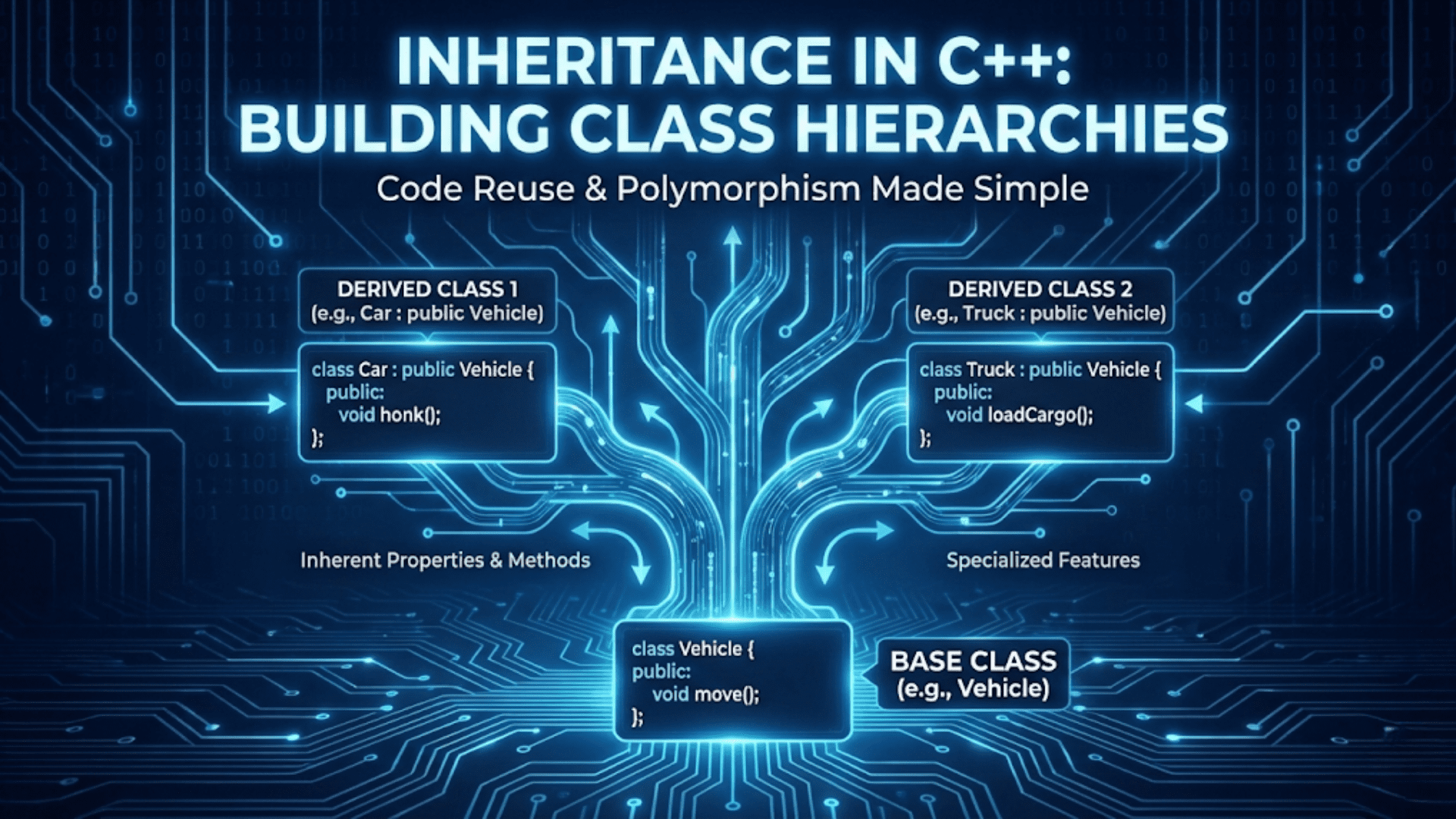

Every software program is written in a human-readable programming language, such as C, Python, Java, or hundreds of others. The instructions written in these languages are called source code. Source code tells the computer exactly what to do, in terms humans can read and understand. For example, a few lines of source code might say “if the user clicks this button, open a new window and display this message.”

Before source code can run on a computer, it typically needs to be compiled — translated from human-readable form into machine code, which is the binary language of ones and zeros that processors understand. The compiled version (called a binary or executable) is what most commercial software companies distribute. When you install a program, you are installing the compiled binary, not the underlying source code.
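Python offers a loose, runnable analogy for this translation step. The built-in `compile()` turns source text into a code object, and the standard `dis` module prints the lower-level instructions inside it. Python compiles to bytecode for its own virtual machine rather than to native machine code. A C compiler such as GCC goes all the way to processor instructions. The exact instruction names that `dis` prints also vary between Python versions.

```python
import dis

# Human-readable source code, held as an ordinary string.
source = "def add(a, b):\n    return a + b\n"

# Translate the source into a code object (Python bytecode, not native machine code).
code = compile(source, "<example>", "exec")

# Run the compiled code to define `add`, then call it.
namespace: dict = {}
exec(code, namespace)
result = namespace["add"](2, 3)

# Print the lower-level instructions the interpreter actually executes.
dis.dis(namespace["add"])
```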

Closed vs. Open Source

With proprietary, closed-source software, only the developers have access to the source code. Users can run the compiled binary, but they cannot examine how it works, modify it, or share modified versions. This is the model used by Microsoft Windows and Apple macOS. You can use these operating systems, but their inner workings remain hidden.

With open source software like Linux, the source code is publicly available. Anyone can download it, read it, understand it, modify it, and distribute their modifications, subject to the terms of the software license. This openness has profound implications for security, customization, learning, and community collaboration.

The Linux License: GNU GPL

Linux is released under the GNU General Public License, commonly known as the GPL; specifically, the kernel uses version 2 (GPLv2). This license grants users four fundamental freedoms: the freedom to run the program for any purpose, the freedom to study how it works, the freedom to redistribute copies to help others, and the freedom to distribute modified versions. Crucially, the GPL includes what is called a “copyleft” provision: any modified version of Linux that is distributed must also be released under the GPL. This ensures that improvements to Linux remain freely available to everyone.

This licensing model has created a virtuous cycle. Because Linux is free to use and modify, thousands of developers worldwide have contributed improvements. Because distributed modifications must be released under the same license, everyone benefits from everyone else’s work. The result is an operating system that has been refined by some of the world’s best programmers over three decades, at no cost to users.

The Linux Kernel: The Heart of the System

When people say “Linux,” they are often referring to a complete operating system experience. But technically, Linux refers specifically to the kernel — the core component that performs the most fundamental functions of the operating system.

What Does the Kernel Do?

The kernel is the layer of software that has direct, privileged access to the computer’s hardware. It manages the processor, allocating CPU time to different running programs and switching between them so rapidly that it appears multiple programs are running simultaneously. It manages RAM, deciding which programs get memory and how much. It handles all input and output — reading from keyboards, writing to screens, communicating with network adapters, and reading from and writing to storage devices. It also provides security boundaries, ensuring that one program cannot access another program’s memory or interfere with critical system functions.
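The time slicing described above can be illustrated with the simplest textbook policy, round-robin. Each process runs for at most one fixed "quantum" and then goes to the back of the queue. This is a simulation sketch with hypothetical process names. Linux's default scheduler is considerably more sophisticated: it weighs priorities and fairness rather than rotating in fixed turns. The kernel does, however, offer a round-robin policy, `SCHED_RR`, for real-time tasks.

```python
from collections import deque


def round_robin(bursts: dict[str, int], quantum: int) -> list[tuple[str, int]]:
    """Simulate round-robin time slicing on a single CPU.

    `bursts` maps a (hypothetical) process name to the CPU time it still needs.
    Returns the schedule as a list of (process, time_units_run) slices.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue = deque((name, need) for name, need in bursts.items() if need > 0)
    schedule: list[tuple[str, int]] = []
    while queue:
        name, remaining = queue.popleft()
        ran = min(quantum, remaining)  # run for one time slice at most
        schedule.append((name, ran))
        if remaining > ran:
            queue.append((name, remaining - ran))  # unfinished: back of the queue
    return schedule


schedule = round_robin({"browser": 5, "editor": 2, "music": 3}, quantum=2)
for name, ran in schedule:
    print(f"{name:8} ran for {ran} unit(s)")
```

With a quantum measured in milliseconds, the rapid alternation between processes is what makes them appear to run simultaneously.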

The Linux kernel is written primarily in the C programming language and is extraordinarily sophisticated: recent releases contain tens of millions of lines of code. Despite this complexity, it is designed to be efficient and portable, running on everything from small embedded devices to massive multi-processor server systems.

Kernel Architecture

Linux uses what is called a monolithic kernel architecture with modular capabilities. In a purely monolithic kernel, all operating system functions run as a single, unified program in privileged memory space. This makes communication between components efficient, but it means that changing any part requires rebuilding the kernel and rebooting. Linux adds a modular twist: most hardware drivers and optional features can be built as loadable modules, which are inserted into or removed from the running kernel without rebooting. This gives Linux the efficiency of a monolithic design with some of the flexibility of a microkernel.

This modular approach is why Linux can support such a vast range of hardware. When you plug in a new USB device, the system can automatically load the appropriate driver module, with no user action or restart required.
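On a running Linux system, the kernel lists its currently loaded modules in the text file `/proc/modules`. The `lsmod` command displays this file in a friendlier form, and `modprobe` loads and removes modules. The sketch below parses that layout, one module per line: name, size in bytes, use count, dependent modules, state, and address. The dataclass and function names are hypothetical, and the sample values are made up for illustration.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class KernelModule:
    name: str
    size: int           # memory used by the module, in bytes
    use_count: int      # how many users currently hold a reference
    used_by: list[str]  # other modules that depend on this one
    state: str          # typically Live, Loading, or Unloading


def parse_proc_modules(text: str) -> list[KernelModule]:
    """Parse the text layout of /proc/modules into KernelModule records."""
    modules = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 5:
            continue  # skip blank or malformed lines
        name, size, count, deps, state = fields[:5]
        # Dependents are comma-separated with a trailing comma, or "-" when none.
        used_by = [d for d in deps.split(",") if d and d != "-"]
        modules.append(KernelModule(name, int(size), int(count), used_by, state))
    return modules


def loaded_modules(path: str = "/proc/modules") -> list[KernelModule]:
    """Read the live module list on a Linux system (empty list elsewhere)."""
    p = Path(path)
    return parse_proc_modules(p.read_text()) if p.exists() else []


# Illustrative sample in the /proc/modules layout; the values are made up.
SAMPLE = """\
snd_hda_codec 188416 2 snd_hda_intel,snd_hda_codec_generic, Live 0x0000000000000000
usbhid 65536 0 - Live 0x0000000000000000
"""

for mod in parse_proc_modules(SAMPLE):
    print(mod.name, mod.state, "used by:", ", ".join(mod.used_by) or "nothing")
```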

Linux Distributions: The Many Faces of Linux

If Linux is just a kernel, what is it that people actually install on their computers? The answer is a Linux distribution, commonly called a “distro.” A distribution is a complete operating system built around the Linux kernel, bundled with a software package management system, a user interface, applications, and configuration tools.

Hundreds of Linux distributions exist, each with a different philosophy, target audience, and selection of included software. Understanding why there are so many distributions — and how they differ — is key to understanding the Linux ecosystem.

Why So Many Distributions?

Because Linux is open source and free to modify, anyone can take the Linux kernel, combine it with their preferred software, and create a new distribution. This freedom has led to incredible diversity. Some distributions are designed for ease of use by beginners. Others are engineered for maximum security. Some are optimized for servers that never display a graphical interface. Others are built for specific hardware, like Raspberry Pi single-board computers or Android-based smartphones.

This diversity is both a strength and a potential source of confusion for newcomers. Rather than a single “Linux,” there is an ecosystem of options, each with its own strengths. Choosing the right distribution for your needs is an important first step when coming to Linux.

Major Distribution Families

Most Linux distributions are not built entirely from scratch. Instead, they are derived from a smaller number of foundational “parent” distributions, inheriting their package management systems, base configurations, and philosophies. The three most important families are Debian, Red Hat, and Arch.

The Debian family is one of the oldest and most influential. Debian itself is known for stability and strict adherence to free software principles. Ubuntu, perhaps the most beginner-friendly Linux distribution, is based on Debian. Linux Mint, another popular beginner-friendly option, is itself derived from Ubuntu. These distributions use the dpkg package management system and APT (Advanced Package Tool) for installing and managing software.

The Red Hat family centers on Red Hat Enterprise Linux (RHEL), a commercial distribution used extensively on enterprise servers. Fedora is the community-driven counterpart, where new features are tested before making their way into RHEL. CentOS Linux historically provided a free rebuild of RHEL, but it has been discontinued in favor of CentOS Stream, which now tracks just ahead of RHEL rather than following it. These distributions use RPM packages and the DNF package manager, the successor to YUM.

The Arch family champions a minimalist, “do-it-yourself” philosophy. Arch Linux ships with almost nothing pre-installed, giving users complete control to build exactly the system they want. It targets experienced users comfortable with the command line. Manjaro is a more accessible derivative that brings Arch’s flexibility to a wider audience.
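One job that APT, DNF, and Pacman all share is installing a package's dependencies before the package itself. The minimal sketch below covers only that ordering step. It uses `graphlib.TopologicalSorter` from Python's standard library (Python 3.9+), and the package names are hypothetical. Real package managers also handle versions, conflicts, and alternative providers, so this is not how any of them is actually implemented.

```python
from graphlib import TopologicalSorter

# Hypothetical packages and their direct dependencies, for illustration only.
DEPENDS: dict[str, set[str]] = {
    "photo-editor": {"image-lib", "gui-toolkit"},
    "gui-toolkit": {"libc"},
    "image-lib": {"libc", "zlib"},
    "zlib": {"libc"},
    "libc": set(),
}


def install_order(package: str, depends: dict[str, set[str]]) -> list[str]:
    """Return `package` and everything it needs, with dependencies first."""
    needed: dict[str, set[str]] = {}
    stack = [package]
    while stack:  # collect the transitive dependency closure
        pkg = stack.pop()
        if pkg in needed:
            continue
        needed[pkg] = depends.get(pkg, set())
        stack.extend(needed[pkg])
    # TopologicalSorter emits each node only after all of its predecessors
    # (here: its dependencies); it raises graphlib.CycleError on circular ones.
    return list(TopologicalSorter(needed).static_order())


order = install_order("photo-editor", DEPENDS)
print(" -> ".join(order))
```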

Linux Distribution Comparison: Choosing the Right Distro

The following table compares the most popular Linux distributions across several key criteria to help you understand their differences and intended use cases:

| Distribution | Family | Difficulty | Best For | Package Manager | Release Model |
|---|---|---|---|---|---|
| Ubuntu | Debian | Beginner | General use, beginners | APT | Fixed release |
| Linux Mint | Debian/Ubuntu | Beginner | Windows switchers | APT | Fixed release |
| Fedora | Red Hat | Intermediate | Developers, new tech | DNF | Fixed release |
| Debian | Debian | Intermediate | Stability-focused | APT | Fixed release |
| CentOS Stream | Red Hat | Intermediate | Enterprise/servers | DNF | Continuous (just ahead of RHEL) |
| Arch Linux | Arch | Advanced | Custom builds | Pacman | Rolling |
| Manjaro | Arch | Intermediate | Arch with easier setup | Pacman | Rolling |
| openSUSE | Independent | Intermediate | Desktop & servers | Zypper | Fixed (Leap) or rolling (Tumbleweed) |

What Makes Linux Different from Windows and macOS?

Understanding Linux requires comparing it to the operating systems most people already know. The differences go far beyond aesthetics or which programs are available — they reflect fundamentally different philosophies about who should control software.

Cost and Licensing

Windows licenses cost money: a new retail Windows license for a personal computer typically costs between $100 and $200. macOS is free to download, but its license permits it to run only on Apple hardware, which carries a significant price premium. Linux is free. You can download and install virtually any Linux distribution at no cost, on as many computers as you like, without paying license fees. For individuals, small businesses, schools, and governments in developing countries, this distinction is enormously significant.

Transparency and Security

Because Linux source code is public, security researchers worldwide can examine it for vulnerabilities. When flaws are found, they are typically patched very quickly — often within hours or days — because thousands of developers worldwide are watching. With proprietary operating systems, you must trust that the company has examined its own code thoroughly and has no hidden backdoors or data-collection mechanisms. With Linux, you can verify this yourself, or rely on the community of scrutineers who do.

This transparency is one reason Linux is preferred for security-sensitive applications. Government agencies, financial institutions, and defense contractors often choose Linux precisely because they can audit every line of code.

Customization and Control

Linux gives users an extraordinary degree of control over their computing environment. You can choose your desktop environment, file manager, text editor, media player, and virtually every other component of the system. You can strip away everything you do not need for a minimal, fast system, or add rich graphical effects and productivity tools. You can even modify the kernel itself if you need custom hardware support or specialized behavior.

Windows and macOS, by contrast, give users relatively little ability to change fundamental aspects of how the operating system looks and behaves. Microsoft and Apple make design decisions that apply to all users, and there is limited ability to override those choices.

Hardware Support and System Requirements

Windows 11 requires relatively recent hardware, including a TPM 2.0 security chip, and does not officially support many older machines. Linux distributions are available for a far wider range of hardware, including machines well over a decade old. Lightweight distributions like Puppy Linux or Lubuntu can breathe new life into old hardware that cannot run modern Windows.

Conversely, Linux also scales up to the largest computing installations in the world. Every system on the TOP500 list of the world’s fastest supercomputers has run Linux since November 2017. The same operating system that can revive a decade-old laptop also runs the most powerful machines humanity has ever built.

The Command Line

One of the most distinctive aspects of Linux for newcomers is its emphasis on the command line, a text interface where users type commands rather than clicking icons. While desktop-oriented Linux distributions offer graphical interfaces comparable to Windows or macOS, the command line remains central to the Linux experience and provides capabilities that graphical interfaces cannot easily match.

Through the command line, a Linux user can accomplish complex tasks in seconds that might take minutes through a graphical interface. System administrators can manage hundreds of servers simultaneously using scripts. Developers can automate complex workflows. Data scientists can process massive datasets. Learning the Linux command line is a significant investment, but it pays dividends throughout a career in technology.
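As a small illustration of the kind of automation described above, the sketch below renames every `.log` file in a directory in one step. Doing the same task by hand in a file manager would take one click-and-type per file. On Linux, a shell one-liner often handles such a chore. Python is used here for consistency, and the function name and prefix are hypothetical.

```python
import tempfile
from pathlib import Path


def add_prefix(directory: Path, suffix: str, prefix: str) -> list[Path]:
    """Rename files in `directory` ending in `suffix` so their names start with `prefix`."""
    renamed = []
    # sorted() builds the full list first, so renaming cannot disturb the iteration.
    for path in sorted(directory.glob(f"*{suffix}")):
        if path.is_file() and not path.name.startswith(prefix):
            target = path.with_name(prefix + path.name)
            path.rename(target)
            renamed.append(target)
    return renamed


# Demonstrate on a throwaway directory so no real files are touched.
with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)
    for name in ("a.log", "b.log", "notes.txt"):
        (folder / name).write_text("example\n")
    renamed_names = [p.name for p in add_prefix(folder, ".log", "archived-")]
    remaining = sorted(p.name for p in folder.iterdir())

print(renamed_names)
```

The same function works unchanged whether the directory holds three files or thirty thousand. That scaling is what makes scripting valuable.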

The Linux Desktop: What You See and How You Interact

When most people first encounter Linux, they encounter it through a desktop environment — the graphical layer that provides windows, menus, icons, and a taskbar or panel. Linux desktop environments are separate from the kernel itself and can be mixed and matched in ways that are impossible in Windows or macOS.

GNOME

GNOME (originally an acronym for GNU Network Object Model Environment) is arguably the most popular Linux desktop environment and is the default on Ubuntu, Fedora Workstation, and several other major distributions. GNOME takes a clean, minimalist approach with a single top panel and an activities overview that shows all open applications. It prioritizes simplicity and a distraction-free workflow. GNOME is particularly popular among developers and power users who prefer keyboard-driven workflows.

KDE Plasma

KDE Plasma is GNOME’s primary rival and takes a very different approach. Where GNOME emphasizes simplicity, KDE emphasizes customization and configurability. KDE looks and feels more similar to Windows than GNOME does, with a taskbar at the bottom, a start menu-style application launcher, and a system tray. Almost every aspect of KDE’s appearance and behavior can be customized. KDE is popular among users who want maximum control over their desktop experience.

Other Desktop Environments

Beyond GNOME and KDE, Linux users can choose from many other desktop environments. Xfce is a lightweight, fast desktop designed for older hardware or users who prefer minimal resource usage. LXDE and LXQt are even lighter, consuming very little memory and CPU time. Cinnamon, developed by the Linux Mint project, offers a traditional desktop layout similar to Windows 7 and is particularly welcoming to users switching from Windows. Each environment reflects a different vision of how a desktop should work.

Where Linux Runs: The Real-World Footprint

Understanding how pervasive Linux is in the modern world helps explain why it matters — even if most people never directly interact with it.

Web Servers and the Internet

If you have used the internet today — and you almost certainly have — you have interacted with Linux. According to web server surveys, approximately 96 percent of the top one million web servers run Linux. When you access Google, Facebook, Amazon, Wikipedia, or virtually any major website, your request is processed by Linux servers. The internet as we know it runs on Linux.

Cloud Computing

Cloud computing platforms like Amazon Web Services, Microsoft Azure, and Google Cloud Platform run enormous numbers of Linux workloads. Even on Azure, operated by the company behind Windows, Microsoft has reported that Linux accounts for the majority of the virtual machines its customers run. When companies “move to the cloud,” they are very often running their workloads on Linux virtual machines.

Android and Mobile

Android, the most popular mobile operating system in the world with billions of users, is built on the Linux kernel. When you use an Android smartphone or tablet, you are using Linux, even if the user experience looks nothing like a traditional Linux desktop. The Linux kernel handles all the fundamental hardware management beneath Android’s colorful interface.

Supercomputers

As mentioned earlier, Linux runs every single one of the world’s top 500 most powerful supercomputers. These machines — used for climate modeling, drug discovery, nuclear simulation, and artificial intelligence research — demand the most reliable, efficient, and flexible operating system available. Linux provides all three.

Embedded Systems

Linux runs in many devices you might not think of as computers: smart televisions, automotive infotainment systems, networking routers, IoT sensors, digital cameras, and even some household appliances. The Linux kernel’s ability to be customized and stripped to the bare essentials makes it ideal for embedded systems that need an operating system but have limited memory and processing power.

Personal Desktops

Desktop Linux adoption, while growing, remains the area where Linux has historically been weakest relative to Windows and macOS. Estimates suggest that approximately 4 to 5 percent of personal computers run Linux. However, this percentage has been growing, partly driven by the Steam gaming platform’s investment in Linux compatibility and the release of the Steam Deck, a handheld gaming PC that runs a customized Linux distribution called SteamOS.

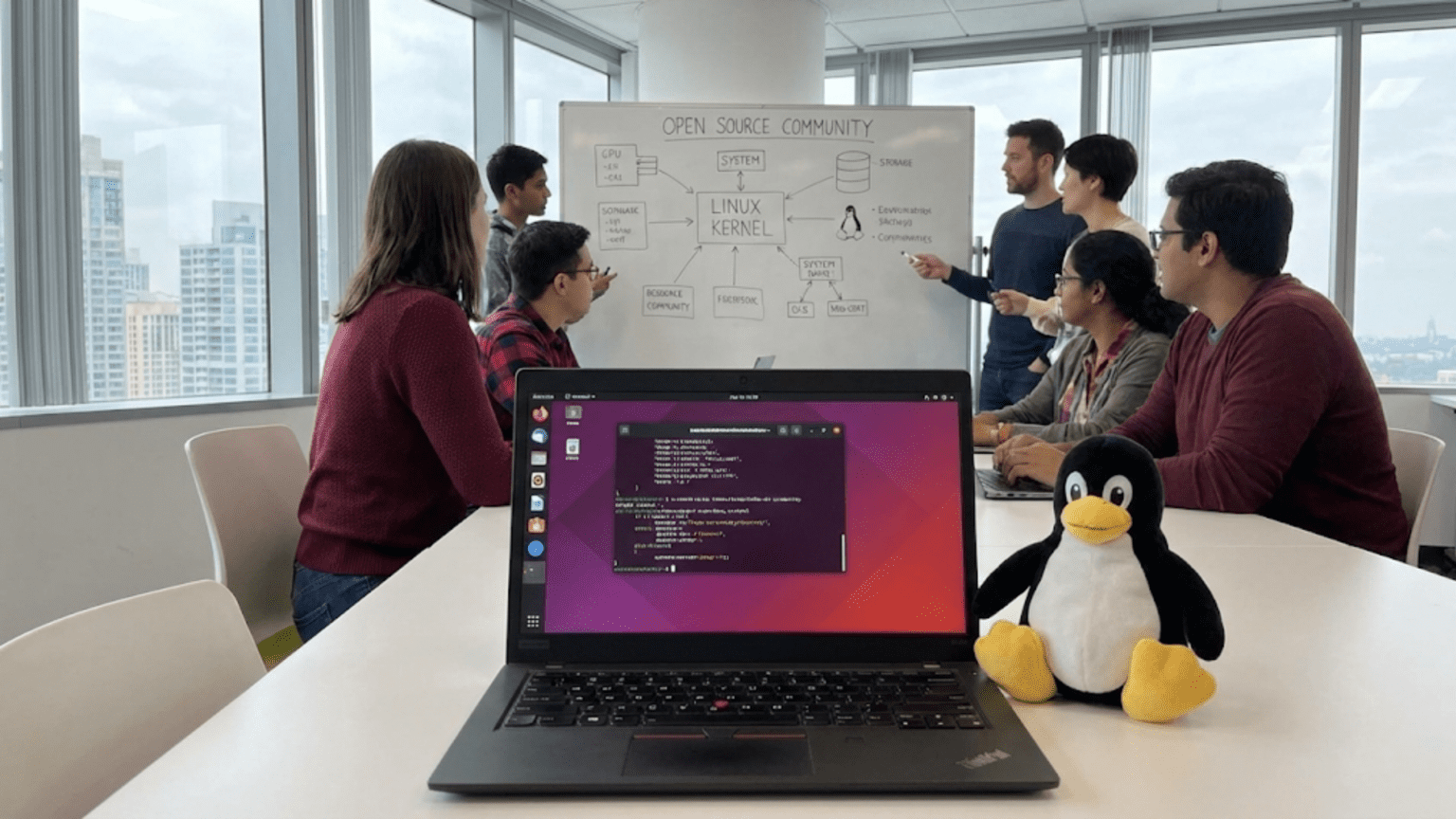

The Linux Community: Open Source in Practice

Linux is maintained and developed not by a single company but by a vast, distributed community of contributors. Understanding this community helps explain both Linux’s strengths and some of its challenges.

The Kernel Development Community

The Linux kernel itself is maintained through a highly organized process despite having no central corporate owner. Linus Torvalds, the project’s creator, still holds final authority over what code enters the official kernel, assisted by a team of senior maintainers who oversee specific subsystems. Thousands of developers from hundreds of companies contribute to each kernel release. Major contributors include Intel, Red Hat, Google, Samsung, NVIDIA, and many other technology companies; even Microsoft contributes Linux kernel code, for example to support its Azure cloud platform.

New kernel releases appear roughly every nine to ten weeks, each incorporating thousands of individual changes. The development process relies on email-based code review and on Git, a distributed version control system that Linus Torvalds created in 2005 specifically to manage Linux kernel development.
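One idea at the core of Git's design is content addressing. Every stored object is named by a hash of its contents, so identical content always gets the same ID, and any change, however small, produces a different one. The sketch below reproduces how Git computes the ID of a file's contents (a "blob") in a default SHA-1 repository. For the same bytes, it should agree with `git hash-object`. Git can also be configured to use SHA-256 repositories, which this sketch does not cover. The function name is hypothetical.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Compute the object ID Git gives a file's contents (SHA-1 repositories)."""
    header = f"blob {len(content)}\0".encode()  # object type, size, then a NUL byte
    return hashlib.sha1(header + content).hexdigest()


print(git_blob_id(b""))  # e69de29bb2d1d6434b8b29ae775ad8c2e48c5391 (the empty file)
print(git_blob_id(b"hello\n"))
```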

Distribution Communities

Each Linux distribution also has its own community of contributors, testers, documentation writers, translators, and support volunteers. Ubuntu has a large forum community and commercial support through Canonical. Arch Linux has the Arch User Repository (AUR), a community-maintained collection of thousands of additional software packages. These communities are an important part of what makes Linux viable — the human infrastructure of knowledge and support that helps users get problems solved.

Getting Started with Linux: Your First Steps

If you are interested in trying Linux for yourself, you have more options than ever before. You do not need to commit to replacing your current operating system to experiment with Linux.

Live Boot: Try Before You Install

Most Linux distributions can be run as “live” systems from a USB drive, without installing anything on your computer. You simply download the distribution’s ISO image, write it to a USB drive using a tool like Rufus or Etcher, boot your computer from the USB drive, and you are running Linux. Your hard drive is untouched. This is an excellent way to experience Linux and make sure your hardware is compatible before committing to installation.
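Before writing an ISO image to a USB drive, it is common practice to confirm that the download is intact. You do this by comparing its SHA-256 checksum with the one the distribution publishes, typically on its download page or in a checksum file alongside the image. On Linux, the `sha256sum` command does this job. The sketch below shows the same check in Python. The helper names and file name are hypothetical, and a tiny stand-in file replaces a real multi-gigabyte ISO.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large ISO images need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path: Path, expected_hex: str) -> bool:
    """Compare against a checksum copied from the distribution's website."""
    return sha256_of_file(path) == expected_hex.strip().lower()


# Demo with a tiny stand-in file instead of a real ISO image.
with tempfile.TemporaryDirectory() as tmp:
    fake_iso = Path(tmp) / "example.iso"
    fake_iso.write_bytes(b"abc")
    demo_digest = sha256_of_file(fake_iso)
    demo_ok = matches_published_checksum(
        fake_iso, "BA7816BF8F01CFEA414140DE5DAE2223B00361A396177A9CB410FF61F20015AD"
    )

print(demo_digest, demo_ok)
```

A mismatch means the file is corrupted or is not the image the distribution published, and it should be downloaded again before use.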

Virtual Machines: Linux Inside Windows or macOS

Another option is to run Linux in a virtual machine — software that simulates a complete computer within your existing operating system. Programs like VirtualBox (free) or VMware allow you to run Linux in a window on your Windows or macOS desktop. This approach is slightly slower than running Linux natively, but it offers a completely safe environment for learning, with no risk to your main system.

Dual Booting: Two Operating Systems on One Machine

For a more committed approach, dual booting allows you to install Linux alongside your existing operating system on the same computer. When you start your computer, you choose which OS to boot into. This gives you full native performance for Linux while keeping your existing environment available. However, it does require partitioning your hard drive and carries some risk if done incorrectly.

Windows Subsystem for Linux

Microsoft has also made Linux more accessible to Windows users through the Windows Subsystem for Linux (WSL), which allows you to run a Linux environment directly within Windows 10 or 11. WSL is oriented toward the command line rather than a full graphical Linux desktop, though recent versions can also run individual graphical Linux applications. It is particularly popular among developers who work in Windows but need Linux tools.

Linux for Different Audiences: Who Uses It and Why

System Administrators

System administrators who manage servers and network infrastructure are among Linux’s most committed users. The command line tools available in Linux are unparalleled for managing large numbers of systems efficiently. Scripts can automate repetitive tasks, SSH allows secure remote management, and the open source nature of Linux means administrators can troubleshoot at every level of the system.

Developers and Programmers

Software development on Linux benefits from a rich ecosystem of development tools, most of which are free and open source. Compilers, debuggers, version control systems, database servers, and web development environments are all available and often easier to configure on Linux than on Windows. Many web applications are developed on Linux because the production servers that will run them are also Linux systems — eliminating a common source of bugs that arise from differences between development and production environments.

Security Professionals

The cybersecurity field has embraced Linux enthusiastically. Specialized security distributions like Kali Linux come preloaded with hundreds of penetration testing and security analysis tools. The transparency of open source code is essential for security researchers, and the low-level control Linux provides allows security professionals to conduct analysis at depths not possible on proprietary systems.

Students and Educators

Linux is an excellent educational platform for learning how computers and operating systems work. Because all the source code is available, students can examine real-world implementations of the concepts they study in textbooks. Many universities use Linux for computer science courses, and learning Linux is strongly valued in technology careers.

Everyday Users

Modern Linux distributions like Ubuntu and Linux Mint are genuinely accessible to everyday users. They come with web browsers, office suites, media players, and many other applications pre-installed. For users whose primary computing activities are browsing the web, writing documents, and watching videos, a modern Linux desktop can fully meet their needs while offering greater privacy and security than commercial alternatives.

Common Misconceptions About Linux

Several persistent myths about Linux continue to discourage people from trying it. Addressing these directly can help set realistic expectations.

Myth: Linux Is Only for Experts

This was more true 20 years ago than it is today. Modern distributions like Ubuntu and Linux Mint have graphical installers, automatic hardware detection, and user-friendly interfaces comparable to Windows and macOS. You can install and use Ubuntu without ever opening a terminal. The command line is powerful but not mandatory for basic use.

Myth: Linux Has No Software

Linux has an enormous software ecosystem. Most major open source applications, including Firefox, LibreOffice, VLC, GIMP, Blender, and thousands more, run natively on Linux. The Linux gaming landscape has improved dramatically through Steam’s Proton compatibility layer, which allows many Windows games to run on Linux. Web-based applications that run in a browser work the same on Linux as on any other operating system. The most notable gaps are Adobe’s Creative Cloud applications and some specialized professional tools, though alternatives exist for most of them.

Myth: Linux Is Not Secure

The opposite is closer to the truth. Linux’s open source nature allows security flaws to be found and fixed quickly. The permission model, which limits what non-root users can do, provides strong protection against malware. The diversity of Linux systems can also make it harder for a single piece of malware to work everywhere. Linux malware does exist, but on the desktop it is far less common than Windows malware.
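The permission model mentioned above rests on per-file mode bits. These bits grant read, write, and execute rights separately to a file's owner, its group, and everyone else. The sketch below decodes such bits using Python's standard `stat` module; `stat.filemode` is a real function, while `explain_mode` is a hypothetical helper.

```python
import stat

# (permission bit, letter) pairs for each class of user, in `ls -l` order.
PERMISSION_BITS = {
    "owner": ((stat.S_IRUSR, "r"), (stat.S_IWUSR, "w"), (stat.S_IXUSR, "x")),
    "group": ((stat.S_IRGRP, "r"), (stat.S_IWGRP, "w"), (stat.S_IXGRP, "x")),
    "others": ((stat.S_IROTH, "r"), (stat.S_IWOTH, "w"), (stat.S_IXOTH, "x")),
}


def explain_mode(mode: int) -> dict[str, str]:
    """Break a numeric mode such as 0o644 into per-class rwx strings."""
    return {
        who: "".join(letter if mode & bit else "-" for bit, letter in bits)
        for who, bits in PERMISSION_BITS.items()
    }


# 0o644 is a typical mode for a system file such as /etc/passwd (owned by root):
# everyone may read it, but only the owner may write to it.
print(explain_mode(0o644))                   # {'owner': 'rw-', 'group': 'r--', 'others': 'r--'}
print(stat.filemode(stat.S_IFREG | 0o644))   # -rw-r--r--
```

A program running as an ordinary user falls into the "others" class for such root-owned files, so it can read them but cannot modify them. This is the protection against malware that the myth overlooks.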

Myth: You Have to Choose One Linux Forever

Trying different Linux distributions is encouraged. The community concept of “distro hopping” — installing and experimenting with different distributions — is common and entirely normal. Since distributions are free, there is no cost to exploration.

The Future of Linux

Linux continues to grow and evolve. Several developments suggest its trajectory in the coming years.

Gaming on Linux has improved dramatically, driven by Valve’s investment in the Steam Deck and the Proton compatibility layer. As more games become playable on Linux, the barrier to adoption for everyday users continues to fall. Valve’s own Steam Hardware & Software Survey has shown Linux’s share of Steam users rising, although it remains a small minority.

In cloud computing, Linux’s dominance appears likely to grow. The rise of containerization technologies like Docker and Kubernetes, whose containers rely primarily on Linux kernel features such as namespaces and control groups (cgroups), has made Linux infrastructure even more central to modern software deployment. These technologies allow applications to be packaged with their dependencies and run consistently across Linux systems.

The Linux kernel itself continues to be actively developed, with improvements to power efficiency, graphics support, security, and support for new hardware architectures. RISC-V, an open standard instruction set architecture gaining momentum as an alternative to ARM and x86, is already supported by the Linux kernel, potentially opening new categories of devices to Linux.

On the desktop, the long transition from the aging X Window System to Wayland, a modern display protocol, is nearing completion, bringing better security, smoother graphics, and improved support for high-resolution displays to Linux desktops.

Conclusion: Why Linux Matters

Linux represents more than just an operating system. It represents a proof of concept — a demonstration that collaborative, open development can produce software that rivals or exceeds the quality of products from the world’s largest corporations. It proves that transparency and community engagement can create more secure, more reliable, and more innovative technology than proprietary closed development.

For anyone working in technology, understanding Linux is not optional — it is essential. Whether you are a developer deploying applications to cloud servers, a system administrator managing infrastructure, a security researcher analyzing vulnerabilities, or simply a curious user who wants to understand how computers really work, Linux provides a window into the fundamental mechanics of modern computing.

For everyday users, Linux offers a compelling alternative to commercial operating systems: free, open, private, secure, and deeply customizable. The investment in learning Linux pays dividends throughout a technology career and provides a degree of computing literacy and control that proprietary systems simply cannot offer.

From its humble origins as a Finnish student’s hobby project to its current position as the foundation of the world’s digital infrastructure, Linux has traveled an extraordinary journey. Understanding Linux means understanding not just an operating system, but one of the most remarkable collaborative achievements in human history — a project that succeeded precisely because it was built on openness, freedom, and the belief that great software belongs to everyone.