Overview

Software development in 2026 has entered a qualitatively new phase. AI coding agents that can plan, execute, and iterate across complex multi-step programming tasks are now deeply integrated into everyday developer workflows, at a level that was only theoretical two years ago. The change is visible not only in the tooling itself, with Claude Code, OpenAI Codex, GitHub Copilot Agent Mode, and Cursor all shipping agentic capabilities, but also in how software teams organise work, review code, and think about what “writing code” means in an era where AI can handle much of the execution layer autonomously.

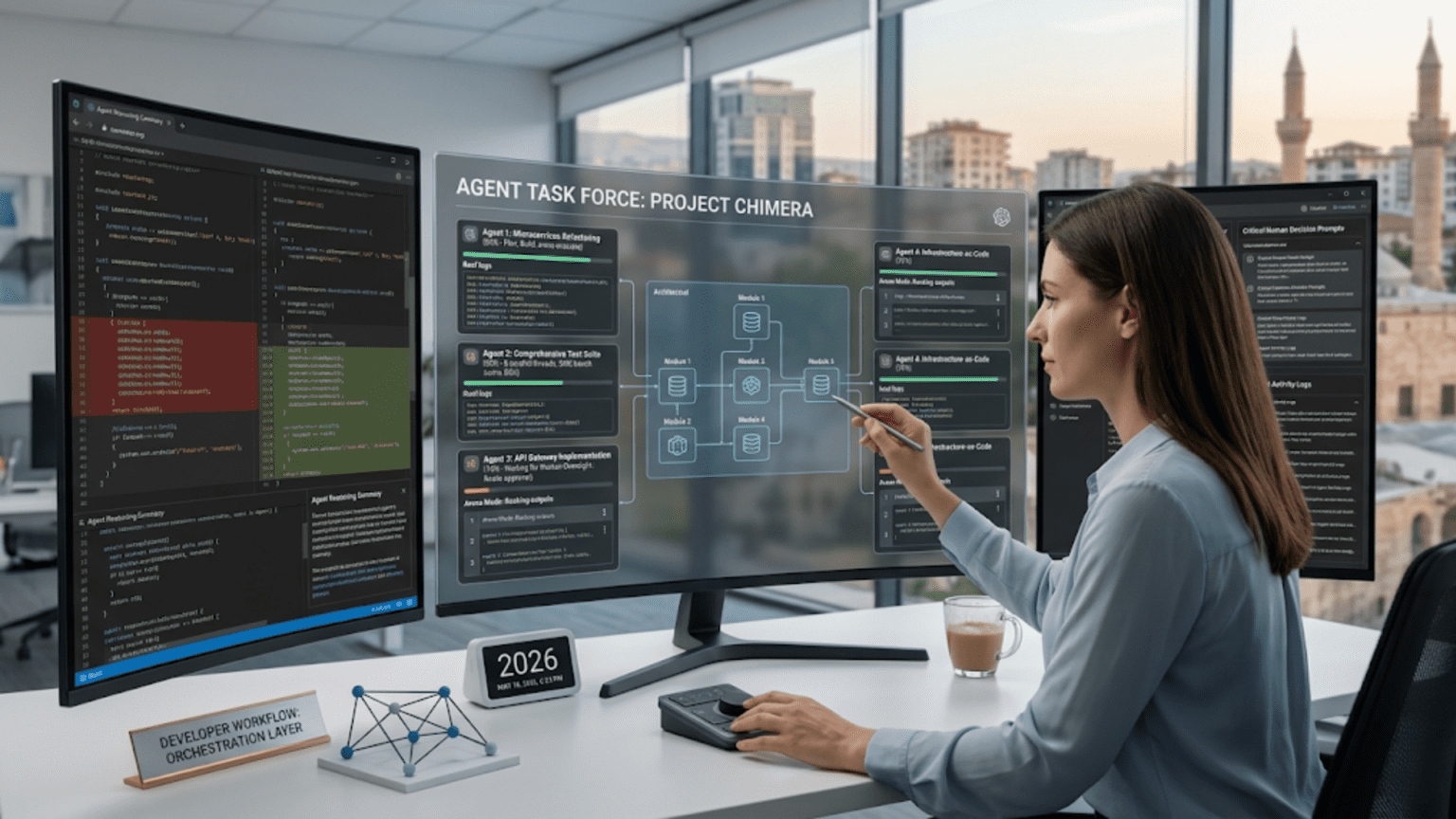

What Agentic Programming Actually Looks Like

A developer using an AI coding agent in 2026 does not simply prompt the model for a code snippet and paste it into a file. The workflow is considerably more involved and more powerful. The developer specifies a task, such as “migrate this service from REST to GraphQL” or “add comprehensive test coverage to this authentication module”, and the agent plans the work, executes it across multiple files and directories, runs tests, interprets failures, and iterates until the task is complete. The developer reviews diffs, approves changes, and redirects the agent when it drifts, which is a fundamentally supervisory role rather than an authoring one.
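The loop described above can be sketched in a few lines of Python. This is only an illustration of the control flow under stated assumptions, not how any particular product works: `run_agent_task`, `CheckResult`, `AgentRun`, and the three callbacks (`propose_change`, `run_tests`, `approve`) are hypothetical names invented here, with the model call, the test runner, and the human reviewer reduced to plain functions.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class CheckResult:
    """Outcome of running the test suite against a proposed diff (hypothetical type)."""
    passed: bool
    log: str = ""


@dataclass
class AgentRun:
    """Summary of one agent task (hypothetical type)."""
    completed: bool
    iterations: int
    history: List[str] = field(default_factory=list)


def run_agent_task(
    task: str,
    propose_change: Callable[[str, str], str],   # (task, feedback) -> diff
    run_tests: Callable[[str], CheckResult],     # diff -> test outcome
    approve: Callable[[str], bool],              # human review gate
    max_iterations: int = 5,
) -> AgentRun:
    """Propose a change, test it, feed failures back, and stop on human approval."""
    history: List[str] = []
    feedback = ""
    for iteration in range(1, max_iterations + 1):
        diff = propose_change(task, feedback)
        history.append(diff)

        result = run_tests(diff)
        if not result.passed:
            # Failing tests become the feedback for the next attempt.
            feedback = result.log
            continue

        # Tests pass: the developer reviews the diff and either approves or redirects.
        if approve(diff):
            return AgentRun(completed=True, iterations=iteration, history=history)
        feedback = "reviewer rejected the diff"

    # Iteration budget exhausted without an approved, passing diff.
    return AgentRun(completed=False, iterations=max_iterations, history=history)


if __name__ == "__main__":
    # Stand-ins for the model, the test runner, and the reviewer.
    def fake_propose(task: str, feedback: str) -> str:
        return "diff-v2" if feedback else "diff-v1"

    def fake_tests(diff: str) -> CheckResult:
        return CheckResult(passed=(diff == "diff-v2"), log="test_login failed")

    run = run_agent_task("add test coverage", fake_propose, fake_tests, approve=lambda d: True)
    print(run.completed, run.iterations)  # prints: True 2
```

The approval callback sits after the tests on purpose: in this sketch the human only sees diffs that already pass, which mirrors the supervisory role described above. The iteration cap is likewise an assumption, added so a drifting agent stops rather than looping indefinitely.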

Practitioners who have adopted this workflow report dramatic productivity improvements for the kinds of large-scale, mechanically complex changes that previously consumed weeks of developer time. Codebase migrations, API versioning, test suite expansion, and documentation generation are all tasks where agentic execution has proven particularly effective.

Migrating Large Codebases into AI-Native Workflows

One of the most discussed trends in developer communities this week is the growing number of teams attempting to migrate large existing codebases, some exceeding 10,000 lines, into AI-native workflows. This is not simply a matter of using AI tools alongside existing code. It involves restructuring codebases to be more legible and navigable for AI agents, including adding richer inline documentation, clearer module boundaries, and more explicit type annotations that give agents better context for reasoning about code changes.
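As a rough illustration of what "more legible for agents" can mean in practice, the sketch below shows the same small calculation before and after adding explicit types and inline documentation. The function and type names (`calc`, `LineItem`, `order_total_cents`) are invented for this example and do not come from any real codebase.

```python
from dataclasses import dataclass
from typing import Sequence


# Before: terse names and untyped dictionaries. A reader, human or agent,
# has to guess the keys, the units, and what the second argument means.
def calc(o, r):
    return sum(i["p"] * i["q"] for i in o) * (1 + r)


# After: the data shape, the units, and the rounding rule are all stated explicitly.
@dataclass(frozen=True)
class LineItem:
    unit_price_cents: int  # price of one unit, in cents
    quantity: int          # number of units ordered


def order_total_cents(items: Sequence[LineItem], tax_rate: float) -> int:
    """Return the order total in cents, including tax.

    tax_rate is a fraction (0.1 means 10%). The result is rounded to the
    nearest whole cent.
    """
    subtotal = sum(item.unit_price_cents * item.quantity for item in items)
    return round(subtotal * (1 + tax_rate))


if __name__ == "__main__":
    items = [LineItem(unit_price_cents=1000, quantity=2), LineItem(unit_price_cents=500, quantity=1)]
    print(order_total_cents(items, tax_rate=0.1))
```

The behaviour is essentially unchanged apart from the explicit rounding. What changes is how much an agent can infer from the signature and docstring alone, without opening every call site.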

The Skills Shift

The emergence of agentic programming is changing which developer skills matter most. Raw code-writing speed has become less important. The ability to write precise, unambiguous task specifications, which in effect means the ability to direct and manage AI agents well, is becoming a core competency. Code review skills are being elevated, as developers spend more time evaluating agent-generated changes than producing their own. And architectural judgment, meaning knowing what to build, how to structure it, and where complexity lives, remains resolutely human. Developers who build strong agent-direction skills while maintaining deep architectural understanding are finding themselves extraordinarily productive.