Introduction: The Power of Visual Understanding

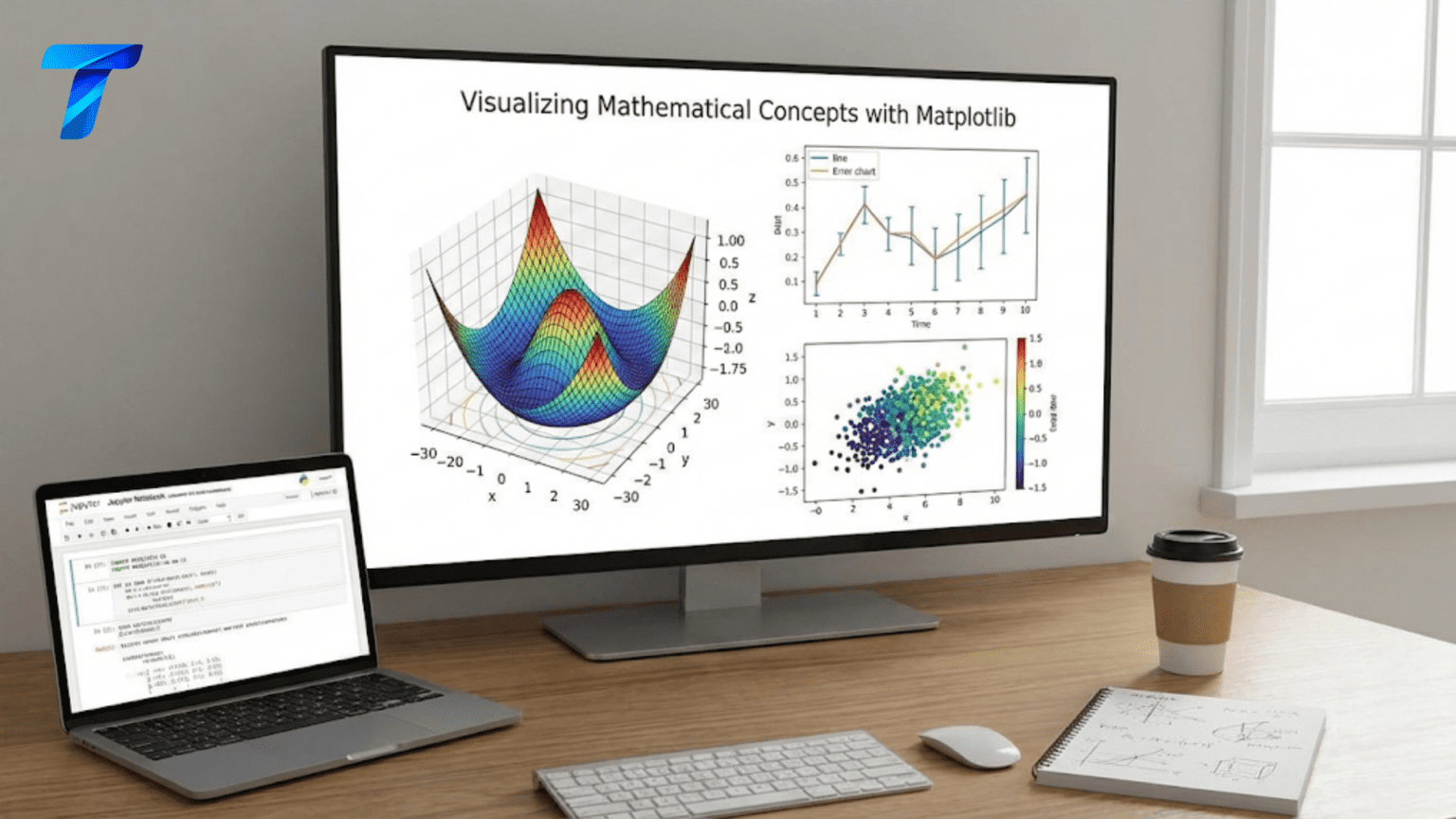

Visualization transforms abstract numbers and mathematical concepts into intuitive visual forms that the human brain can process quickly. A well-crafted visualization can reveal patterns, outliers, trends, and relationships that might remain hidden in raw numerical data. In machine learning, visualization serves several critical purposes across the workflow, from initial data exploration through model evaluation to communicating results.

Matplotlib is Python’s foundational plotting library, providing comprehensive tools for creating static, animated, and interactive visualizations. Created by John Hunter in 2003 to enable MATLAB-style plotting in Python, Matplotlib has evolved into the de facto standard for scientific visualization in Python. Many widely used data science and machine learning libraries, including pandas, seaborn, and scikit-learn, build on or integrate with Matplotlib, making it an essential skill for practitioners at all levels.

The library operates through two distinct interfaces. The pyplot module provides a state-based interface similar to MATLAB, convenient for interactive work and quick plots. The object-oriented interface offers more control and flexibility, particularly valuable when creating complex figures with multiple subplots or when embedding plots in applications. Understanding both approaches and knowing when to use each is key to effective visualization.

This guide develops your visualization skills from fundamental concepts through advanced techniques specific to machine learning. We start with basic plotting mechanics: how to create and customize plots effectively. We then survey plot types suited to different data and purposes, and look closely at visualizing the mathematical functions, probability distributions, and optimization landscapes that form the theoretical foundations of machine learning. We also examine techniques for exploratory data analysis, model evaluation, and communicating results. Throughout, extensive code examples build practical skills that you can adapt to your own projects.

Getting Started with Matplotlib: Basic Plotting Mechanics

Understanding the structure of Matplotlib is essential before diving into specific visualizations. A figure is the entire window or page that holds your visualization. Within a figure, you create one or more axes, which are individual plotting areas where you draw data. Each axes object contains the actual plot elements like lines, markers, labels, and legends.
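As a minimal sketch of this hierarchy, the snippet below builds one figure with two axes and inspects how the pieces reference each other. The variable names and data are illustrative assumptions, and the Agg backend is selected only so the sketch runs without a display; drop that line for interactive use.

```python
import matplotlib
matplotlib.use("Agg")  # assumption: headless run; remove for interactive sessions
import matplotlib.pyplot as plt

# One figure holding two side-by-side axes
fig, axes = plt.subplots(1, 2, figsize=(8, 3))

# Drawing on an axes adds artists (here a Line2D) to that axes only
line, = axes[0].plot([0, 1, 2], [0, 1, 4], label="squares")
axes[0].legend()

n_axes = len(fig.axes)               # axes owned by the figure
same_parent = axes[0].figure is fig  # each axes points back to its figure
n_lines_left = len(axes[0].lines)    # the line drawn above
n_lines_right = len(axes[1].lines)   # the untouched axes holds no lines

print(n_axes, same_parent, n_lines_left, n_lines_right)
plt.close(fig)
```

The figure is only a container: plot elements always belong to one specific axes, which is why most customization calls are methods on the axes object.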

Let’s begin with fundamental plotting concepts and build up to more sophisticated visualizations:

import numpy as np

import matplotlib.pyplot as plt

# Create simple data

x = np.linspace(0, 10, 100)

y = np.sin(x)

# Method 1: Simple pyplot interface

plt.figure(figsize=(10, 5))

plt.plot(x, y)

plt.xlabel('x')

plt.ylabel('sin(x)')

plt.title('Simple Sine Wave')

plt.grid(True, alpha=0.3)

plt.show()

# Method 2: Object-oriented interface (recommended for complex plots)

fig, ax = plt.subplots(figsize=(10, 5))

ax.plot(x, y, linewidth=2, color='blue', label='sin(x)')

ax.set_xlabel('x', fontsize=12)

ax.set_ylabel('y', fontsize=12)

ax.set_title('Sine Wave - Object-Oriented Approach', fontsize=14, fontweight='bold')

ax.grid(True, alpha=0.3)

ax.legend()

plt.tight_layout()

plt.show()

# Multiple plots on same axes

fig, ax = plt.subplots(figsize=(12, 5))

x = np.linspace(0, 2*np.pi, 100)

ax.plot(x, np.sin(x), label='sin(x)', linewidth=2)

ax.plot(x, np.cos(x), label='cos(x)', linewidth=2)

ax.plot(x, np.sin(x)**2, label='sin²(x)', linewidth=2, linestyle='--')

ax.set_xlabel('x (radians)', fontsize=12)

ax.set_ylabel('y', fontsize=12)

ax.set_title('Trigonometric Functions', fontsize=14, fontweight='bold')

ax.legend(loc='upper right', fontsize=11)

ax.grid(True, alpha=0.3)

ax.axhline(y=0, color='black', linewidth=0.5)

ax.axvline(x=0, color='black', linewidth=0.5)

plt.tight_layout()

plt.show()

# Customizing appearance

fig, axes = plt.subplots(2, 2, figsize=(12, 10))

# Different line styles

x = np.linspace(0, 5, 50)

styles = ['-', '--', '-.', ':']

for i, (ax, style) in enumerate(zip(axes.flat, styles)):

    # Damped sine wave; larger i gives slower decay

    y = np.exp(-x/(i+1)) * np.sin(5*x)

    ax.plot(x, y, linestyle=style, linewidth=2, color='darkblue')

    ax.set_title(f'Line style: {style}', fontsize=12)

    ax.grid(True, alpha=0.3)

plt.suptitle('Different Line Styles', fontsize=14, fontweight='bold')

plt.tight_layout()

plt.show()

print("Basic Plotting Concepts")

print("=" * 60)

print("Figure: The entire window/page containing plots")

print("Axes: Individual plotting area within figure")

print("pyplot: State-based interface (MATLAB-style)")

print("Object-oriented: More control via fig and ax objects")

print("\nKey customization options:")

print("- linewidth: thickness of lines")

print("- color: line/marker color")

print("- linestyle: solid, dashed, dotted patterns")

print("- label: for legend entries")

print("- alpha: transparency (0=transparent, 1=opaque)")

The object-oriented approach using fig, ax = plt.subplots() is generally preferred because it provides explicit control over which axes you are modifying, making code clearer and more maintainable, particularly with complex multi-plot figures.
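To make the difference concrete, here is a small sketch contrasting the two styles on a two-panel figure. The names left and right are illustrative assumptions, and the Agg backend is used only so the snippet runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # assumption: headless run; remove for interactive sessions
import matplotlib.pyplot as plt

fig, (left, right) = plt.subplots(1, 2, figsize=(8, 3))

# pyplot calls act on the "current" axes, which is hidden global state
plt.sca(left)                # make the left axes current
plt.title("set via pyplot")  # lands on whichever axes is current

# Object-oriented calls name their target explicitly
right.set_title("set via ax")

titles = (left.get_title(), right.get_title())
print(titles)
plt.close(fig)
```

With the pyplot call, the reader has to track which axes is current at that point in the script; the method call on right carries that information in the code itself.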

Visualizing Mathematical Functions: From Simple to Complex

Mathematical functions form the theoretical foundation of machine learning algorithms. Visualizing these functions builds intuition about their behavior and properties.

import numpy as np

import matplotlib.pyplot as plt

# Polynomial functions

fig, axes = plt.subplots(2, 2, figsize=(14, 10))

x = np.linspace(-3, 3, 200)

# Linear function

axes[0, 0].plot(x, 2*x + 1, linewidth=2, color='blue')

axes[0, 0].set_title(r'Linear: $f(x) = 2x + 1$', fontsize=12, fontweight='bold')

axes[0, 0].grid(True, alpha=0.3)

axes[0, 0].axhline(y=0, color='black', linewidth=0.5)

axes[0, 0].axvline(x=0, color='black', linewidth=0.5)

# Quadratic function

axes[0, 1].plot(x, x**2, linewidth=2, color='red', label=r'$f(x) = x^2$')

axes[0, 1].plot(x, -x**2, linewidth=2, color='green', label=r'$f(x) = -x^2$')

axes[0, 1].set_title('Quadratic Functions', fontsize=12, fontweight='bold')

axes[0, 1].grid(True, alpha=0.3)

axes[0, 1].axhline(y=0, color='black', linewidth=0.5)

axes[0, 1].axvline(x=0, color='black', linewidth=0.5)

axes[0, 1].legend()

# Cubic and higher polynomials

axes[1, 0].plot(x, x**3, linewidth=2, label=r'$x^3$')

axes[1, 0].plot(x, x**3 - 2*x, linewidth=2, label=r'$x^3 - 2x$')

axes[1, 0].set_title('Cubic Polynomials', fontsize=12, fontweight='bold')

axes[1, 0].grid(True, alpha=0.3)

axes[1, 0].axhline(y=0, color='black', linewidth=0.5)

axes[1, 0].axvline(x=0, color='black', linewidth=0.5)

axes[1, 0].legend()

axes[1, 0].set_ylim(-10, 10)

# Multiple polynomials

for degree in range(1, 6):

    axes[1, 1].plot(x, x**degree, linewidth=2, label=f'$x^{degree}$', alpha=0.7)

axes[1, 1].set_title('Polynomial Functions of Different Degrees', fontsize=12, fontweight='bold')

axes[1, 1].grid(True, alpha=0.3)

axes[1, 1].axhline(y=0, color='black', linewidth=0.5)

axes[1, 1].axvline(x=0, color='black', linewidth=0.5)

axes[1, 1].legend()

axes[1, 1].set_ylim(-5, 5)

plt.tight_layout()

plt.show()

# Activation functions used in neural networks

fig, axes = plt.subplots(2, 3, figsize=(16, 10))

x = np.linspace(-5, 5, 200)

# Sigmoid function

sigmoid = 1 / (1 + np.exp(-x))

axes[0, 0].plot(x, sigmoid, linewidth=2, color='blue')

axes[0, 0].set_title(r'Sigmoid: $\sigma(x) = \frac{1}{1 + e^{-x}}$', fontsize=11, fontweight='bold')

axes[0, 0].grid(True, alpha=0.3)

axes[0, 0].axhline(y=0, color='black', linewidth=0.5)

axes[0, 0].axhline(y=1, color='black', linewidth=0.5, linestyle='--', alpha=0.5)

axes[0, 0].axvline(x=0, color='black', linewidth=0.5)

axes[0, 0].set_ylabel('Output', fontsize=10)

# Tanh function

tanh = np.tanh(x)

axes[0, 1].plot(x, tanh, linewidth=2, color='red')

axes[0, 1].set_title(r'Tanh: $\tanh(x) = \frac{e^x - e^{-x}}{e^x + e^{-x}}$', fontsize=11, fontweight='bold')

axes[0, 1].grid(True, alpha=0.3)

axes[0, 1].axhline(y=0, color='black', linewidth=0.5)

axes[0, 1].axhline(y=1, color='black', linewidth=0.5, linestyle='--', alpha=0.5)

axes[0, 1].axhline(y=-1, color='black', linewidth=0.5, linestyle='--', alpha=0.5)

axes[0, 1].axvline(x=0, color='black', linewidth=0.5)

# ReLU function

relu = np.maximum(0, x)

axes[0, 2].plot(x, relu, linewidth=2, color='green')

axes[0, 2].set_title(r'ReLU: $f(x) = \max(0, x)$', fontsize=11, fontweight='bold')

axes[0, 2].grid(True, alpha=0.3)

axes[0, 2].axhline(y=0, color='black', linewidth=0.5)

axes[0, 2].axvline(x=0, color='black', linewidth=0.5)

# Leaky ReLU

alpha_leaky = 0.1

leaky_relu = np.where(x > 0, x, alpha_leaky * x)

axes[1, 0].plot(x, leaky_relu, linewidth=2, color='purple')

axes[1, 0].set_title(rf'Leaky ReLU: $f(x) = \max({alpha_leaky}x, x)$', fontsize=11, fontweight='bold')

axes[1, 0].grid(True, alpha=0.3)

axes[1, 0].axhline(y=0, color='black', linewidth=0.5)

axes[1, 0].axvline(x=0, color='black', linewidth=0.5)

axes[1, 0].set_xlabel('Input', fontsize=10)

axes[1, 0].set_ylabel('Output', fontsize=10)

# ELU (Exponential Linear Unit)

alpha_elu = 1.0

elu = np.where(x > 0, x, alpha_elu * (np.exp(x) - 1))

axes[1, 1].plot(x, elu, linewidth=2, color='orange')

# Matplotlib's built-in mathtext does not support the LaTeX cases environment,

# so the piecewise definition is written inline

axes[1, 1].set_title(r'ELU: $x$ if $x > 0$, else $\alpha(e^x - 1)$',

                     fontsize=11, fontweight='bold')

axes[1, 1].grid(True, alpha=0.3)

axes[1, 1].axhline(y=0, color='black', linewidth=0.5)

axes[1, 1].axvline(x=0, color='black', linewidth=0.5)

axes[1, 1].set_xlabel('Input', fontsize=10)

# Softplus

softplus = np.log(1 + np.exp(x))

axes[1, 2].plot(x, softplus, linewidth=2, color='brown')

axes[1, 2].set_title(r'Softplus: $f(x) = \ln(1 + e^x)$', fontsize=11, fontweight='bold')

axes[1, 2].grid(True, alpha=0.3)

axes[1, 2].axhline(y=0, color='black', linewidth=0.5)

axes[1, 2].axvline(x=0, color='black', linewidth=0.5)

axes[1, 2].set_xlabel('Input', fontsize=10)

plt.suptitle('Common Neural Network Activation Functions', fontsize=14, fontweight='bold')

plt.tight_layout()

plt.show()

# Loss functions visualization

fig, axes = plt.subplots(1, 3, figsize=(16, 5))

# Mean Squared Error

y_true = 0

y_pred = np.linspace(-3, 3, 200)

mse = (y_true - y_pred)**2

axes[0].plot(y_pred, mse, linewidth=2, color='blue')

axes[0].set_title(r'MSE: $L(y, \hat{y}) = (y - \hat{y})^2$', fontsize=12, fontweight='bold')

axes[0].set_xlabel(r'Prediction $\hat{y}$', fontsize=11)

axes[0].set_ylabel('Loss', fontsize=11)

axes[0].grid(True, alpha=0.3)

axes[0].axvline(x=y_true, color='red', linewidth=2, linestyle='--', label='True value')

axes[0].legend()

# Mean Absolute Error

mae = np.abs(y_true - y_pred)

axes[1].plot(y_pred, mae, linewidth=2, color='green')

axes[1].set_title(r'MAE: $L(y, \hat{y}) = |y - \hat{y}|$', fontsize=12, fontweight='bold')

axes[1].set_xlabel(r'Prediction $\hat{y}$', fontsize=11)

axes[1].set_ylabel('Loss', fontsize=11)

axes[1].grid(True, alpha=0.3)

axes[1].axvline(x=y_true, color='red', linewidth=2, linestyle='--', label='True value')

axes[1].legend()

# Binary Cross-Entropy

y_true_class = 1

y_pred_prob = np.linspace(0.01, 0.99, 200)

bce = -(y_true_class * np.log(y_pred_prob) + (1 - y_true_class) * np.log(1 - y_pred_prob))

axes[2].plot(y_pred_prob, bce, linewidth=2, color='red')

axes[2].set_title(r'BCE: $L = -[y\log(\hat{y}) + (1-y)\log(1-\hat{y})]$', fontsize=12, fontweight='bold')

axes[2].set_xlabel(r'Predicted Probability $\hat{y}$', fontsize=11)

axes[2].set_ylabel('Loss', fontsize=11)

axes[2].grid(True, alpha=0.3)

axes[2].axvline(x=y_true_class, color='blue', linewidth=2, linestyle='--', label='True class')

axes[2].legend()

plt.tight_layout()

plt.show()

print("\nActivation Functions in Neural Networks:")

print("=" * 60)

print("Sigmoid: Squashes values to (0,1), historically popular")

print("Tanh: Squashes to (-1,1), zero-centered")

print("ReLU: Most popular, simple and effective")

print("Leaky ReLU: Prevents dying neurons")

print("ELU: Smooth negative values")

print("Softplus: Smooth approximation of ReLU")

These visualizations make abstract mathematical concepts concrete. Seeing how activation functions transform inputs helps understand why certain functions work better for specific tasks. Visualizing loss functions shows how different losses penalize prediction errors differently, informing the choice of appropriate loss for your problem.
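A short numeric sketch can complement the loss curves. The arrays below are made-up illustrative values, not data from this guide: one set of predictions is slightly off everywhere, the other is exact except for a single large miss.

```python
import numpy as np

y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred_small = np.array([1.5, 2.5, 3.5, 4.5])    # every prediction off by 0.5
y_pred_outlier = np.array([1.0, 2.0, 3.0, 8.0])  # three exact, one off by 4

def mse(y, y_hat):
    """Mean squared error: large errors are penalized quadratically."""
    return float(np.mean((y - y_hat) ** 2))

def mae(y, y_hat):
    """Mean absolute error: every unit of error costs the same."""
    return float(np.mean(np.abs(y - y_hat)))

mse_small, mae_small = mse(y_true, y_pred_small), mae(y_true, y_pred_small)
mse_outlier, mae_outlier = mse(y_true, y_pred_outlier), mae(y_true, y_pred_outlier)

print(f"small errors: MSE={mse_small:.2f}  MAE={mae_small:.2f}")
print(f"one outlier:  MSE={mse_outlier:.2f}  MAE={mae_outlier:.2f}")
```

Moving from the first case to the second multiplies MSE by 16 but MAE only by 2, which is the quadratic-versus-linear penalty visible in the plots.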

Scatter Plots and Data Distribution Visualization

Scatter plots reveal relationships between variables and are fundamental for exploratory data analysis in machine learning.

import numpy as np

import matplotlib.pyplot as plt

# Generate synthetic data with different correlation patterns

np.random.seed(42)

n = 200

# Strong positive correlation

x1 = np.random.randn(n)

y1 = 2 * x1 + np.random.randn(n) * 0.5

# No correlation

x2 = np.random.randn(n)

y2 = np.random.randn(n)

# Non-linear relationship

x3 = np.random.uniform(-3, 3, n)

y3 = x3**2 + np.random.randn(n) * 2

# Create visualization

fig, axes = plt.subplots(1, 3, figsize=(16, 5))

# Plot 1: Strong correlation

axes[0].scatter(x1, y1, alpha=0.6, s=50, c='blue', edgecolors='black', linewidth=0.5)

axes[0].set_title('Strong Positive Correlation', fontsize=12, fontweight='bold')

axes[0].set_xlabel('X', fontsize=11)

axes[0].set_ylabel('Y', fontsize=11)

axes[0].grid(True, alpha=0.3)

# Add correlation coefficient

corr1 = np.corrcoef(x1, y1)[0, 1]

axes[0].text(0.05, 0.95, f'r = {corr1:.3f}', transform=axes[0].transAxes,

             fontsize=12, verticalalignment='top',

             bbox=dict(boxstyle='round', facecolor='wheat', alpha=0.5))

# Plot 2: No correlation

axes[1].scatter(x2, y2, alpha=0.6, s=50, c='green', edgecolors='black', linewidth=0.5)

axes[1].set_title('No Correlation', fontsize=12, fontweight='bold')

axes[1].set_xlabel('X', fontsize=11)

axes[1].set_ylabel('Y', fontsize=11)

axes[1].grid(True, alpha=0.3)

corr2 = np.corrcoef(x2, y2)[0, 1]

axes[1].text(0.05, 0.95, f'r = {corr2:.3f}', transform=axes[1].transAxes,

             fontsize=12, verticalalignment='top',

             bbox=dict(boxstyle='round', facecolor='wheat', alpha=0.5))

# Plot 3: Non-linear relationship

axes[2].scatter(x3, y3, alpha=0.6, s=50, c='red', edgecolors='black', linewidth=0.5)

axes[2].set_title('Non-linear Relationship', fontsize=12, fontweight='bold')

axes[2].set_xlabel('X', fontsize=11)

axes[2].set_ylabel('Y', fontsize=11)

axes[2].grid(True, alpha=0.3)

corr3 = np.corrcoef(x3, y3)[0, 1]

axes[2].text(0.05, 0.95, f'r = {corr3:.3f}', transform=axes[2].transAxes,

             fontsize=12, verticalalignment='top',

             bbox=dict(boxstyle='round', facecolor='wheat', alpha=0.5))

plt.tight_layout()

plt.show()

# Classification data visualization

fig, axes = plt.subplots(1, 2, figsize=(14, 6))

# Generate classification data

n_per_class = 100

class_1 = np.random.randn(n_per_class, 2) + np.array([2, 2])

class_2 = np.random.randn(n_per_class, 2) + np.array([-2, -2])

class_3 = np.random.randn(n_per_class, 2) + np.array([2, -2])

# Plot 1: Three classes

axes[0].scatter(class_1[:, 0], class_1[:, 1], alpha=0.6, s=50, c='red',

                label='Class 1', edgecolors='black', linewidth=0.5)

axes[0].scatter(class_2[:, 0], class_2[:, 1], alpha=0.6, s=50, c='blue',

                label='Class 2', edgecolors='black', linewidth=0.5)

axes[0].scatter(class_3[:, 0], class_3[:, 1], alpha=0.6, s=50, c='green',

                label='Class 3', edgecolors='black', linewidth=0.5)

axes[0].set_title('Multi-class Classification Data', fontsize=12, fontweight='bold')

axes[0].set_xlabel('Feature 1', fontsize=11)

axes[0].set_ylabel('Feature 2', fontsize=11)

axes[0].legend()

axes[0].grid(True, alpha=0.3)

# Plot 2: Overlapping classes (harder classification problem)

class_1_hard = np.random.randn(n_per_class, 2) + np.array([0, 0])

class_2_hard = np.random.randn(n_per_class, 2) + np.array([1, 1])

axes[1].scatter(class_1_hard[:, 0], class_1_hard[:, 1], alpha=0.6, s=50,

                c='purple', label='Class 1', edgecolors='black', linewidth=0.5)

axes[1].scatter(class_2_hard[:, 0], class_2_hard[:, 1], alpha=0.6, s=50,

                c='orange', label='Class 2', edgecolors='black', linewidth=0.5)

axes[1].set_title('Overlapping Classes', fontsize=12, fontweight='bold')

axes[1].set_xlabel('Feature 1', fontsize=11)

axes[1].set_ylabel('Feature 2', fontsize=11)

axes[1].legend()

axes[1].grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

# Feature distribution visualization

fig, axes = plt.subplots(2, 2, figsize=(14, 10))

# Histogram

data = np.random.randn(1000)

axes[0, 0].hist(data, bins=30, alpha=0.7, color='skyblue', edgecolor='black')

axes[0, 0].set_title('Histogram: Normal Distribution', fontsize=12, fontweight='bold')

axes[0, 0].set_xlabel('Value', fontsize=11)

axes[0, 0].set_ylabel('Frequency', fontsize=11)

axes[0, 0].grid(True, alpha=0.3, axis='y')

# Multiple histograms

feature_1 = np.random.randn(1000) * 2 + 5

feature_2 = np.random.randn(1000) * 1 + 3

axes[0, 1].hist(feature_1, bins=30, alpha=0.5, label='Feature 1', color='blue', edgecolor='black')

axes[0, 1].hist(feature_2, bins=30, alpha=0.5, label='Feature 2', color='red', edgecolor='black')

axes[0, 1].set_title('Comparing Feature Distributions', fontsize=12, fontweight='bold')

axes[0, 1].set_xlabel('Value', fontsize=11)

axes[0, 1].set_ylabel('Frequency', fontsize=11)

axes[0, 1].legend()

axes[0, 1].grid(True, alpha=0.3, axis='y')

# Box plot

data_boxplot = [np.random.randn(100) for _ in range(5)]

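axes[1, 0].boxplot(data_boxplot)

# Label the boxes through the tick labels; the labels= keyword of boxplot is deprecated in recent Matplotlib releases

axes[1, 0].set_xticks(range(1, 6))

axes[1, 0].set_xticklabels(['F1', 'F2', 'F3', 'F4', 'F5'])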

axes[1, 0].set_title('Box Plot: Feature Comparison', fontsize=12, fontweight='bold')

axes[1, 0].set_ylabel('Value', fontsize=11)

axes[1, 0].grid(True, alpha=0.3, axis='y')

# Violin plot

parts = axes[1, 1].violinplot(data_boxplot, positions=range(1, 6),

                              showmeans=True, showmedians=True)

axes[1, 1].set_title('Violin Plot: Distribution Shape', fontsize=12, fontweight='bold')

axes[1, 1].set_xlabel('Feature', fontsize=11)

axes[1, 1].set_ylabel('Value', fontsize=11)

axes[1, 1].set_xticks(range(1, 6))

axes[1, 1].set_xticklabels(['F1', 'F2', 'F3', 'F4', 'F5'])

axes[1, 1].grid(True, alpha=0.3, axis='y')

plt.tight_layout()

plt.show()

print("\nData Visualization Best Practices:")

print("=" * 60)

print("Scatter plots: Show relationships between continuous variables")

print("Histograms: Display distribution of single variable")

print("Box plots: Show median, quartiles, and outliers")

print("Violin plots: Combine box plot with kernel density estimation")

print("\nKey insights from visualizations:")

print("- Correlation doesn't capture non-linear relationships")

print("- Overlapping classes indicate difficult classification")

print("- Distribution shape informs preprocessing choices")

These visualization techniques are essential for understanding your data before building models. They reveal whether features are normally distributed, whether classes are separable, and whether relationships between variables are linear or non-linear.
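The point about correlation and non-linear relationships can be checked numerically. The sketch below uses a noise-free, symmetric grid (an illustrative assumption, chosen so the result does not depend on random draws) and compares the Pearson coefficient for a linear and a quadratic relationship.

```python
import numpy as np

x = np.linspace(-3, 3, 201)  # grid symmetric around zero

y_linear = 2 * x             # perfectly linear dependence
y_quadratic = x ** 2         # perfectly deterministic, but non-linear

r_linear = np.corrcoef(x, y_linear)[0, 1]
r_quadratic = np.corrcoef(x, y_quadratic)[0, 1]

print(f"linear:    r = {r_linear:.3f}")
print(f"quadratic: r = {r_quadratic:.3f}")
```

Here y_quadratic is fully determined by x, yet its Pearson coefficient is essentially zero because the positive and negative halves of the parabola cancel. A scatter plot exposes the dependence immediately, which is why plotting should accompany summary statistics.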

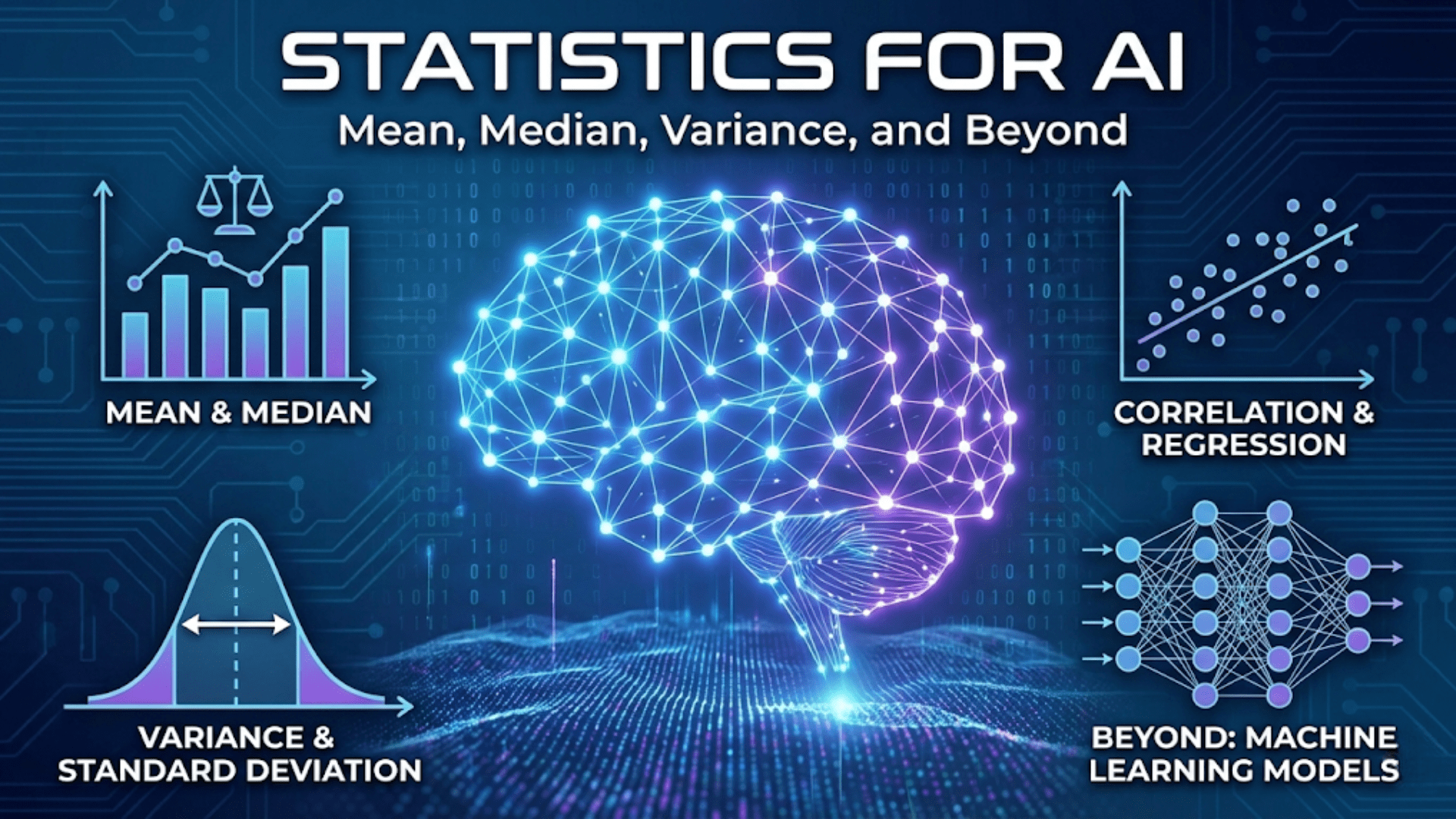

Visualizing Probability Distributions

Understanding probability distributions is fundamental to machine learning, and visualization makes these abstract concepts tangible.

import numpy as np

import matplotlib.pyplot as plt

from scipy import stats

fig, axes = plt.subplots(3, 2, figsize=(14, 14))

# Normal distribution

x_normal = np.linspace(-5, 5, 200)

for mu, sigma in [(0, 1), (0, 2), (2, 1)]:

    pdf = stats.norm.pdf(x_normal, mu, sigma)

    axes[0, 0].plot(x_normal, pdf, linewidth=2,

                    label=rf'$\mu={mu}, \sigma={sigma}$')

axes[0, 0].set_title(r'Normal Distribution: $f(x) = \frac{1}{\sigma\sqrt{2\pi}} e^{-\frac{(x-\mu)^2}{2\sigma^2}}$',

                     fontsize=11, fontweight='bold')

axes[0, 0].set_xlabel('x', fontsize=10)

axes[0, 0].set_ylabel('Probability Density', fontsize=10)

axes[0, 0].legend()

axes[0, 0].grid(True, alpha=0.3)

# Binomial distribution

n = 10  # number of trials; the success probability varies in the loop below

x_binom = np.arange(0, n+1)

for p_val in [0.3, 0.5, 0.7]:

    pmf = stats.binom.pmf(x_binom, n, p_val)

    axes[0, 1].plot(x_binom, pmf, marker='o', linewidth=2, markersize=6,

                    label=f'p={p_val}')

axes[0, 1].set_title(r'Binomial: $P(X=k) = \binom{n}{k} p^k (1-p)^{n-k}$',

                     fontsize=11, fontweight='bold')

axes[0, 1].set_xlabel('Number of Successes', fontsize=10)

axes[0, 1].set_ylabel('Probability', fontsize=10)

axes[0, 1].legend()

axes[0, 1].grid(True, alpha=0.3)

# Poisson distribution

x_poisson = np.arange(0, 20)

for lambda_val in [1, 4, 8]:

    pmf = stats.poisson.pmf(x_poisson, lambda_val)

    axes[1, 0].plot(x_poisson, pmf, marker='o', linewidth=2, markersize=5,

                    label=rf'$\lambda={lambda_val}$')

axes[1, 0].set_title(r'Poisson: $P(X=k) = \frac{\lambda^k e^{-\lambda}}{k!}$',

                     fontsize=11, fontweight='bold')

axes[1, 0].set_xlabel('Number of Events', fontsize=10)

axes[1, 0].set_ylabel('Probability', fontsize=10)

axes[1, 0].legend()

axes[1, 0].grid(True, alpha=0.3)

# Exponential distribution

x_exp = np.linspace(0, 5, 200)

for lambda_val in [0.5, 1, 2]:

    # SciPy parameterizes the exponential by scale = 1 / lambda

    pdf = stats.expon.pdf(x_exp, scale=1/lambda_val)

    axes[1, 1].plot(x_exp, pdf, linewidth=2,

                    label=rf'$\lambda={lambda_val}$')

axes[1, 1].set_title(r'Exponential: $f(x) = \lambda e^{-\lambda x}$',

                     fontsize=11, fontweight='bold')

axes[1, 1].set_xlabel('x', fontsize=10)

axes[1, 1].set_ylabel('Probability Density', fontsize=10)

axes[1, 1].legend()

axes[1, 1].grid(True, alpha=0.3)

# Beta distribution

x_beta = np.linspace(0, 1, 200)

param_sets = [(0.5, 0.5), (2, 2), (5, 2), (2, 5)]

for alpha, beta in param_sets:

    pdf = stats.beta.pdf(x_beta, alpha, beta)

    axes[2, 0].plot(x_beta, pdf, linewidth=2,

                    label=rf'$\alpha={alpha}, \beta={beta}$')

axes[2, 0].set_title(r'Beta: $f(x) = \frac{x^{\alpha-1}(1-x)^{\beta-1}}{B(\alpha,\beta)}$',

                     fontsize=11, fontweight='bold')

axes[2, 0].set_xlabel('x', fontsize=10)

axes[2, 0].set_ylabel('Probability Density', fontsize=10)

axes[2, 0].legend()

axes[2, 0].grid(True, alpha=0.3)

# Uniform distribution

x_uniform = np.linspace(-0.5, 3.5, 200)

for a, b in [(0, 1), (0, 2), (1, 3)]:

    # SciPy parameterizes the uniform by loc = a and scale = b - a

    pdf = stats.uniform.pdf(x_uniform, a, b-a)

    axes[2, 1].plot(x_uniform, pdf, linewidth=2,

                    label=f'[{a}, {b}]')

axes[2, 1].set_title(r'Uniform: $f(x) = \frac{1}{b-a}$ for $a \leq x \leq b$',

                     fontsize=11, fontweight='bold')

axes[2, 1].set_xlabel('x', fontsize=10)

axes[2, 1].set_ylabel('Probability Density', fontsize=10)

axes[2, 1].legend()

axes[2, 1].grid(True, alpha=0.3)

axes[2, 1].set_ylim(0, 1.5)

plt.suptitle('Common Probability Distributions in Machine Learning',

             fontsize=14, fontweight='bold')

plt.tight_layout()

plt.show()

# Demonstrate Central Limit Theorem

fig, axes = plt.subplots(2, 2, figsize=(14, 10))

# Original distribution (uniform)

n_samples = 10000

original = np.random.uniform(0, 1, n_samples)

axes[0, 0].hist(original, bins=50, alpha=0.7, color='skyblue', edgecolor='black', density=True)

axes[0, 0].set_title('Original Distribution (Uniform)', fontsize=12, fontweight='bold')

axes[0, 0].set_ylabel('Density', fontsize=10)

axes[0, 0].grid(True, alpha=0.3, axis='y')

# Sampling distributions with different sample sizes

sample_sizes = [2, 10, 30]

for idx, n in enumerate(sample_sizes):

    # Draw 10,000 samples of size n and record each sample mean

    sample_means = []

    for _ in range(10000):

        sample = np.random.uniform(0, 1, n)

        sample_means.append(np.mean(sample))

    # Panels 1-3 of the 2x2 grid (panel 0 holds the original distribution)

    ax_idx = idx + 1

    row = ax_idx // 2

    col = ax_idx % 2

    axes[row, col].hist(sample_means, bins=50, alpha=0.7, color='lightcoral',

                        edgecolor='black', density=True)

    # Overlay theoretical normal: mean 0.5, variance (1/12)/n

    mean_theory = 0.5

    std_theory = np.sqrt(1/12/n)

    x_theory = np.linspace(min(sample_means), max(sample_means), 100)

    axes[row, col].plot(x_theory, stats.norm.pdf(x_theory, mean_theory, std_theory),

                        'b-', linewidth=2, label='Theoretical Normal')

    axes[row, col].set_title(f'Sample Means (n={n})', fontsize=12, fontweight='bold')

    axes[row, col].set_ylabel('Density', fontsize=10)

    axes[row, col].legend()

    axes[row, col].grid(True, alpha=0.3, axis='y')

plt.suptitle('Central Limit Theorem: Sampling Distribution Approaches Normal',

             fontsize=14, fontweight='bold')

plt.tight_layout()

plt.show()

print("\nProbability Distribution Insights:")

print("=" * 60)

print("Normal: Symmetric, bell-shaped, most common in nature")

print("Binomial: Count of successes in fixed trials")

print("Poisson: Count of rare events in interval")

print("Exponential: Time between events")

print("Beta: Probabilities and proportions (bounded [0,1])")

print("Uniform: Equal probability over range")

print("\nCentral Limit Theorem:")

print("Sample means approach normal distribution regardless of")

print("original distribution shape (with sufficient sample size)")

These visualizations demonstrate how different distributions have distinct shapes and properties. Understanding these distributions helps you choose appropriate probability models for your data and understand the theoretical foundations of machine learning algorithms.
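The Central Limit Theorem demonstration can also be checked numerically without any plotting. The following is a sketch under stated assumptions: it uses NumPy's seeded default_rng generator and a vectorized draw (both choices made here for illustration, not taken from the code above) and compares the observed spread of sample means with the theoretical value sqrt(1/(12n)) for a Uniform(0, 1) source.

```python
import numpy as np

rng = np.random.default_rng(0)  # seeded generator for reproducibility
n_repeats = 20_000              # number of sample means per sample size

results = {}
for n in (2, 10, 30):
    # Each row is one sample of size n; the row mean is one sample mean
    means = rng.uniform(0, 1, size=(n_repeats, n)).mean(axis=1)
    observed_std = means.std()
    theoretical_std = np.sqrt(1 / (12 * n))  # Var(Uniform(0, 1)) = 1/12
    results[n] = (observed_std, theoretical_std)
    print(f"n={n:2d}  observed std={observed_std:.4f}  theory={theoretical_std:.4f}")
```

Drawing a (n_repeats, n) array in one call replaces the inner Python loop and is typically much faster, while the standard deviation of the sample means shrinks with the square root of n as the theory predicts.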

3D Visualization and Surface Plots

Three-dimensional plots are particularly valuable for visualizing optimization landscapes and functions of two variables, which appear frequently in machine learning theory.

import numpy as np

import matplotlib.pyplot as plt

from mpl_toolkits.mplot3d import Axes3D

# Create optimization landscape

fig = plt.figure(figsize=(16, 12))

# Surface plot 1: Simple quadratic (convex)

ax1 = fig.add_subplot(221, projection='3d')

x = np.linspace(-3, 3, 50)

y = np.linspace(-3, 3, 50)

X, Y = np.meshgrid(x, y)

Z1 = X**2 + Y**2

surf1 = ax1.plot_surface(X, Y, Z1, cmap='viridis', alpha=0.8, edgecolor='none')

ax1.set_xlabel('$x$', fontsize=11)

ax1.set_ylabel('$y$', fontsize=11)

ax1.set_zlabel('$f(x,y)$', fontsize=11)

ax1.set_title(r'Convex Function: $f(x,y) = x^2 + y^2$', fontsize=12, fontweight='bold')

fig.colorbar(surf1, ax=ax1, shrink=0.5, aspect=5)

# Surface plot 2: Saddle point

ax2 = fig.add_subplot(222, projection='3d')

Z2 = X**2 - Y**2

surf2 = ax2.plot_surface(X, Y, Z2, cmap='plasma', alpha=0.8, edgecolor='none')

ax2.set_xlabel('$x$', fontsize=11)

ax2.set_ylabel('$y$', fontsize=11)

ax2.set_zlabel('$f(x,y)$', fontsize=11)

ax2.set_title(r'Saddle Point: $f(x,y) = x^2 - y^2$', fontsize=12, fontweight='bold')

fig.colorbar(surf2, ax=ax2, shrink=0.5, aspect=5)

# Surface plot 3: Rosenbrock function (banana function)

ax3 = fig.add_subplot(223, projection='3d')

x3 = np.linspace(-2, 2, 50)

y3 = np.linspace(-1, 3, 50)

X3, Y3 = np.meshgrid(x3, y3)

Z3 = (1 - X3)**2 + 100 * (Y3 - X3**2)**2

surf3 = ax3.plot_surface(X3, Y3, Z3, cmap='coolwarm', alpha=0.8, edgecolor='none')

ax3.set_xlabel('$x$', fontsize=11)

ax3.set_ylabel('$y$', fontsize=11)

ax3.set_zlabel('$f(x,y)$', fontsize=11)

ax3.set_title(r'Rosenbrock: $f(x,y) = (1-x)^2 + 100(y-x^2)^2$', fontsize=12, fontweight='bold')

fig.colorbar(surf3, ax=ax3, shrink=0.5, aspect=5)

# Surface plot 4: Himmelblau's function (multiple minima)

ax4 = fig.add_subplot(224, projection='3d')

x4 = np.linspace(-5, 5, 50)

y4 = np.linspace(-5, 5, 50)

X4, Y4 = np.meshgrid(x4, y4)

Z4 = (X4**2 + Y4 - 11)**2 + (X4 + Y4**2 - 7)**2

surf4 = ax4.plot_surface(X4, Y4, Z4, cmap='terrain', alpha=0.8, edgecolor='none')

ax4.set_xlabel('$x$', fontsize=11)

ax4.set_ylabel('$y$', fontsize=11)

ax4.set_zlabel('$f(x,y)$', fontsize=11)

ax4.set_title("Himmelblau's Function (Multiple Minima)", fontsize=12, fontweight='bold')

fig.colorbar(surf4, ax=ax4, shrink=0.5, aspect=5)

plt.suptitle('Optimization Landscapes in 3D', fontsize=14, fontweight='bold')

plt.tight_layout()

plt.show()

# Contour plots (alternative representation)

fig, axes = plt.subplots(2, 2, figsize=(14, 12))

# Contour 1: Convex

contour1 = axes[0, 0].contour(X, Y, Z1, levels=20, cmap='viridis')

axes[0, 0].clabel(contour1, inline=True, fontsize=8)

axes[0, 0].set_xlabel('$x$', fontsize=11)

axes[0, 0].set_ylabel('$y$', fontsize=11)

axes[0, 0].set_title(r'Contour: $f(x,y) = x^2 + y^2$', fontsize=12, fontweight='bold')

axes[0, 0].plot(0, 0, 'r*', markersize=15, label='Global minimum')

axes[0, 0].legend()

axes[0, 0].grid(True, alpha=0.3)

# Contour 2: Saddle

contour2 = axes[0, 1].contour(X, Y, Z2, levels=20, cmap='plasma')

axes[0, 1].clabel(contour2, inline=True, fontsize=8)

axes[0, 1].set_xlabel('$x$', fontsize=11)

axes[0, 1].set_ylabel('$y$', fontsize=11)

axes[0, 1].set_title(r'Contour: $f(x,y) = x^2 - y^2$', fontsize=12, fontweight='bold')

axes[0, 1].plot(0, 0, 'r*', markersize=15, label='Saddle point')

axes[0, 1].legend()

axes[0, 1].grid(True, alpha=0.3)

# Contour 3: Rosenbrock

Z3_log = np.log(Z3 + 1) # Log scale for better visualization

contour3 = axes[1, 0].contour(X3, Y3, Z3_log, levels=30, cmap='coolwarm')

axes[1, 0].clabel(contour3, inline=True, fontsize=8)

axes[1, 0].set_xlabel('$x$', fontsize=11)

axes[1, 0].set_ylabel('$y$', fontsize=11)

axes[1, 0].set_title('Rosenbrock (log scale)', fontsize=12, fontweight='bold')

axes[1, 0].plot(1, 1, 'r*', markersize=15, label='Global minimum (1,1)')

axes[1, 0].legend()

axes[1, 0].grid(True, alpha=0.3)

# Contour 4: Himmelblau

Z4_log = np.log(Z4 + 1)

contour4 = axes[1, 1].contour(X4, Y4, Z4_log, levels=30, cmap='terrain')

axes[1, 1].clabel(contour4, inline=True, fontsize=8)

axes[1, 1].set_xlabel('$x$', fontsize=11)

axes[1, 1].set_ylabel('$y$', fontsize=11)

axes[1, 1].set_title("Himmelblau (log scale)", fontsize=12, fontweight='bold')

axes[1, 1].grid(True, alpha=0.3)

plt.suptitle('Contour Plots: Top-Down View of Landscapes', fontsize=14, fontweight='bold')

plt.tight_layout()

plt.show()

print("\nOptimization Landscape Types:")

print("=" * 60)

print("Convex: Single global minimum, easy to optimize")

print("Saddle point: Gradient is zero but not minimum/maximum")

print("Rosenbrock: Narrow valley, challenging for optimization")

print("Himmelblau: Multiple local minima, hard problem")

print("\nVisualization techniques:")

print("3D surface plots: Show full shape of function")

print("Contour plots: Top-down view, easier to read exact values")

print("Log scale: Better visualization for functions with large range")

These 3D visualizations and contour plots make the geometry of optimization problems visible. Understanding the shape of the optimization landscape helps explain why certain algorithms work better than others for different problems. Convex functions are easy to optimize because any local minimum is global. Non-convex functions with multiple minima or saddle points require more sophisticated optimization strategies.
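To connect the landscapes to optimizer behavior, here is a minimal sketch of plain fixed-step gradient descent run from several starting points. The helper names, starting points, learning rates, and step counts are assumptions picked for illustration; the gradients are derived by hand from the two formulas plotted above.

```python
import numpy as np

def gradient_descent(grad, start, lr, steps):
    """Plain fixed-step gradient descent; returns the final point."""
    point = np.array(start, dtype=float)
    for _ in range(steps):
        point = point - lr * grad(point)
    return point

def bowl_grad(p):
    """Gradient of the convex bowl f(x, y) = x^2 + y^2."""
    return 2 * p

def himmelblau(p):
    x, y = p
    return (x**2 + y - 11)**2 + (x + y**2 - 7)**2

def himmelblau_grad(p):
    """Analytic gradient of Himmelblau's function."""
    x, y = p
    a = x**2 + y - 11
    b = x + y**2 - 7
    return np.array([4 * x * a + 2 * b, 2 * a + 4 * y * b])

# Illustrative starting points, one in each quadrant-like region
starts = [(2.5, 2.5), (-2.5, 2.5), (-3.5, -3.5), (3.5, -1.5)]

bowl_ends = [gradient_descent(bowl_grad, s, lr=0.1, steps=200) for s in starts]
himmel_ends = [gradient_descent(himmelblau_grad, s, lr=0.005, steps=2000) for s in starts]

for s, e in zip(starts, himmel_ends):
    print(s, "->", np.round(e, 3), f"f = {himmelblau(e):.2e}")
```

On the convex bowl every start ends at the origin, whereas on Himmelblau's function each start settles into a different minimum, so the result depends on initialization.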

Visualizing Machine Learning Models and Decision Boundaries

Visualization of model predictions and decision boundaries provides crucial insights into how models make decisions.

import numpy as np

import matplotlib.pyplot as plt

from sklearn.datasets import make_classification, make_regression

from sklearn.linear_model import LogisticRegression, LinearRegression

from sklearn.tree import DecisionTreeClassifier

from sklearn.svm import SVC

# Generate classification data

X_class, y_class = make_classification(n_samples=200, n_features=2, n_redundant=0,

                                       n_informative=2, n_clusters_per_class=1,

                                       random_state=42)

# Train different classifiers

models = {

    'Logistic Regression': LogisticRegression(),

    'Decision Tree': DecisionTreeClassifier(max_depth=3),

    'SVM (RBF kernel)': SVC(kernel='rbf', gamma=2)

}

fig, axes = plt.subplots(1, 3, figsize=(18, 5))

for idx, (name, model) in enumerate(models.items()):

    # Train model

    model.fit(X_class, y_class)

    # Create mesh for decision boundary

    x_min, x_max = X_class[:, 0].min() - 1, X_class[:, 0].max() + 1

    y_min, y_max = X_class[:, 1].min() - 1, X_class[:, 1].max() + 1

    xx, yy = np.meshgrid(np.linspace(x_min, x_max, 200),

                         np.linspace(y_min, y_max, 200))

    # Predict on mesh

    Z = model.predict(np.c_[xx.ravel(), yy.ravel()])

    Z = Z.reshape(xx.shape)

    # Plot decision boundary

    axes[idx].contourf(xx, yy, Z, alpha=0.3, cmap='RdYlBu')

    # Plot data points

    scatter = axes[idx].scatter(X_class[:, 0], X_class[:, 1], c=y_class,

                                cmap='RdYlBu', edgecolors='black', s=50, alpha=0.7)

    axes[idx].set_title(f'{name}\nDecision Boundary', fontsize=12, fontweight='bold')

    axes[idx].set_xlabel('Feature 1', fontsize=11)

    axes[idx].set_ylabel('Feature 2', fontsize=11)

    axes[idx].grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

# Linear regression visualization with an approximate prediction band
X_reg = np.random.rand(100, 1) * 10
y_reg = 2.5 * X_reg.ravel() + 3 + np.random.randn(100) * 2

model_reg = LinearRegression()
model_reg.fit(X_reg, y_reg)
y_pred = model_reg.predict(X_reg)

# Approximate prediction band (simplified): +/- 2 standard deviations of the residuals
residuals = y_reg - y_pred
std_error = np.std(residuals)

# Sort by X so the fitted line and the shaded band are drawn left to right;
# fill_between produces a tangled polygon when x is unsorted
order = np.argsort(X_reg.ravel())
x_sorted = X_reg.ravel()[order]
y_sorted = y_pred[order]

fig, axes = plt.subplots(1, 2, figsize=(14, 5))

# Plot 1: Scatter with regression line
axes[0].scatter(X_reg, y_reg, alpha=0.5, s=50, edgecolors='black', linewidth=0.5)
axes[0].plot(x_sorted, y_sorted, 'r-', linewidth=2, label='Fitted line')
axes[0].fill_between(x_sorted, y_sorted - 2*std_error, y_sorted + 2*std_error,
                     alpha=0.2, color='red', label='Approx. 95% prediction interval')
axes[0].set_xlabel('X', fontsize=11)
axes[0].set_ylabel('y', fontsize=11)
axes[0].set_title(rf'Linear Regression: $y = {model_reg.coef_[0]:.2f}x + {model_reg.intercept_:.2f}$',
                  fontsize=12, fontweight='bold')
axes[0].legend()
axes[0].grid(True, alpha=0.3)

# Plot 2: Residual plot (reuses the residuals computed above)
axes[1].scatter(y_pred, residuals, alpha=0.5, s=50, edgecolors='black', linewidth=0.5)
axes[1].axhline(y=0, color='red', linestyle='--', linewidth=2)
axes[1].set_xlabel('Predicted values', fontsize=11)
axes[1].set_ylabel('Residuals', fontsize=11)
axes[1].set_title('Residual Plot', fontsize=12, fontweight='bold')
axes[1].grid(True, alpha=0.3)

plt.tight_layout()
plt.show()

# Learning curves
from sklearn.model_selection import learning_curve

train_sizes = np.linspace(0.1, 1.0, 10)
train_sizes_abs, train_scores, val_scores = learning_curve(
    LogisticRegression(), X_class, y_class, train_sizes=train_sizes,
    cv=5, scoring='accuracy', n_jobs=-1
)

# Mean and spread across the cross-validation folds
train_mean = np.mean(train_scores, axis=1)
train_std = np.std(train_scores, axis=1)
val_mean = np.mean(val_scores, axis=1)
val_std = np.std(val_scores, axis=1)

fig, ax = plt.subplots(figsize=(10, 6))

ax.plot(train_sizes_abs, train_mean, 'o-', linewidth=2, label='Training score', color='blue')
ax.fill_between(train_sizes_abs, train_mean - train_std, train_mean + train_std,
                alpha=0.2, color='blue')
ax.plot(train_sizes_abs, val_mean, 'o-', linewidth=2, label='Validation score', color='red')
ax.fill_between(train_sizes_abs, val_mean - val_std, val_mean + val_std,
                alpha=0.2, color='red')

ax.set_xlabel('Training Set Size', fontsize=12)
ax.set_ylabel('Accuracy', fontsize=12)
ax.set_title('Learning Curves: Training vs Validation Performance', fontsize=14, fontweight='bold')
ax.legend(loc='lower right', fontsize=11)
ax.grid(True, alpha=0.3)
ax.set_ylim(0.6, 1.05)

plt.tight_layout()
plt.show()

print("\nModel Visualization Insights:")
print("=" * 60)
print("Decision boundaries: Show how models partition feature space")
print("Linear models: Create straight/flat decision boundaries")
print("Non-linear models: Can create complex, curved boundaries")
print("Residual plots: Check for patterns indicating model problems")
print("Learning curves: Diagnose underfitting vs overfitting")
print(" - Gap between curves: overfitting")
print(" - Both curves low: underfitting")
print(" - Converging curves: good generalization")

These visualizations reveal how different algorithms make decisions and how well they generalize. Decision boundary plots show the fundamental differences between linear and non-linear models. Residual plots help diagnose problems like heteroscedasticity or non-linearity. Learning curves indicate whether adding more data would improve performance.
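
As a rough illustration of what heteroscedasticity looks like in a residual plot, the sketch below fits a straight line to two synthetic datasets that share the same trend but differ in their noise. The data, the noise scales, and the `spread_ratio` summary (residual standard deviation in the upper half of the fitted values divided by that in the lower half) are assumptions made for this example, not a formal statistical test.

```python
import numpy as np
import matplotlib.pyplot as plt

rng = np.random.default_rng(0)
x = np.linspace(1, 10, 200)

# Synthetic data (assumed for this sketch): same line, two noise models
datasets = {
    'Constant variance': 2.5 * x + 3 + rng.normal(0, 2, size=x.size),
    'Heteroscedastic': 2.5 * x + 3 + rng.normal(0, 1, size=x.size) * 0.6 * x,
}

spread_ratio = {}
fig, axes = plt.subplots(1, 2, figsize=(14, 5), sharey=True)
for ax, (title, y) in zip(axes, datasets.items()):
    # Ordinary least-squares line via polyfit
    slope, intercept = np.polyfit(x, y, deg=1)
    fitted = slope * x + intercept
    residuals = y - fitted

    # Compare residual spread for the upper vs lower half of the fitted values
    order = np.argsort(fitted)
    half = x.size // 2
    upper_std = residuals[order[half:]].std()
    lower_std = residuals[order[:half]].std()
    spread_ratio[title] = upper_std / lower_std

    ax.scatter(fitted, residuals, alpha=0.5, s=40, edgecolors='black', linewidth=0.5)
    ax.axhline(y=0, color='red', linestyle='--', linewidth=2)
    ax.set_title(f'{title}\nspread ratio = {spread_ratio[title]:.2f}',
                 fontsize=12, fontweight='bold')
    ax.set_xlabel('Predicted values', fontsize=11)
    ax.grid(True, alpha=0.3)
axes[0].set_ylabel('Residuals', fontsize=11)
fig.tight_layout()
```

A ratio near 1 corresponds to an even horizontal band of residuals, while a ratio well above 1 shows up as the funnel shape typical of noise that grows with the predicted value. Add `plt.show()` to display the figure.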

Creating Publication-Quality Figures

Professional visualizations require attention to aesthetics, clarity, and effective communication of insights.

import numpy as np
import matplotlib.pyplot as plt

# Set style for publication-quality plots
plt.style.use('seaborn-v0_8-darkgrid')

# Create comprehensive figure with multiple elements
fig = plt.figure(figsize=(16, 10))

# Define grid for subplots
gs = fig.add_gridspec(3, 3, hspace=0.3, wspace=0.3)

# Main plot: Large subplot spanning 2x2
ax_main = fig.add_subplot(gs[0:2, 0:2])

# Generate data for main plot
x = np.linspace(0, 10, 100)
y1 = np.sin(x) * np.exp(-x/5)
y2 = np.cos(x) * np.exp(-x/5)

ax_main.plot(x, y1, linewidth=2.5, label='Damped Sine', color='#2E86AB', alpha=0.8)
ax_main.plot(x, y2, linewidth=2.5, label='Damped Cosine', color='#A23B72', alpha=0.8)
ax_main.fill_between(x, y1, alpha=0.2, color='#2E86AB')
ax_main.fill_between(x, y2, alpha=0.2, color='#A23B72')

ax_main.set_xlabel('Time (seconds)', fontsize=13, fontweight='bold')
ax_main.set_ylabel('Amplitude', fontsize=13, fontweight='bold')
ax_main.set_title('Damped Oscillations', fontsize=15, fontweight='bold', pad=20)
ax_main.legend(fontsize=11, frameon=True, shadow=True, loc='upper right')
ax_main.grid(True, alpha=0.3, linestyle='--')

# Add equation as text
ax_main.text(0.05, 0.95, r'$f(x) = \sin(x) \cdot e^{-x/5}$',
             transform=ax_main.transAxes, fontsize=12,
             verticalalignment='top',
             bbox=dict(boxstyle='round', facecolor='wheat', alpha=0.7))

# Histogram
ax_hist = fig.add_subplot(gs[0, 2])
data_hist = np.random.randn(1000)
n, bins, patches = ax_hist.hist(data_hist, bins=30, alpha=0.7, color='#F18F01',
                                edgecolor='black', linewidth=0.5)

# Color bars by height
cm = plt.cm.viridis
norm = plt.Normalize(vmin=n.min(), vmax=n.max())
for i, patch in enumerate(patches):
    patch.set_facecolor(cm(norm(n[i])))

ax_hist.set_xlabel('Value', fontsize=10, fontweight='bold')
ax_hist.set_ylabel('Frequency', fontsize=10, fontweight='bold')
ax_hist.set_title('Distribution', fontsize=11, fontweight='bold')

# Scatter plot
ax_scatter = fig.add_subplot(gs[1, 2])
x_scatter = np.random.randn(100)
y_scatter = 2 * x_scatter + np.random.randn(100) * 0.5
scatter = ax_scatter.scatter(x_scatter, y_scatter, c=x_scatter,
                             cmap='coolwarm', s=80, alpha=0.6,
                             edgecolors='black', linewidth=0.5)

ax_scatter.set_xlabel('X', fontsize=10, fontweight='bold')
ax_scatter.set_ylabel('Y', fontsize=10, fontweight='bold')
ax_scatter.set_title('Correlation Plot', fontsize=11, fontweight='bold')

# Add colorbar
cbar = plt.colorbar(scatter, ax=ax_scatter)
cbar.set_label('X value', fontsize=9)

# Box plot
ax_box = fig.add_subplot(gs[2, 0])
data_box = [np.random.normal(0, std, 100) for std in range(1, 5)]
bp = ax_box.boxplot(data_box, patch_artist=True, notch=True)

# Set tick labels separately: the `labels` keyword of boxplot() is deprecated
# in recent Matplotlib releases (renamed to `tick_labels` in 3.9)
ax_box.set_xticklabels(['A', 'B', 'C', 'D'])

# Color boxes
colors = ['#FFB4A2', '#E5989B', '#B5838D', '#6D6875']
for patch, color in zip(bp['boxes'], colors):
    patch.set_facecolor(color)

ax_box.set_ylabel('Value', fontsize=10, fontweight='bold')
ax_box.set_title('Group Comparison', fontsize=11, fontweight='bold')
ax_box.grid(True, alpha=0.3, axis='y')

# Bar plot
ax_bar = fig.add_subplot(gs[2, 1])
categories = ['Model A', 'Model B', 'Model C', 'Model D']
performance = [0.85, 0.92, 0.88, 0.95]
colors_bar = ['#E63946', '#F1FAEE', '#A8DADC', '#457B9D']

bars = ax_bar.bar(categories, performance, color=colors_bar,
                  edgecolor='black', linewidth=1.5, alpha=0.8)

# Add value labels on bars
for bar in bars:
    height = bar.get_height()
    ax_bar.text(bar.get_x() + bar.get_width()/2., height,
                f'{height:.2f}',
                ha='center', va='bottom', fontweight='bold')

ax_bar.set_ylabel('Accuracy', fontsize=10, fontweight='bold')
ax_bar.set_title('Model Performance', fontsize=11, fontweight='bold')
ax_bar.set_ylim(0, 1.1)
ax_bar.grid(True, alpha=0.3, axis='y')

# Heatmap: correlation matrix of 10 random variables (100 samples each),
# so the values really are correlations in [-1, 1]
ax_heatmap = fig.add_subplot(gs[2, 2])
data_heatmap = np.corrcoef(np.random.randn(10, 100))
im = ax_heatmap.imshow(data_heatmap, cmap='RdYlBu_r', aspect='auto', vmin=-1, vmax=1)
ax_heatmap.set_title('Correlation Matrix', fontsize=11, fontweight='bold')
cbar = plt.colorbar(im, ax=ax_heatmap)
cbar.set_label('Correlation', fontsize=9)

plt.suptitle('Comprehensive Data Visualization Dashboard',
             fontsize=16, fontweight='bold', y=0.98)

# Save to the current working directory; adjust the path as needed
plt.savefig('publication_quality_figure.png',
            dpi=300, bbox_inches='tight')
plt.show()

print("\nPublication-Quality Figure Guidelines:")
print("=" * 60)
print("1. Use consistent color schemes across related plots")
print("2. Include clear, descriptive titles and axis labels")
print("3. Add legends for multiple data series")
print("4. Use appropriate font sizes (readable but not overwhelming)")
print("5. Include mathematical equations using LaTeX formatting")
print("6. Add annotations to highlight important features")
print("7. Use colorbars for color-mapped data")
print("8. Save at high DPI (300+) for publication")
print("9. Use tight_layout() to prevent overlap")
print("10. Choose appropriate plot types for your data")

print("\nColor palette considerations:")
print("- Use colorblind-friendly palettes")
print("- Maintain sufficient contrast")
print("- Be consistent within a publication")
print("- Consider grayscale printing")

Creating publication-quality figures requires careful attention to every element. Font sizes should be large enough to read when the figure is printed or included in a presentation. Colors should be distinguishable even when printed in grayscale. Legends, titles, and labels should clearly communicate what is being shown. Mathematical notation using LaTeX makes equations clear and professional.
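
One way to check the grayscale advice is to estimate how bright each color appears once hue is removed. The sketch below applies the common Rec. 601 luma weights to the four bar colors from the dashboard code and flags pairs that end up nearly the same shade of gray. The `luma` helper and the 0.1 threshold are assumptions made for illustration, and the weighting only approximates how a printer or viewer converts color to gray.

```python
from itertools import combinations

import numpy as np
import matplotlib.pyplot as plt
from matplotlib.colors import to_rgb


def luma(color):
    """Approximate perceived brightness in [0, 1] using Rec. 601 weights."""
    r, g, b = to_rgb(color)
    return 0.299 * r + 0.587 * g + 0.114 * b


palette = ['#E63946', '#F1FAEE', '#A8DADC', '#457B9D']  # bar colors used above
lumas = [luma(c) for c in palette]

# Flag pairs that would be hard to tell apart in grayscale.
# The 0.1 threshold is an arbitrary, illustrative choice.
clashes = [(a, b) for a, b in combinations(palette, 2)
           if abs(luma(a) - luma(b)) < 0.1]

# Show the palette in color (top) and as approximate grayscale (bottom)
rgb_row = np.array([[to_rgb(c) for c in palette]])
gray_row = np.array([[(value, value, value) for value in lumas]])

fig, axes = plt.subplots(2, 1, figsize=(6, 3))
for ax, row, title in zip(axes, [rgb_row, gray_row],
                          ['Color', 'Grayscale (approx.)']):
    ax.imshow(row, aspect='auto')
    ax.set_title(title, fontsize=11, fontweight='bold')
    ax.set_xticks(range(len(palette)))
    ax.set_xticklabels(palette, fontsize=9)
    ax.set_yticks([])
    ax.grid(False)
fig.tight_layout()

print("Pairs that nearly match in grayscale:", clashes)
```

Under this approximation the red `#E63946` and the blue `#457B9D` come out at almost the same gray level, so bars colored this way would be hard to tell apart in a grayscale print. Varying lightness as well as hue, or adding hatching or direct labels, avoids the problem.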

Conclusion: Visualization as a Core Machine Learning Skill

Visualization is not merely a tool for creating pretty pictures to include in reports and presentations. It is a fundamental analytical technique that enables understanding, discovery, and communication throughout the machine learning workflow. From the earliest stages of exploratory data analysis through final model evaluation and deployment, visualization provides insights that numerical summaries alone cannot reveal.

The skills developed in this comprehensive guide form the foundation for effective data science communication. Understanding how to create basic plots gives you the building blocks for more complex visualizations. Knowing how to customize appearance ensures your visualizations communicate clearly and professionally. Mastering different plot types enables you to choose the right visualization for each purpose, whether exploring data distributions, comparing model performance, or communicating results to stakeholders.

Matplotlib’s flexibility allows you to create virtually any visualization you can envision. The pyplot interface provides quick, convenient plotting for interactive work. The object-oriented interface offers precise control for complex multi-panel figures. The extensive customization options enable you to match your organization’s style guidelines or publication requirements. Integration with NumPy, Pandas, and scikit-learn makes Matplotlib the natural choice for machine learning visualization.
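
The difference between the two interfaces is easiest to see side by side. The following minimal sketch draws a sine curve with pyplot's state-based calls, then builds a two-panel figure through explicit `Figure` and `Axes` objects. The data and variable names are illustrative assumptions.

```python
import numpy as np
import matplotlib.pyplot as plt

x = np.linspace(0, 2 * np.pi, 200)

# pyplot (state-based): each call acts on the "current" figure and axes
plt.figure(figsize=(6, 4))
plt.plot(x, np.sin(x), label='sin(x)')
plt.title('pyplot interface')
plt.xlabel('x')
plt.legend()

# Object-oriented: keep explicit references to the Figure and each Axes
fig, (ax_left, ax_right) = plt.subplots(1, 2, figsize=(10, 4))
ax_left.plot(x, np.sin(x), color='#2E86AB')
ax_left.set_title('sin(x)')
ax_right.plot(x, np.cos(x), color='#A23B72')
ax_right.set_title('cos(x)')
for ax in (ax_left, ax_right):
    ax.set_xlabel('x')
    ax.grid(True, alpha=0.3)
fig.tight_layout()
```

With explicit `Axes` objects there is no ambiguity about which panel a call modifies, which is why the multi-panel figures in this guide use that style. Add `plt.show()` to display both figures.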

As you continue developing your machine learning expertise, treat visualization as an integral part of your analysis, not an afterthought. Before training models, visualize your data to understand distributions, identify outliers, and discover relationships. During model development, visualize learning curves, decision boundaries, and performance metrics to diagnose problems and guide improvements. When presenting results, use carefully designed visualizations to communicate insights effectively to both technical and non-technical audiences.

The visualization techniques covered here represent foundational skills that you will use throughout your career. As you encounter new types of data and problems, you will adapt these techniques and develop new ones. The key is to always ask yourself: what visualization would make this concept, pattern, or result clearer? With practice, you will develop intuition for which visualizations reveal the most insight for each situation, and you will become proficient at creating them efficiently using Matplotlib’s powerful capabilities.