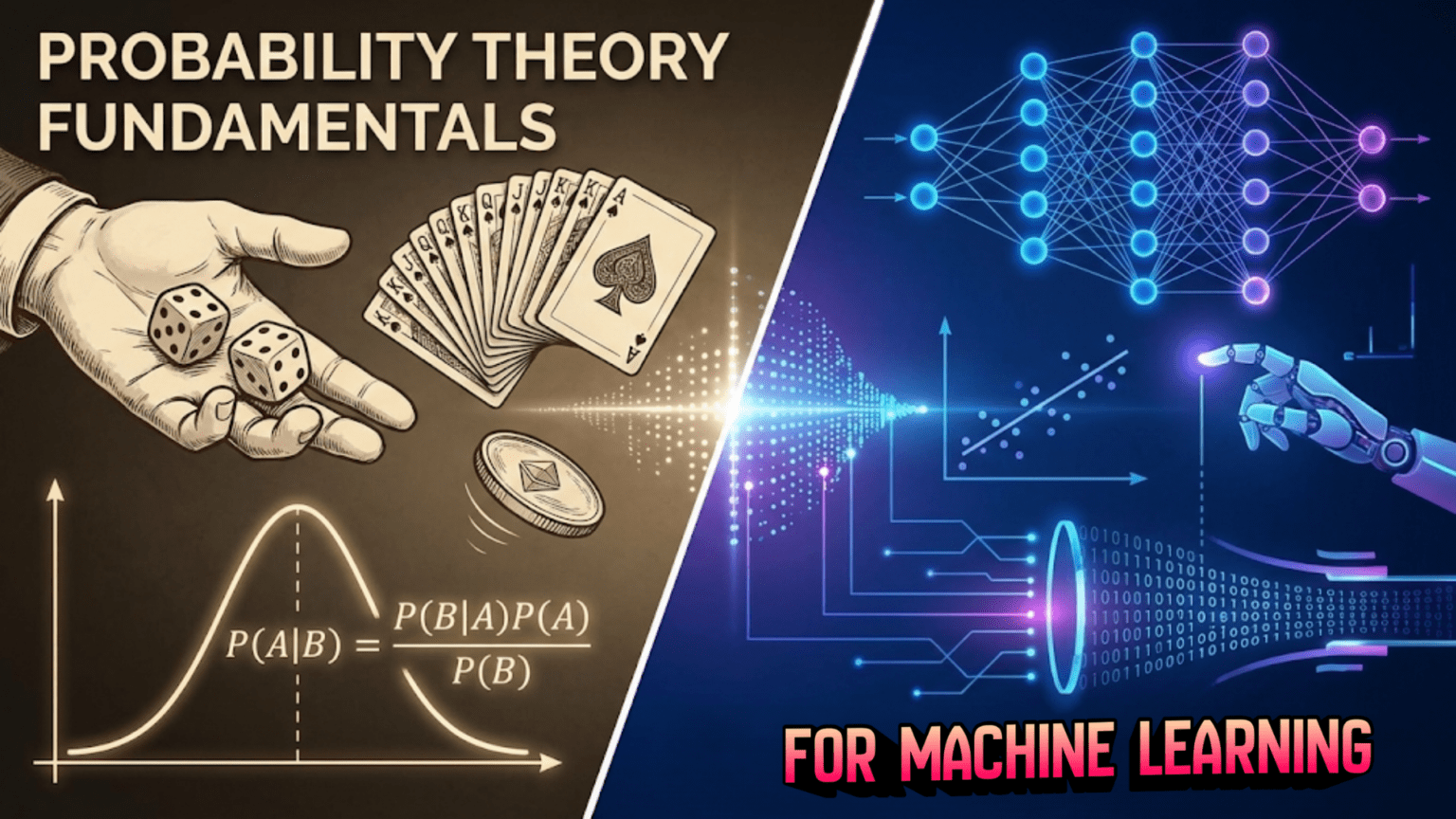

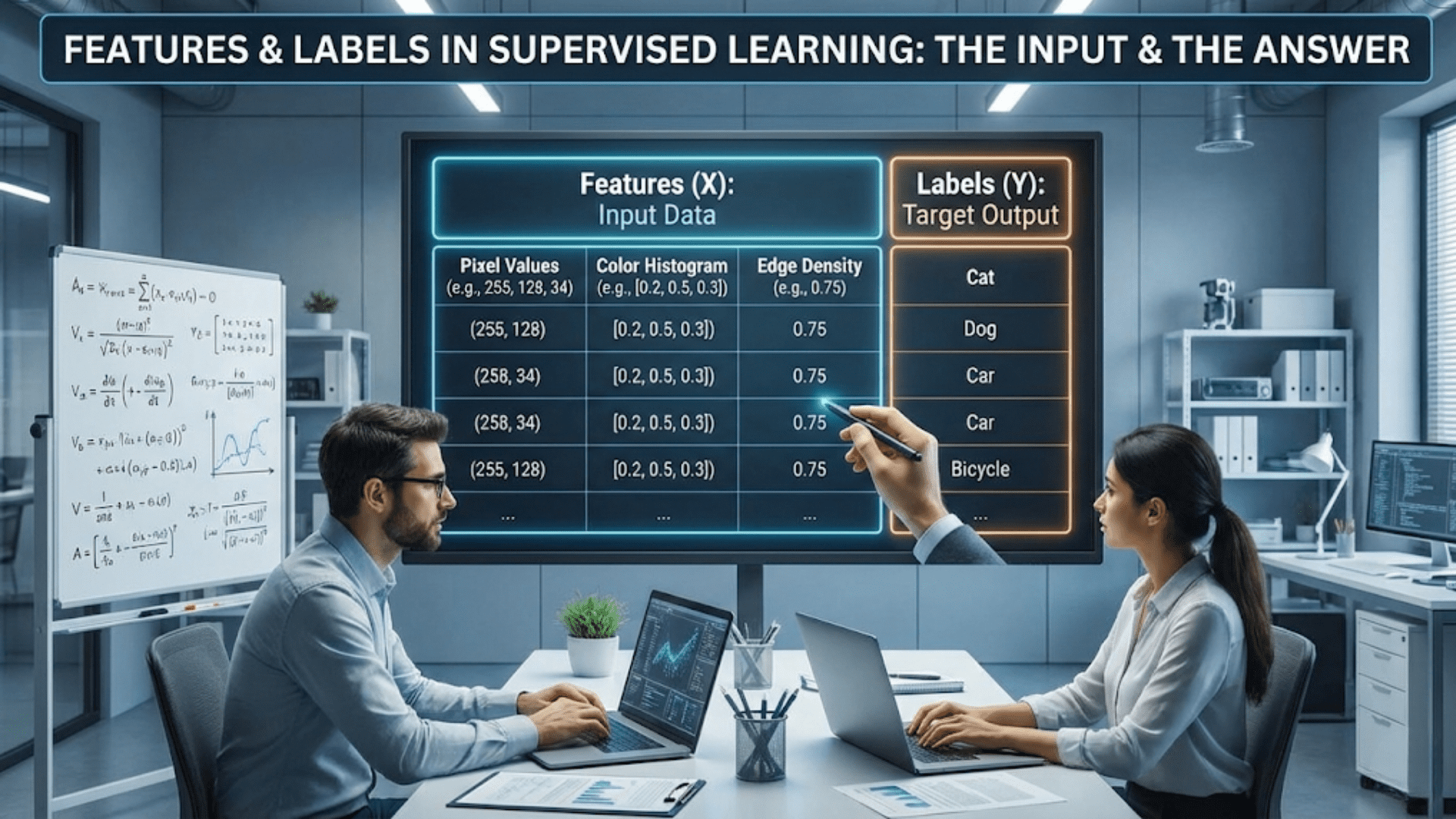

Introduction: Why Probability is the Language of Uncertainty

Imagine you’re a doctor trying to diagnose a patient who comes in with a fever and a cough. These symptoms could indicate many different conditions, from a common cold to something more serious. You can’t be absolutely certain about the diagnosis, but you need to make informed decisions based on incomplete information. How do you reason about this uncertainty? How do you weigh the evidence of different symptoms against the likelihood of various diseases? The answer lies in probability theory.

Probability theory is the mathematical framework for reasoning about uncertainty, and it’s absolutely fundamental to machine learning. While we often think of computers as precise, deterministic machines that always produce the same output for the same input, machine learning is fundamentally about making predictions and decisions in the face of uncertainty. We work with noisy data, incomplete information, and inherent randomness in the world. Probability theory gives us the tools to handle all of this systematically and rigorously.

In this comprehensive guide, we’ll explore the foundations of probability theory and discover why it’s so crucial for understanding machine learning. You’ll learn about random variables, probability distributions, conditional probability, and Bayes’ theorem. We’ll build your intuition with everyday examples before diving into the mathematical details, and we’ll see how these concepts directly apply to real machine learning algorithms. Whether you’re completely new to probability or need a refresher with a machine learning focus, this article gives you the essential knowledge for understanding how artificial intelligence handles uncertainty.

By the end of this journey, you’ll understand not just the mechanics of probability calculations, but the deeper insight that probability provides. You’ll see why Naive Bayes classifiers work, how we can quantify our uncertainty in predictions, why probabilistic graphical models are so powerful, and how concepts like maximum likelihood estimation underpin many learning algorithms. Most importantly, you’ll develop the probabilistic thinking that’s essential for understanding modern machine learning.

What is Probability? Understanding Randomness and Uncertainty

At its core, probability is a number between zero and one that represents how likely something is to happen. A probability of zero means an event is impossible, a probability of one means it’s certain, and values in between represent various degrees of likelihood. When you flip a fair coin, the probability of getting heads is 0.5, meaning that if you flipped the coin many times, you’d expect to see heads about half the time.

But probability is more subtle than just counting outcomes. It’s a framework for quantifying our uncertainty about events, and there are actually two major philosophical interpretations of what probability means. The frequentist interpretation views probability as a long-run frequency. If we say the probability of rain tomorrow is 0.3, a frequentist interpretation means that out of many days with similar weather conditions, about thirty percent of them would see rain. This interpretation works well for repeatable events like coin flips or dice rolls.

The Bayesian interpretation, on the other hand, views probability as a degree of belief or confidence. When we say there’s a 0.3 probability of rain tomorrow, we’re expressing our subjective confidence based on available information. This interpretation is particularly useful for one-off events that can’t be repeated, like “What’s the probability that this email is spam?” or “What’s the probability that this patient has disease X?” Both interpretations have their place in machine learning, and understanding both will deepen your grasp of probabilistic reasoning.

Let’s start with some fundamental concepts. An experiment or trial is any process that can produce different outcomes. Rolling a die is an experiment. The sample space is the set of all possible outcomes. For a standard six-sided die, the sample space is the set containing one, two, three, four, five, and six. An event is any subset of the sample space. For instance, “rolling an even number” is an event that includes the outcomes two, four, and six.

The probability of an event is denoted as P(A), where A is the event. For equally likely outcomes, which is often the case with games of chance, we can calculate probability using a simple formula:

P(A) = (Number of outcomes in A) / (Total number of outcomes)

For example, the probability of rolling an even number on a fair die is three divided by six, which equals 0.5, since there are three even numbers out of six total possibilities.
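The counting formula translates directly into code. The sketch below assumes all outcomes are equally likely. The helper name `classical_probability` is a hypothetical choice for illustration, and `fractions.Fraction` keeps the result exact instead of rounding it to a float.

```python
from fractions import Fraction

def classical_probability(event, sample_space):
    """P(A) = |A| / |S|, valid only when all outcomes in S are equally likely."""
    # Count only outcomes that actually belong to the sample space
    favorable = sum(1 for outcome in sample_space if outcome in event)
    return Fraction(favorable, len(sample_space))

die = {1, 2, 3, 4, 5, 6}
even = {2, 4, 6}
p_even = classical_probability(even, die)
print(p_even, float(p_even))  # 1/2 0.5
```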

However, not all events have equally likely outcomes. Tomorrow it will either rain or not, but that does not make the probability of rain 1/2, because the two outcomes are not equally likely. This is where we need more sophisticated approaches, and where the connection to machine learning becomes clear. Machine learning algorithms learn probabilities from data, estimating how likely different outcomes are based on patterns they observe.

The Axioms of Probability: The Foundation Rules

Like any mathematical system, probability theory is built on a small set of fundamental rules called axioms. These axioms, formalized by the Russian mathematician Andrey Kolmogorov in 1933, provide the foundation for all probability reasoning.

Axiom 1: Non-negativity. The probability of any event is always greater than or equal to zero. You can’t have a negative probability. Mathematically, for any event A:

P(A) >= 0

This makes intuitive sense. Probability represents likelihood, and it doesn’t make sense to talk about something being “negatively likely.”

Axiom 2: Normalization. The probability of the entire sample space (the event that something happens) equals one. If S represents all possible outcomes:

P(S) = 1

This axiom captures the idea that when you perform an experiment, something must happen. One of the outcomes in your sample space will occur with certainty.

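Axiom 3: Additivity. If two events A and B are mutually exclusive (they can’t both happen at the same time), then the probability that either A or B occurs is the sum of their individual probabilities:

P(A or B) = P(A) + P(B) [when A and B are mutually exclusive]

Kolmogorov’s formal statement is slightly stronger. It requires the same additivity for any countable sequence of pairwise mutually exclusive events, not only for two.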

For example, when rolling a die, the events “rolling a 1” and “rolling a 6” are mutually exclusive because you can’t roll both simultaneously. If we want the probability of rolling either a one or a six, we simply add the probabilities: 1/6 plus 1/6 equals 2/6, which simplifies to 1/3.

From these three simple axioms, all of probability theory follows. Every theorem, every formula, every probability calculation ultimately traces back to these fundamental rules.
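As a small sanity check, the three axioms can be verified mechanically for the fair die. This sketch represents the distribution as a dictionary from face to probability and represents events as Python sets. That representation and the helper `prob` are illustrative assumptions, not anything the axioms prescribe.

```python
from fractions import Fraction

# A fair die: each face gets probability 1/6
pmf = {face: Fraction(1, 6) for face in range(1, 7)}

def prob(event):
    """Probability of an event (a set of faces) under the PMF above."""
    return sum((pmf[face] for face in event), Fraction(0))

# Axiom 1: non-negativity
assert all(p >= 0 for p in pmf.values())
# Axiom 2: the whole sample space has probability 1
assert prob(set(pmf)) == 1
# Axiom 3: for mutually exclusive events, probabilities add
roll_one, roll_six = {1}, {6}
assert roll_one.isdisjoint(roll_six)
p_one_or_six = prob(roll_one | roll_six)
assert p_one_or_six == prob(roll_one) + prob(roll_six)
print(p_one_or_six)  # 1/3
```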

The Complement Rule and Basic Probability Laws

From the axioms, we can derive several useful rules that make probability calculations easier. The first is the complement rule. The complement of an event A, written as A’ or Ā or A^c (depending on notation preference), represents the event that A does not occur. Since either A happens or it doesn’t, and these are mutually exclusive events that cover all possibilities:

P(A) + P(A') = 1

Therefore:

P(A') = 1 - P(A)

This rule is incredibly useful. Sometimes it’s easier to calculate the probability that something doesn’t happen and then subtract from one. For example, if you want to know the probability of rolling at least one six in four rolls of a die, it’s much easier to calculate the probability of not rolling any sixes and subtract that from one.

Let’s work through this example. The probability of not rolling a six on a single roll is 5/6. For four independent rolls, the probability of not rolling a six on any of them is:

P(no sixes in 4 rolls) = (5/6) × (5/6) × (5/6) × (5/6) = (5/6)^4 ≈ 0.482

Therefore, the probability of rolling at least one six is:

P(at least one six) = 1 - 0.482 ≈ 0.518
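The same calculation can be done exactly with fractions and then cross-checked by simulation. This is a minimal sketch. The seed and the number of simulated games are arbitrary choices, so the simulated figure only approximates 0.518.

```python
import random
from fractions import Fraction

# Exact answer via the complement rule: 1 - P(no six in 4 independent rolls)
p_no_six = Fraction(5, 6) ** 4
p_at_least_one = 1 - p_no_six
print(p_at_least_one, round(float(p_at_least_one), 3))  # 671/1296 0.518

# Monte Carlo check: play many 4-roll games and count those containing a six
random.seed(0)
n_games = 100_000
hits = sum(
    any(random.randint(1, 6) == 6 for _ in range(4))
    for _ in range(n_games)
)
estimate = hits / n_games
print(f"simulated: {estimate:.3f}")
```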

Another important rule is the addition rule for events that aren’t mutually exclusive. When two events can occur simultaneously, we need to account for their overlap:

P(A or B) = P(A) + P(B) - P(A and B)

We subtract P(A and B) because when we add P(A) and P(B), we count the overlap twice, so we need to remove it once. Think of this like a Venn diagram where two circles overlap. To get the total area covered by either circle, you can’t just add their areas because you’d count the overlap region twice.

For example, suppose you’re drawing a card from a standard deck. What’s the probability of drawing either a heart or a face card? There are thirteen hearts and twelve face cards (three per suit), but three of those face cards are hearts. So:

P(heart or face card) = 13/52 + 12/52 - 3/52 = 22/52 ≈ 0.423
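Enumerating the 52 cards is a quick way to confirm the addition rule. In this sketch the deck is modeled as (rank, suit) pairs, and the helpers `is_heart`, `is_face`, and `p` are hypothetical names introduced for illustration.

```python
from fractions import Fraction
from itertools import product

ranks = ["A"] + [str(n) for n in range(2, 11)] + ["J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = list(product(ranks, suits))  # 52 (rank, suit) pairs

def is_heart(card):
    return card[1] == "hearts"

def is_face(card):
    return card[0] in {"J", "Q", "K"}

def p(event):
    """Probability that a uniformly drawn card satisfies the event predicate."""
    return Fraction(sum(1 for card in deck if event(card)), len(deck))

# Addition rule: P(A or B) = P(A) + P(B) - P(A and B)
p_both = p(lambda c: is_heart(c) and is_face(c))
p_formula = p(is_heart) + p(is_face) - p_both
# Direct count of the union, for comparison
p_direct = p(lambda c: is_heart(c) or is_face(c))
print(p_formula, p_direct)  # 11/26 11/26
```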

Random Variables: Connecting Outcomes to Numbers

In many situations, we’re not just interested in abstract events, but in numerical outcomes. A random variable is a function that maps outcomes from the sample space to real numbers. Random variables allow us to work with probabilities in a more mathematical and structured way.

There are two types of random variables. Discrete random variables can take on only specific, countable values. The number of heads in ten coin flips is a discrete random variable that can be 0, 1, 2, up to 10. The number of emails you receive in a day is discrete. Continuous random variables can take on any value within a range. The height of a randomly selected person is continuous. The exact time until the next earthquake occurs is continuous.

Let’s focus first on discrete random variables. Suppose X is a discrete random variable representing the outcome when rolling a fair die. X can take values 1, 2, 3, 4, 5, or 6, each with probability 1/6. We write this as:

P(X = 1) = 1/6

P(X = 2) = 1/6

...

P(X = 6) = 1/6

The collection of all these probabilities is called the probability mass function (PMF) for discrete random variables. The PMF tells us the probability that the random variable equals each specific value.

For continuous random variables, things are slightly different. Since there are infinitely many possible values in any continuous range, the probability of any single exact value is zero. Instead, we work with probabilities over intervals, and we use a probability density function (PDF). The PDF describes the relative likelihood of different values, and the probability of the variable falling within an interval is the area under the PDF curve over that interval.
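To make the “area under the curve” idea concrete, the sketch below uses a standard normal distribution as the example continuous variable. That choice is an assumption, since the text does not name a distribution. The area is approximated with the trapezoidal rule and then compared against the exact value from the error function. The helper names are hypothetical.

```python
import math

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of a Normal(mu, sigma^2) random variable at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def area_under_pdf(pdf, a, b, n=10_000):
    """Approximate the integral of pdf over [a, b] with the trapezoidal rule."""
    h = (b - a) / n
    inner = sum(pdf(a + i * h) for i in range(1, n))
    return h * (0.5 * pdf(a) + inner + 0.5 * pdf(b))

def normal_cdf(x):
    """Exact standard normal CDF via the error function, for comparison."""
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))

p_interval = area_under_pdf(normal_pdf, -1.0, 1.0)
print(f"P(-1 <= X <= 1) ~ {p_interval:.4f}")
print(f"exact: {normal_cdf(1.0) - normal_cdf(-1.0):.4f}")
# A single point is an interval of zero width, so its probability is zero
print(area_under_pdf(normal_pdf, 0.5, 0.5))  # 0.0
```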

Let’s implement a simple discrete random variable in Python to make this concrete:

```python
import numpy as np
import matplotlib.pyplot as plt

# Simulate rolling a die many times (randint's upper bound is exclusive, so values are 1-6)
np.random.seed(42)
n_rolls = 10000
dice_rolls = np.random.randint(1, 7, size=n_rolls)

# Calculate the empirical probability mass function
values, counts = np.unique(dice_rolls, return_counts=True)
empirical_pmf = counts / n_rolls

# Plot the results
plt.figure(figsize=(10, 6))
plt.bar(values, empirical_pmf, alpha=0.7, color='blue', edgecolor='black')
plt.axhline(y=1/6, color='red', linestyle='--', label='Theoretical probability (1/6)')
plt.xlabel('Die Value')
plt.ylabel('Probability')
plt.title('Empirical Probability Mass Function from 10,000 Die Rolls')
plt.xticks(values)
plt.legend()
plt.grid(True, alpha=0.3)
plt.show()

# Print the empirical probabilities
print("Empirical probabilities from simulation:")
for value, prob in zip(values, empirical_pmf):
    print(f"P(X = {value}) = {prob:.4f}")
```

This code simulates rolling a die ten thousand times and calculates the empirical probability mass function. As the number of rolls increases, the empirical probabilities converge to the theoretical value of 1/6 for each outcome. This is an illustration of the law of large numbers, a fundamental result in probability theory.

Expected Value: The Average Outcome

One of the most important concepts for a random variable is its expected value, also called the mean or expectation. The expected value represents the average outcome you’d expect if you repeated the random experiment many times. It’s denoted as E[X] or sometimes μ (mu).

For a discrete random variable, the expected value is calculated as:

E[X] = Σ x × P(X = x)

This sum is taken over all possible values x that the random variable can take. In words, you multiply each possible value by its probability and sum them all up.

For our die-rolling example, the expected value is:

E[X] = 1×(1/6) + 2×(1/6) + 3×(1/6) + 4×(1/6) + 5×(1/6) + 6×(1/6)

= (1 + 2 + 3 + 4 + 5 + 6) / 6

= 21 / 6

= 3.5

Notice that the expected value is 3.5, even though you can never actually roll a 3.5 on a die. The expected value doesn’t have to be a value that the random variable can actually take. It represents the long-run average, the center of mass of the probability distribution.

The expected value is crucial in machine learning for several reasons. When we train models, we often want to minimize the expected loss or error over all possible data points. When we make decisions, we might choose the action with the highest expected value (or the lowest expected cost). Expected value provides a principled way to make decisions under uncertainty.

The concept extends naturally to functions of random variables. If g(X) is some function of a random variable X, then:

E[g(X)] = Σ g(x) × P(X = x)

This is particularly useful because many machine learning objectives can be expressed as expected values of functions. For instance, the mean squared error, a common loss function, is the expected value of the squared difference between predictions and true values.
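Both formulas can be evaluated exactly for the fair die. This sketch assumes a PMF stored as a dictionary. `expectation` is a hypothetical helper that applies E[g(X)] = Σ g(x) × P(X = x) for any function g. The sketch also shows that E[X²] is not the same as (E[X])², a gap that reappears in the variance formula.

```python
from fractions import Fraction

# Fair die: P(X = x) = 1/6 for x in 1..6
pmf = {x: Fraction(1, 6) for x in range(1, 7)}

def expectation(pmf, g=lambda x: x):
    """E[g(X)] = sum over x of g(x) * P(X = x); g defaults to the identity."""
    return sum((g(x) * p for x, p in pmf.items()), Fraction(0))

e_x = expectation(pmf)                           # E[X]
e_x_squared = expectation(pmf, lambda x: x**2)   # E[X^2]
print(e_x, e_x_squared)  # 7/2 91/6
# In general E[g(X)] != g(E[X]): here 91/6 versus (7/2)^2 = 49/4
print(e_x ** 2)          # 49/4
```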

Here’s a Python implementation showing expected value in action:

```python
import numpy as np
from math import comb

# Discrete random variable: number of heads in 3 coin flips
# Possible values: 0, 1, 2, 3, with probabilities from the binomial distribution

def binomial_probability(n, k, p):
    """Calculate binomial probability: P(k successes in n trials with probability p)"""
    return comb(n, k) * (p ** k) * ((1 - p) ** (n - k))

n_flips = 3
p_heads = 0.5

# Calculate probabilities for each possible outcome
values = range(0, n_flips + 1)
probabilities = [binomial_probability(n_flips, k, p_heads) for k in values]

print("Probability Mass Function for number of heads in 3 coin flips:")
for k, prob in zip(values, probabilities):
    print(f"P(X = {k}) = {prob:.4f}")

# Calculate expected value analytically: E[X] = sum of k * P(X = k)
expected_value = sum(k * prob for k, prob in zip(values, probabilities))
print(f"\nExpected value (analytical): {expected_value:.4f}")

# Verify with simulation: each experiment counts the heads in n_flips flips
np.random.seed(42)
n_experiments = 100000
simulated_outcomes = [np.sum(np.random.rand(n_flips) < p_heads) for _ in range(n_experiments)]
simulated_mean = np.mean(simulated_outcomes)
print(f"Expected value (simulated): {simulated_mean:.4f}")

# For binomial distribution, E[X] = n * p
theoretical_expectation = n_flips * p_heads
print(f"Expected value (formula): {theoretical_expectation:.4f}")
```

This code demonstrates three ways to calculate expected value: directly from the probability mass function, through simulation, and using the theoretical formula for the binomial distribution. The analytical and formula results agree exactly (1.5), and the simulated mean lands very close to them, illustrating the consistency of probability theory.

Variance and Standard Deviation: Measuring Spread

While the expected value tells us the center of a distribution, it doesn’t tell us anything about the spread or variability. Consider two random variables: one that always equals 10 (no randomness at all), and one that equals either 0 or 20 with equal probability. Both have an expected value of 10, but they behave very differently.

The variance measures how spread out a distribution is. It’s defined as the expected value of the squared deviation from the mean:

Var(X) = E[(X - μ)²]

where μ is the expected value of X. This can also be computed using an equivalent but often more convenient formula:

Var(X) = E[X²] - (E[X])²

The variance is always non-negative (since it’s an expected value of squared quantities), and a larger variance indicates greater spread in the distribution.
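Applying the definition to the two random variables from the start of this section makes the difference explicit. One always equals 10, and the other equals 0 or 20 with equal probability. This is a minimal sketch, with `mean_and_variance` as a hypothetical helper:

```python
from fractions import Fraction

def mean_and_variance(pmf):
    """Return (E[X], Var(X)) for a PMF given as {value: probability}."""
    mean = sum(x * p for x, p in pmf.items())
    var = sum((x - mean) ** 2 * p for x, p in pmf.items())  # E[(X - mu)^2]
    return mean, var

constant = {10: Fraction(1)}                          # always 10
coin_flip = {0: Fraction(1, 2), 20: Fraction(1, 2)}  # 0 or 20, equally likely

for name, pmf in [("always 10", constant), ("0 or 20", coin_flip)]:
    m, v = mean_and_variance(pmf)
    print(f"{name}: mean = {m}, variance = {v}")
# always 10: mean = 10, variance = 0
# 0 or 20: mean = 10, variance = 100
```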

For our die example, let’s calculate the variance. We already know E[X] = 3.5. We need to find E[X²]:

E[X²] = 1²×(1/6) + 2²×(1/6) + 3²×(1/6) + 4²×(1/6) + 5²×(1/6) + 6²×(1/6)

= (1 + 4 + 9 + 16 + 25 + 36) / 6

= 91 / 6

≈ 15.167

Therefore:

Var(X) = 15.167 - (3.5)² = 15.167 - 12.25 = 2.917

The standard deviation is simply the square root of the variance:

σ = √Var(X) ≈ 1.708

Standard deviation has the same units as the original random variable (unlike variance, which has squared units), making it more interpretable. The standard deviation gives us a sense of the typical distance between a value and the mean.
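The die calculation can be reproduced exactly with fractions, using both the definition and the shortcut formula. This is a sketch for checking the arithmetic, not a general-purpose routine:

```python
import math
from fractions import Fraction

faces = range(1, 7)
p = Fraction(1, 6)  # fair die

mu = sum(x * p for x in faces)                          # E[X] = 7/2
var_definition = sum((x - mu) ** 2 * p for x in faces)  # E[(X - mu)^2]
var_shortcut = sum(x * x * p for x in faces) - mu ** 2  # E[X^2] - (E[X])^2
sigma = math.sqrt(var_definition)                       # standard deviation

print(var_definition, var_shortcut)                # 35/12 35/12
print(f"{float(var_definition):.3f} {sigma:.3f}")  # 2.917 1.708
```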

In machine learning, variance and standard deviation are everywhere. We use them to normalize features (making different features have comparable scales), to quantify uncertainty in predictions, to measure how much our model’s predictions vary, and to understand the bias-variance tradeoff that affects model performance.
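Feature normalization is the most direct of these uses. The sketch below standardizes values to zero mean and unit standard deviation (z-scores) using only the standard library. The feature values are made up for illustration, and using the population standard deviation (`statistics.pstdev`) rather than the sample version is an assumption.

```python
import statistics

def standardize(values):
    """Rescale values to mean 0 and (population) standard deviation 1."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)
    return [(v - mu) / sigma for v in values]

# Hypothetical feature columns on very different scales
ages = [22, 35, 47, 51, 64]
incomes = [28_000, 52_000, 61_000, 75_000, 120_000]

z_ages, z_incomes = standardize(ages), standardize(incomes)
print([round(z, 2) for z in z_ages])
print([round(z, 2) for z in z_incomes])
```

After standardization both features live on a comparable scale, so neither dominates a distance- or gradient-based model just because of its units.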

Here’s a Python implementation that visualizes variance for different distributions:

```python
import numpy as np
import matplotlib.pyplot as plt

# Create three distributions with the same mean but different variances
np.random.seed(42)
n_samples = 10000
mean = 50

# Distribution 1: Low variance
low_var = np.random.normal(mean, 5, n_samples)  # std dev = 5
# Distribution 2: Medium variance
medium_var = np.random.normal(mean, 15, n_samples)  # std dev = 15
# Distribution 3: High variance
high_var = np.random.normal(mean, 30, n_samples)  # std dev = 30

distributions = {
    'Low Variance (σ=5)': low_var,
    'Medium Variance (σ=15)': medium_var,
    'High Variance (σ=30)': high_var,
}

# Plot histograms and calculate statistics for each distribution
fig, axes = plt.subplots(3, 1, figsize=(10, 10))
for idx, (name, data) in enumerate(distributions.items()):
    calculated_mean = np.mean(data)
    calculated_std = np.std(data)
    calculated_var = np.var(data)
    axes[idx].hist(data, bins=50, alpha=0.7, edgecolor='black', density=True)
    axes[idx].axvline(calculated_mean, color='red', linestyle='--', linewidth=2,
                      label=f'Mean = {calculated_mean:.2f}')
    axes[idx].set_title(f'{name}\nVariance = {calculated_var:.2f}, Std Dev = {calculated_std:.2f}')
    axes[idx].set_xlabel('Value')
    axes[idx].set_ylabel('Density')
    axes[idx].legend()
    axes[idx].grid(True, alpha=0.3)
plt.tight_layout()
plt.show()

# Show how variance affects predictions
print("\nVariance in Machine Learning Context:")
print("=" * 50)
for name, data in distributions.items():
    # In a prediction scenario, higher variance means more uncertainty
    sample_predictions = np.random.choice(data, 5)
    print(f"\n{name}")
    print(f"Sample predictions: {sample_predictions}")
    print(f"Range of predictions: {np.max(sample_predictions) - np.min(sample_predictions):.2f}")
```

This visualization clearly shows how variance affects the spread of a distribution. All three distributions are centered at the same mean, but the high-variance distribution has values spread much more widely.

Joint, Marginal, and Conditional Probability: Relationships Between Events

So far, we’ve mostly considered single events or single random variables in isolation. But in practice, we often need to reason about multiple events or variables simultaneously. This is where joint, marginal, and conditional probability come in, and these concepts are absolutely central to machine learning.

Joint probability is the probability that two or more events occur together. We write P(A and B) or P(A, B) for the joint probability of events A and B. For example, what’s the probability that a randomly selected person is both tall and plays basketball? This is a joint probability.

For two independent events (events where one doesn’t affect the probability of the other), the joint probability is simply the product of the individual probabilities:

P(A and B) = P(A) × P(B) [only when A and B are independent]

For instance, the probability of flipping heads on a coin and rolling a six on a die is (1/2) × (1/6) = 1/12, assuming the coin flip and die roll are independent.
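Enumerating the twelve equally likely (coin, die) outcomes confirms the product rule for this example. This is a minimal sketch, assuming a fair coin and a fair die:

```python
from fractions import Fraction
from itertools import product

# Joint sample space of one coin flip and one die roll: 2 * 6 = 12 outcomes,
# all equally likely when the coin and die are fair and independent
outcomes = list(product(["H", "T"], range(1, 7)))

def p(event):
    """Probability that a uniformly chosen (coin, die) outcome satisfies event."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

p_heads = p(lambda o: o[0] == "H")
p_six = p(lambda o: o[1] == 6)
p_both = p(lambda o: o[0] == "H" and o[1] == 6)
print(p_heads, p_six, p_both)  # 1/2 1/6 1/12
assert p_both == p_heads * p_six  # independence: joint = product of marginals
```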

However, many events are not independent, and this is where conditional probability becomes crucial. Conditional probability is the probability of one event occurring given that another event has occurred. We write this as P(A|B), pronounced “the probability of A given B.”

The formal definition of conditional probability is:

P(A|B) = P(A and B) / P(B)

provided that P(B) is greater than zero. This formula captures an intuitive idea: to find the probability of A given that B has occurred, we look at the subset of outcomes where B occurs and ask what fraction of those also include A.

Let’s work through a concrete example. Suppose we have a deck of cards. What’s the probability that a card is an ace given that it’s a spade? There are thirteen spades and only one is an ace, so P(Ace|Spade) = 1/13. We can verify this using the formula:

P(Ace and Spade) = 1/52 (there's one ace of spades)

P(Spade) = 13/52 (thirteen spades total)

P(Ace|Spade) = (1/52) / (13/52) = 1/13 ✓

Rearranging the conditional probability formula gives us the multiplication rule:

P(A and B) = P(A|B) × P(B) = P(B|A) × P(A)

This is extremely useful because it often lets us compute joint probabilities from conditional probabilities, which may be easier to estimate or reason about.
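The card example and the multiplication rule can both be checked by brute force over the deck. In this sketch the deck representation and the helpers `p`, `is_ace`, and `is_spade` are hypothetical. Conditioning is shown two ways: through the formula, and by restricting the sample space to spades.

```python
from fractions import Fraction
from itertools import product

ranks = ["A"] + [str(n) for n in range(2, 11)] + ["J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = list(product(ranks, suits))

def p(event, space=deck):
    """Probability that a uniformly drawn card from `space` satisfies event."""
    return Fraction(sum(1 for c in space if event(c)), len(space))

def is_ace(card):
    return card[0] == "A"

def is_spade(card):
    return card[1] == "spades"

p_ace_and_spade = p(lambda c: is_ace(c) and is_spade(c))
# Definition: P(A|B) = P(A and B) / P(B)
p_ace_given_spade = p_ace_and_spade / p(is_spade)
# Same answer by shrinking the sample space to the 13 spades
spades = [c for c in deck if is_spade(c)]
print(p_ace_given_spade, p(is_ace, spades))  # 1/13 1/13

# Multiplication rule, both orders: P(A and B) = P(A|B)P(B) = P(B|A)P(A)
p_spade_given_ace = p_ace_and_spade / p(is_ace)
assert p_ace_given_spade * p(is_spade) == p_spade_given_ace * p(is_ace) == Fraction(1, 52)
```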

Marginal probability refers to the probability of a single event regardless of the outcomes of other events. If we have a joint distribution over two variables, we can get the marginal probability of one variable by summing (or, for continuous variables, integrating) over all possible values b of the other variable B:

P(A) = Σ_b P(A, B = b) = Σ_b P(A | B = b) × P(B = b)

This process is called marginalization, and it’s how we go from a joint distribution to a distribution over a single variable.

Here’s a practical example that ties these concepts together:

```python
import numpy as np
import pandas as pd

# Create a dataset of students with grades and study hours
np.random.seed(42)
n_students = 1000

# Generate synthetic data: more study hours raise the probability of good grades
study_hours = np.random.choice(['Low', 'Medium', 'High'], n_students,
                               p=[0.3, 0.5, 0.2])
grades = []
for hours in study_hours:
    if hours == 'Low':
        grade = np.random.choice(['A', 'B', 'C'], p=[0.1, 0.3, 0.6])
    elif hours == 'Medium':
        grade = np.random.choice(['A', 'B', 'C'], p=[0.3, 0.5, 0.2])
    else:  # High
        grade = np.random.choice(['A', 'B', 'C'], p=[0.6, 0.3, 0.1])
    grades.append(grade)

# Create DataFrame
df = pd.DataFrame({'StudyHours': study_hours, 'Grade': grades})

# Calculate joint probabilities: each cell count divided by the total count
joint_prob = pd.crosstab(df['StudyHours'], df['Grade'], normalize='all')
print("Joint Probability P(StudyHours, Grade):")
print(joint_prob)
print("\n")

# Calculate marginal probabilities by summing the joint table over the other variable
marginal_study = joint_prob.sum(axis=1)
marginal_grade = joint_prob.sum(axis=0)
print("Marginal Probability P(StudyHours):")
print(marginal_study)
print("\n")
print("Marginal Probability P(Grade):")
print(marginal_grade)
print("\n")

# Calculate conditional probabilities: normalize='index' makes each row sum to 1
conditional_grade_given_study = pd.crosstab(df['StudyHours'], df['Grade'],
                                            normalize='index')
print("Conditional Probability P(Grade|StudyHours):")
print(conditional_grade_given_study)
print("\n")

# Verify the relationship: P(A,B) = P(A|B) × P(B)
print("Verification: Joint = Conditional × Marginal")
print("For StudyHours=High, Grade=A:")
joint_high_a = joint_prob.loc['High', 'A']
conditional_a_given_high = conditional_grade_given_study.loc['High', 'A']
marginal_high = marginal_study['High']
calculated_joint = conditional_a_given_high * marginal_high
print(f"P(High, A) from table: {joint_high_a:.4f}")
print(f"P(A|High) × P(High): {calculated_joint:.4f}")
```

This code demonstrates how joint, marginal, and conditional probabilities relate to each other using a practical dataset. Understanding these relationships is essential for many machine learning algorithms, particularly those based on probabilistic graphical models.

Bayes’ Theorem: The Foundation of Probabilistic Inference

Now we arrive at one of the most important results in all of probability theory and machine learning: Bayes’ theorem. Named after the Reverend Thomas Bayes, this theorem provides a principled way to update our beliefs in light of new evidence. It’s the foundation of Bayesian statistics, Bayesian machine learning, and numerous classification algorithms.

Bayes’ theorem follows directly from the definition of conditional probability. Starting with the multiplication rule, we can write:

P(A and B) = P(A|B) × P(B) = P(B|A) × P(A)

Rearranging this equation gives us Bayes’ theorem:

P(A|B) = [P(B|A) × P(A)] / P(B)

While this formula might look simple, its implications are profound. It tells us how to reverse conditional probabilities. If we know P(B|A), we can calculate P(A|B), provided we also know P(A) and P(B).

In machine learning, we typically write Bayes’ theorem in a slightly different form. Let H represent a hypothesis (like “this email is spam”) and D represent data or evidence (like “the email contains the word ‘discount’”). Then Bayes’ theorem becomes:

P(H|D) = [P(D|H) × P(H)] / P(D)

Each term has a special name and interpretation:

P(H|D) is the posterior probability: the probability of the hypothesis given the data. This is usually what we want to know.

P(D|H) is the likelihood: the probability of observing the data if the hypothesis is true. This measures how well the hypothesis explains the data.

P(H) is the prior probability: our belief about the hypothesis before seeing the data. This encodes our prior knowledge or assumptions.

P(D) is the marginal likelihood or evidence: the overall probability of observing the data. This often serves as a normalizing constant.

The beauty of Bayes’ theorem is that it provides a formal framework for learning from data. We start with a prior belief P(H), observe some data D, and update our belief to get the posterior P(H|D). The likelihood P(D|H) tells us how to weight the evidence.
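The prior-to-posterior update can be written as a short function over a finite set of mutually exclusive hypotheses. This is a sketch. The function name `posterior` is hypothetical, and the spam probabilities in the example are invented purely to illustrate the mechanics.

```python
def posterior(priors, likelihoods):
    """
    Bayes' theorem over a finite set of mutually exclusive, exhaustive hypotheses.
    priors:      {hypothesis: P(H)}
    likelihoods: {hypothesis: P(D|H)} for the observed data D
    """
    unnormalized = {h: likelihoods[h] * priors[h] for h in priors}
    evidence = sum(unnormalized.values())  # P(D), by the law of total probability
    return {h: v / evidence for h, v in unnormalized.items()}

# Hypothetical numbers, for illustration only:
# 20% of mail is spam; "discount" appears in 30% of spam and 2% of non-spam.
prior = {"spam": 0.2, "not spam": 0.8}
likelihood = {"spam": 0.30, "not spam": 0.02}
post = posterior(prior, likelihood)
print({h: round(p, 3) for h, p in post.items()})  # {'spam': 0.789, 'not spam': 0.211}
```

Seeing the word moves the spam probability from the 20% prior to roughly 79%, because the word is fifteen times more likely under the spam hypothesis.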

Let’s work through a classic example: medical diagnosis. Suppose there’s a rare disease that affects 0.1% of the population (P(Disease) = 0.001). There’s a test for this disease that’s 99% accurate. Specifically:

- If you have the disease, the test is positive 99% of the time: P(Positive|Disease) = 0.99

- If you don’t have the disease, the test is negative 99% of the time: P(Negative|No Disease) = 0.99

Now, suppose you test positive. What’s the probability you actually have the disease? Many people’s intuition is that it’s very high, perhaps 99%. But let’s use Bayes’ theorem to find out.

We want P(Disease|Positive). Using Bayes’ theorem:

P(Disease|Positive) = [P(Positive|Disease) × P(Disease)] / P(Positive)

We know P(Positive|Disease) = 0.99 and P(Disease) = 0.001. We need to find P(Positive), which we can calculate using the law of total probability:

P(Positive) = P(Positive|Disease) × P(Disease) + P(Positive|No Disease) × P(No Disease)

= 0.99 × 0.001 + 0.01 × 0.999

= 0.00099 + 0.00999

= 0.01098

Now we can calculate:

P(Disease|Positive) = (0.99 × 0.001) / 0.01098

= 0.00099 / 0.01098

≈ 0.090

Surprisingly, even with a positive test result, the probability you have the disease is only about 9%! This counterintuitive result arises because the disease is so rare. Even though the test is highly accurate, there are many more healthy people than sick people, so most positive tests are false positives.

This example illustrates the power of Bayes’ theorem and the importance of considering base rates (prior probabilities). Here’s a Python implementation:

```python
import numpy as np
import matplotlib.pyplot as plt

def bayes_disease_diagnosis(prior_disease, test_sensitivity, test_specificity):
    """
    Calculate posterior probability of disease given positive test

    Parameters:
    - prior_disease: P(Disease) - prevalence in population
    - test_sensitivity: P(Positive|Disease) - true positive rate
    - test_specificity: P(Negative|No Disease) - true negative rate
    """
    # Calculate P(Positive|No Disease) = 1 - specificity (false positive rate)
    false_positive_rate = 1 - test_specificity
    # Calculate P(Positive) using law of total probability
    prob_positive = (test_sensitivity * prior_disease +
                     false_positive_rate * (1 - prior_disease))
    # Apply Bayes' theorem
    posterior = (test_sensitivity * prior_disease) / prob_positive
    return posterior

# Original example
prior = 0.001
sensitivity = 0.99
specificity = 0.99
posterior = bayes_disease_diagnosis(prior, sensitivity, specificity)
print(f"Prior probability of disease: {prior:.4f} ({prior*100:.2f}%)")
print(f"Test sensitivity: {sensitivity:.4f} ({sensitivity*100:.2f}%)")
print(f"Test specificity: {specificity:.4f} ({specificity*100:.2f}%)")
print(f"Posterior probability given positive test: {posterior:.4f} ({posterior*100:.2f}%)")
print("\n")

# Visualize how posterior changes with different priors.
# np.array is required so "* 100" scales the values instead of repeating a Python list.
priors = np.linspace(0.0001, 0.1, 100)
posteriors = np.array([bayes_disease_diagnosis(p, sensitivity, specificity) for p in priors])
plt.figure(figsize=(10, 6))
plt.plot(priors * 100, posteriors * 100, linewidth=2)
plt.xlabel('Prior Probability of Disease (%)')
plt.ylabel('Posterior Probability Given Positive Test (%)')
plt.title('How Prior Probability Affects Posterior (Test Accuracy = 99%)')
plt.grid(True, alpha=0.3)
plt.axhline(y=50, color='red', linestyle='--', alpha=0.5, label='50% threshold')
plt.legend()
plt.show()

# Simulate the scenario
np.random.seed(42)
n_population = 100000
# Generate population: True = diseased, False = healthy
population = np.random.rand(n_population) < prior
n_diseased = np.sum(population)
n_healthy = n_population - n_diseased
print(f"Simulation with {n_population} people:")
print(f"Number diseased: {n_diseased}")
print(f"Number healthy: {n_healthy}")

# Simulate test results
test_results = np.zeros(n_population, dtype=bool)
# Diseased people test positive with probability equal to the sensitivity
diseased_indices = np.where(population)[0]
test_results[diseased_indices] = np.random.rand(len(diseased_indices)) < sensitivity
# Healthy people test positive (false positive) with probability 1 - specificity
healthy_indices = np.where(~population)[0]
test_results[healthy_indices] = np.random.rand(len(healthy_indices)) < (1 - specificity)

# Calculate empirical posterior: the fraction of positive tests that are true positives
positive_tests = np.sum(test_results)
true_positives = np.sum(population & test_results)
empirical_posterior = true_positives / positive_tests if positive_tests > 0 else 0
print("\nTest Results:")
print(f"Total positive tests: {positive_tests}")
print(f"True positives: {true_positives}")
print(f"False positives: {positive_tests - true_positives}")
print(f"Empirical P(Disease|Positive): {empirical_posterior:.4f} ({empirical_posterior*100:.2f}%)")
print(f"Theoretical P(Disease|Positive): {posterior:.4f} ({posterior*100:.2f}%)")
```

This implementation not only calculates the posterior probability but also simulates the entire scenario to verify our theoretical calculation empirically. Because only about 100 of the 100,000 simulated people have the disease, the empirical value will scatter somewhat around the theoretical 9% depending on the random seed.

The Naive Bayes Classifier: Bayes’ Theorem in Action

One of the most popular applications of Bayes’ theorem in machine learning is the Naive Bayes classifier. Despite its simplicity and the “naive” assumption it makes, this classifier is remarkably effective for many tasks, particularly text classification like spam detection.

The Naive Bayes classifier uses Bayes’ theorem to predict the class of a new example based on its features. Suppose we want to classify an email as spam or not spam based on the words it contains. Using Bayes’ theorem:

P(Spam|Words) = [P(Words|Spam) × P(Spam)] / P(Words)

The “naive” part comes from the simplifying assumption that all features (words) are conditionally independent given the class. This means:

P(Words|Spam) = P(Word₁|Spam) × P(Word₂|Spam) × ... × P(Wordₙ|Spam)

While this independence assumption is rarely true in reality (words in emails are clearly related to each other), it dramatically simplifies the calculations and often works surprisingly well in practice.

Let’s implement a simple Naive Bayes classifier for text:

```python
import numpy as np
from collections import defaultdict


class NaiveBayesClassifier:
    def __init__(self):
        self.class_priors = {}
        self.word_likelihoods = {}
        self.vocabulary = set()

    def train(self, documents, labels):
        """
        Train the Naive Bayes classifier.

        documents: list of documents (each document is a list of words)
        labels: list of class labels for each document
        """
        # Count documents per class, word occurrences per class,
        # and the total number of words per class
        class_counts = defaultdict(int)
        word_counts = defaultdict(lambda: defaultdict(int))
        class_word_totals = defaultdict(int)

        for doc, label in zip(documents, labels):
            class_counts[label] += 1
            for word in doc:
                self.vocabulary.add(word)
                word_counts[label][word] += 1
                class_word_totals[label] += 1

        # Calculate prior probabilities P(Class)
        total_docs = len(documents)
        for label in class_counts:
            self.class_priors[label] = class_counts[label] / total_docs

        # Calculate likelihoods P(Word|Class) with Laplace smoothing
        vocab_size = len(self.vocabulary)
        self.word_likelihoods = {}
        for label in class_counts:
            self.word_likelihoods[label] = {}
            for word in self.vocabulary:
                # Laplace smoothing: add 1 to numerator and vocab_size to denominator
                count = word_counts[label][word]
                self.word_likelihoods[label][word] = (count + 1) / (class_word_totals[label] + vocab_size)

    def predict(self, document):
        """
        Predict the class of a document.

        Returns: (predicted_class, class_probabilities)
        """
        class_scores = {}
        for label in self.class_priors:
            # Start with log prior to avoid numerical underflow
            score = np.log(self.class_priors[label])
            # Add log likelihoods for each word; words outside the training vocabulary are skipped
            for word in document:
                if word in self.vocabulary:
                    score += np.log(self.word_likelihoods[label][word])
            class_scores[label] = score

        # Convert log scores to probabilities (subtract the max first for numerical stability)
        max_score = max(class_scores.values())
        exp_scores = {label: np.exp(score - max_score) for label, score in class_scores.items()}
        total = sum(exp_scores.values())
        probabilities = {label: exp_score / total for label, exp_score in exp_scores.items()}

        predicted_class = max(class_scores, key=class_scores.get)
        return predicted_class, probabilities


# Example: Spam detection
# Training data
spam_emails = [
    ['buy', 'cheap', 'watches', 'now'],
    ['discount', 'viagra', 'online'],
    ['win', 'free', 'money', 'now'],
    ['cheap', 'discount', 'offer'],
]

ham_emails = [
    ['meeting', 'scheduled', 'tomorrow'],
    ['project', 'deadline', 'next', 'week'],
    ['lunch', 'plans', 'today'],
    ['please', 'review', 'document'],
]

# Prepare training data
documents = spam_emails + ham_emails
labels = ['spam'] * len(spam_emails) + ['ham'] * len(ham_emails)

# Train classifier
nb = NaiveBayesClassifier()
nb.train(documents, labels)

print("Training Complete!")
print(f"Class priors: {nb.class_priors}")
print(f"Vocabulary size: {len(nb.vocabulary)}\n")

# Test on new emails
test_emails = [
    ['cheap', 'watches', 'discount'],     # Should be spam
    ['meeting', 'tomorrow', 'please'],    # Should be ham
    ['free', 'money', 'offer'],           # Should be spam
    ['review', 'project', 'deadline'],    # Should be ham
]

print("Predictions:")
print("=" * 60)
for email in test_emails:
    predicted_class, probabilities = nb.predict(email)
    print(f"Email: {' '.join(email)}")
    print(f"Predicted: {predicted_class}")
    print(f"Probabilities: {probabilities}")
    print("-" * 60)
```

This implementation shows how Bayes’ theorem becomes a practical classifier. Three details matter. We work with logarithms, because multiplying many small probabilities quickly underflows to zero. We apply Laplace (add-one) smoothing, so a word that never appeared in one class’s training emails does not force that class’s probability to zero. Words missing from the training vocabulary altogether are simply skipped at prediction time. Despite the naive independence assumption, the classifier can still make accurate predictions.
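To see why the classifier works in log space, here is a small standalone sketch that uses only Python’s standard library. The per-word likelihood of 1e-4 and the 500-word document are hypothetical values chosen for illustration. The helper `normalize_log_scores` is likewise hypothetical. It mirrors the subtract-the-maximum step in `predict` above.

```python
import math

# Hypothetical setting: a 500-word document where every word has likelihood 1e-4
likelihoods = [1e-4] * 500

# Multiplying directly underflows: the true value, 1e-2000, is far below
# the smallest positive double (about 5e-324), so the result becomes 0.0
product = 1.0
for p in likelihoods:
    product *= p

# Summing logs keeps the same information in a representable range
log_product = sum(math.log(p) for p in likelihoods)

print(product)      # 0.0
print(log_product)  # approximately -4605.17


def normalize_log_scores(log_scores):
    """Turn per-class log scores into probabilities that sum to 1.

    Subtracting the largest score before exponentiating keeps at least one
    term equal to exp(0) = 1, so the normalizing sum cannot underflow to zero.
    """
    max_score = max(log_scores.values())
    shifted = {label: math.exp(s - max_score) for label, s in log_scores.items()}
    total = sum(shifted.values())
    return {label: v / total for label, v in shifted.items()}


# Hypothetical log scores for two classes
scores = {"spam": -4605.0, "ham": -4610.0}
print(math.exp(scores["spam"]))       # 0.0: naive exponentiation underflows
print(normalize_log_scores(scores))   # spam ≈ 0.9933, ham ≈ 0.0067
```

The shift by the maximum does not change the final probabilities, because it multiplies every class’s unnormalized score by the same constant.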

Common Probability Distributions in Machine Learning

Probability distributions describe how probability is distributed over different values of a random variable. Different distributions are suited to different types of data and problems, and understanding common distributions is essential for machine learning.

Bernoulli Distribution

The Bernoulli distribution models a single binary outcome, like a coin flip. It has one parameter p, the probability of success:

P(X = 1) = p

P(X = 0) = 1 - p

Expected value: E[X] = p. Variance: Var(X) = p(1 - p).
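One quick way to check these formulas is to draw many Bernoulli samples and compare the sample mean and variance with p and p(1 - p). This sketch assumes NumPy’s `Generator` API (`np.random.default_rng`). The value p = 0.7 and the number of draws are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)
p = 0.7
n_draws = 100_000

# Each draw is 1 with probability p and 0 otherwise
draws = (rng.random(n_draws) < p).astype(float)

sample_mean = draws.mean()
sample_var = draws.var()

print(f"Sample mean: {sample_mean:.4f} (theory: {p})")
print(f"Sample variance: {sample_var:.4f} (theory: {p * (1 - p):.4f})")
```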

In machine learning, Bernoulli distributions model binary classification problems where each instance is either class 0 or class 1.

Binomial Distribution

The binomial distribution describes the number of successes in n independent Bernoulli trials, each with probability p:

P(X = k) = C(n,k) × p^k × (1-p)^(n-k)

where C(n,k) is the binomial coefficient “n choose k”.

Expected value: E[X] = np. Variance: Var(X) = np(1 - p).

This distribution is useful when you’re counting binary outcomes, like the number of spam emails in a batch of n emails, provided each email is spam independently with the same probability p.
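The formula translates directly into code. This sketch uses only the standard library’s `math.comb` (Python 3.8+). The batch of n = 10 emails and the spam rate p = 0.3 are hypothetical numbers. The code also confirms numerically that the probabilities sum to 1 and that the mean and variance match np and np(1 - p).

```python
from math import comb


def binomial_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p): C(n, k) * p^k * (1 - p)^(n - k)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


n, p = 10, 0.3  # hypothetical: 10 emails, each spam with probability 0.3
pmf = [binomial_pmf(k, n, p) for k in range(n + 1)]

total = sum(pmf)
mean = sum(k * prob for k, prob in enumerate(pmf))
variance = sum((k - mean) ** 2 * prob for k, prob in enumerate(pmf))

print(f"P(exactly 3 spam) = {pmf[3]:.4f}")      # 0.2668
print(f"Sum of probabilities = {total:.4f}")    # 1.0000
print(f"Mean = {mean:.4f} (np = {n * p:.4f})")
print(f"Variance = {variance:.4f} (np(1-p) = {n * p * (1 - p):.4f})")
```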

Normal (Gaussian) Distribution

The normal distribution is perhaps the most important distribution in statistics and machine learning. It’s characterized by two parameters: mean μ and variance σ². The probability density function is:

f(x) = (1 / (σ√(2π))) × exp(-(x - μ)² / (2σ²))

The normal distribution has many nice properties. It’s symmetric around the mean, and about 68% of values fall within one standard deviation of the mean, 95% within two standard deviations, and 99.7% within three standard deviations.
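You can cross-check the density formula and the 68-95-99.7 rule with nothing but Python’s standard library. For any normal distribution, the probability of landing within k standard deviations of the mean is erf(k/√2), computed here with `math.erf`. The sketch below compares that exact value with a trapezoidal-rule integral of f(x). The parameters μ = 5 and σ = 2 are arbitrary. They are chosen to show that the result does not depend on the parameters.

```python
import math


def normal_pdf(x, mu, sigma):
    """Normal density: (1 / (sigma * sqrt(2*pi))) * exp(-(x - mu)^2 / (2 * sigma^2))."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma**2)) / (sigma * math.sqrt(2 * math.pi))


def prob_within_k_sigma_numeric(k, mu, sigma, steps=10_000):
    """Trapezoidal-rule integral of the density over [mu - k*sigma, mu + k*sigma]."""
    a, b = mu - k * sigma, mu + k * sigma
    h = (b - a) / steps
    total = 0.5 * (normal_pdf(a, mu, sigma) + normal_pdf(b, mu, sigma))
    total += sum(normal_pdf(a + i * h, mu, sigma) for i in range(1, steps))
    return total * h


def prob_within_k_sigma_exact(k):
    """P(|X - mu| < k * sigma) for any normal X, via the error function."""
    return math.erf(k / math.sqrt(2))


mu, sigma = 5.0, 2.0  # arbitrary parameters
for k in (1, 2, 3):
    numeric = prob_within_k_sigma_numeric(k, mu, sigma)
    exact = prob_within_k_sigma_exact(k)
    print(f"Within {k} std dev(s): numeric = {numeric:.4f}, exact = {exact:.4f}")
# Within 1 std dev(s): numeric = 0.6827, exact = 0.6827
# Within 2 std dev(s): numeric = 0.9545, exact = 0.9545
# Within 3 std dev(s): numeric = 0.9973, exact = 0.9973
```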

Many machine learning algorithms assume that data or errors follow a normal distribution. The central limit theorem tells us that sums of many independent random variables with finite variance tend toward a normal distribution. This partially explains why normal distributions appear so frequently in nature and data.

Multinomial Distribution

The multinomial distribution generalizes the binomial distribution to more than two categories. If you have k categories and conduct n trials, where each trial results in category i with probability pᵢ, the multinomial distribution gives the probability of getting counts n₁, n₂, …, nₖ:

P(n₁, n₂, ..., nₖ) = (n! / (n₁! × n₂! × ... × nₖ!)) × p₁^n₁ × p₂^n₂ × ... × pₖ^nₖ

This distribution is used in multiclass classification problems and in modeling word occurrences in documents.
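The multinomial formula can be implemented in the same way. This sketch uses only the standard library (`math.prod` needs Python 3.8+). The fair-die example is a hypothetical illustration: the probability of seeing each face exactly once in six rolls is 6!/6⁶. The final check confirms that with two categories the formula reduces to the binomial probability.

```python
from math import comb, factorial, isclose, prod


def multinomial_pmf(counts, probs):
    """P(N1 = n1, ..., Nk = nk) for n = sum(counts) trials with category probabilities probs."""
    n = sum(counts)
    # Multinomial coefficient n! / (n1! * n2! * ... * nk!), kept as an exact integer
    coefficient = factorial(n)
    for c in counts:
        coefficient //= factorial(c)
    return coefficient * prod(p**c for p, c in zip(probs, counts))


# Fair six-sided die rolled 6 times: each face appears exactly once
die_probs = [1 / 6] * 6
p_each_once = multinomial_pmf([1] * 6, die_probs)
print(f"P(each face once) = {p_each_once:.6f}")  # 720 / 46656 ≈ 0.015432

# With two categories the multinomial reduces to the binomial
print(isclose(multinomial_pmf([3, 7], [0.3, 0.7]), comb(10, 3) * 0.3**3 * 0.7**7))  # True
```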

Let’s visualize several important distributions:

```python
import numpy as np
import matplotlib.pyplot as plt
from scipy import stats

fig, axes = plt.subplots(2, 3, figsize=(15, 10))

# Bernoulli Distribution
p = 0.7
x_bernoulli = [0, 1]
prob_bernoulli = [1-p, p]
axes[0, 0].bar(x_bernoulli, prob_bernoulli, width=0.3, color='skyblue', edgecolor='black')
axes[0, 0].set_title(f'Bernoulli Distribution (p={p})')
axes[0, 0].set_xlabel('Value')
axes[0, 0].set_ylabel('Probability')
axes[0, 0].set_xticks([0, 1])

# Binomial Distribution
n_trials = 10
p_success = 0.5
x_binomial = np.arange(0, n_trials + 1)
prob_binomial = stats.binom.pmf(x_binomial, n_trials, p_success)
axes[0, 1].bar(x_binomial, prob_binomial, color='lightgreen', edgecolor='black')
axes[0, 1].set_title(f'Binomial Distribution (n={n_trials}, p={p_success})')
axes[0, 1].set_xlabel('Number of Successes')
axes[0, 1].set_ylabel('Probability')

# Normal Distribution
mu = 0
sigma = 1
x_normal = np.linspace(-4, 4, 1000)
prob_normal = stats.norm.pdf(x_normal, mu, sigma)
axes[0, 2].plot(x_normal, prob_normal, linewidth=2, color='coral')
axes[0, 2].fill_between(x_normal, prob_normal, alpha=0.3, color='coral')
axes[0, 2].set_title(f'Normal Distribution (μ={mu}, σ={sigma})')
axes[0, 2].set_xlabel('Value')
axes[0, 2].set_ylabel('Probability Density')
axes[0, 2].grid(True, alpha=0.3)

# Poisson Distribution
lambda_param = 3
x_poisson = np.arange(0, 15)
prob_poisson = stats.poisson.pmf(x_poisson, lambda_param)
axes[1, 0].bar(x_poisson, prob_poisson, color='plum', edgecolor='black')
axes[1, 0].set_title(f'Poisson Distribution (λ={lambda_param})')
axes[1, 0].set_xlabel('Number of Events')
axes[1, 0].set_ylabel('Probability')

# Exponential Distribution (scipy parameterizes it by scale = 1/λ)
lambda_exp = 1
x_exp = np.linspace(0, 5, 1000)
prob_exp = stats.expon.pdf(x_exp, scale=1/lambda_exp)
axes[1, 1].plot(x_exp, prob_exp, linewidth=2, color='gold')
axes[1, 1].fill_between(x_exp, prob_exp, alpha=0.3, color='gold')
axes[1, 1].set_title(f'Exponential Distribution (λ={lambda_exp})')
axes[1, 1].set_xlabel('Value')
axes[1, 1].set_ylabel('Probability Density')
axes[1, 1].grid(True, alpha=0.3)

# Uniform Distribution (scipy uses loc = a and scale = b - a)
a, b = 0, 10
x_uniform = np.linspace(a - 1, b + 1, 1000)
prob_uniform = stats.uniform.pdf(x_uniform, a, b - a)
axes[1, 2].plot(x_uniform, prob_uniform, linewidth=2, color='lightblue')
axes[1, 2].fill_between(x_uniform, prob_uniform, alpha=0.3, color='lightblue')
axes[1, 2].set_title(f'Uniform Distribution (a={a}, b={b})')
axes[1, 2].set_xlabel('Value')
axes[1, 2].set_ylabel('Probability Density')
axes[1, 2].grid(True, alpha=0.3)

plt.tight_layout()
plt.show()

# Print key statistics for each distribution
print("Distribution Statistics:")
print("=" * 60)
print(f"Bernoulli (p={p}): Mean = {p}, Variance = {p*(1-p):.4f}")
print(f"Binomial (n={n_trials}, p={p_success}): Mean = {n_trials*p_success}, Variance = {n_trials*p_success*(1-p_success):.4f}")
print(f"Normal (μ={mu}, σ={sigma}): Mean = {mu}, Variance = {sigma**2}")
print(f"Poisson (λ={lambda_param}): Mean = {lambda_param}, Variance = {lambda_param}")
print(f"Exponential (λ={lambda_exp}): Mean = {1/lambda_exp}, Variance = {1/lambda_exp**2}")
print(f"Uniform (a={a}, b={b}): Mean = {(a+b)/2}, Variance = {(b-a)**2/12:.4f}")
```

Understanding these distributions helps you choose appropriate models for your data, make correct assumptions in your algorithms, and properly interpret results.

The Law of Large Numbers and Central Limit Theorem

Two fundamental theorems connect probability theory to statistics and practical data analysis: the law of large numbers and the central limit theorem.

The Law of Large Numbers states that as you collect more independent samples, the sample average converges to the expected value. If you flip a fair coin ten times, you might get seven heads (70%). If you flip it ten thousand times, the proportion of heads will almost certainly be very close to 50%. This theorem justifies using sample means to estimate population means.

The Central Limit Theorem is even more remarkable. It states that the sum (or average) of many independent, identically distributed random variables tends toward a normal distribution regardless of the original distribution, provided that distribution has a finite variance. Even if you’re summing variables from a highly skewed or unusual distribution, their sum will be approximately normal if you have enough of them.

This theorem is profound because it helps explain why normal distributions appear so often. Many real-world quantities are the sum of many small independent effects, and the central limit theorem tells us these sums will be approximately normally distributed.

Let’s demonstrate both theorems with code:

```python
import numpy as np
import matplotlib.pyplot as plt
from scipy import stats  # needed for stats.norm.pdf below

# Demonstrate Law of Large Numbers
np.random.seed(42)

# Theoretical expected value for a fair die is 3.5
true_mean = 3.5

# Simulate rolling a die many times
n_rolls_max = 10000
rolls = np.random.randint(1, 7, size=n_rolls_max)

# Calculate running average
cumulative_mean = np.cumsum(rolls) / np.arange(1, n_rolls_max + 1)

# Plot the running average against the true mean
fig, ax = plt.subplots(figsize=(10, 5))
ax.plot(cumulative_mean, linewidth=1)
ax.axhline(y=true_mean, color='red', linestyle='--', linewidth=2, label='True Mean (3.5)')
ax.set_xlabel('Number of Rolls')
ax.set_ylabel('Running Average')
ax.set_title('Law of Large Numbers: Die Rolling')
ax.legend()
ax.grid(True, alpha=0.3)

# Demonstrate Central Limit Theorem
# Start with a uniform distribution (decidedly non-normal) and
# show that means of samples approach a normal distribution
sample_sizes = [1, 2, 5, 30]
fig2, axes2 = plt.subplots(2, 2, figsize=(12, 10))
axes2 = axes2.ravel()

for idx, n in enumerate(sample_sizes):
    # Generate many samples of size n and compute their means
    sample_means = []
    for _ in range(10000):
        sample = np.random.uniform(0, 1, n)
        sample_means.append(np.mean(sample))

    # Plot histogram of sample means
    axes2[idx].hist(sample_means, bins=50, density=True, alpha=0.7,
                    edgecolor='black', color='skyblue')

    # Overlay theoretical normal distribution
    mean_theory = 0.5  # mean of uniform(0,1)
    std_theory = np.sqrt(1/12 / n)  # std of mean of n uniform(0,1) variables
    x = np.linspace(min(sample_means), max(sample_means), 100)
    axes2[idx].plot(x, stats.norm.pdf(x, mean_theory, std_theory),
                    'r-', linewidth=2, label='Theoretical Normal')
    axes2[idx].set_title(f'Sample Size n = {n}')
    axes2[idx].set_xlabel('Sample Mean')
    axes2[idx].set_ylabel('Density')
    axes2[idx].legend()
    axes2[idx].grid(True, alpha=0.3)

plt.tight_layout()
plt.show()

# Print statistics
print("Central Limit Theorem Demonstration")
print("=" * 60)
print("Original distribution: Uniform(0, 1)")
print("Theoretical mean: 0.5, Theoretical variance: 1/12 ≈ 0.0833")
print("\nSample means distribution:")
for n in sample_sizes:
    sample_means = [np.mean(np.random.uniform(0, 1, n)) for _ in range(10000)]
    print(f" n={n:2d}: Mean={np.mean(sample_means):.4f}, "
          f"Std={np.std(sample_means):.4f}, "
          f"Theoretical Std={np.sqrt(1/12/n):.4f}")
```

These demonstrations show how probability theory connects to practical data analysis. The law of large numbers assures us that we can estimate probabilities and expectations from data, while the central limit theorem explains why normal distributions are so ubiquitous and why many statistical methods assume normality.
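Both theorems rest on assumptions that are easy to overlook. The law of large numbers needs a finite expected value, and the central limit theorem also needs a finite variance. The standard Cauchy distribution has neither. The mean of n independent standard Cauchy draws is itself standard Cauchy, so averaging more samples does not tighten its spread. The sketch below compares the interquartile range (IQR) of sample means for uniform and Cauchy data. It assumes NumPy’s `Generator` API, and the sample sizes and repeat count are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(1)
n_repeats = 2000  # number of independent sample means per sample size


def iqr_of_sample_means(sampler, n):
    """Interquartile range of n_repeats sample means, each averaging n draws from sampler."""
    means = sampler((n_repeats, n)).mean(axis=1)
    q25, q75 = np.percentile(means, [25, 75])
    return q75 - q25


results = {}
for n in (10, 100, 1000):
    results[n] = {
        "uniform": iqr_of_sample_means(lambda size: rng.uniform(0, 1, size), n),
        "cauchy": iqr_of_sample_means(rng.standard_cauchy, n),
    }
    print(f"n={n:4d}: uniform IQR = {results[n]['uniform']:.4f}, "
          f"Cauchy IQR = {results[n]['cauchy']:.4f}")
```

With finite variance, the uniform IQR shrinks roughly like 1/√n. The Cauchy IQR stays near 2, which is the IQR of a standard Cauchy distribution, no matter how many draws are averaged.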

Maximum Likelihood Estimation: Learning from Data

One of the most important applications of probability theory in machine learning is maximum likelihood estimation (MLE). This is a method for estimating the parameters of a probability distribution based on observed data.

The core idea is simple: given some data, we want to find the parameters that make the observed data most probable. If we have a dataset and we’re assuming it comes from some distribution with unknown parameters, MLE finds the parameter values that maximize the likelihood of observing that particular dataset.

Mathematically, if we have data points x₁, x₂, …, xₙ and a probability distribution with parameter θ, the likelihood function is:

L(θ) = P(x₁, x₂, ..., xₙ | θ) = P(x₁|θ) × P(x₂|θ) × ... × P(xₙ|θ)

(assuming the data points are independent and identically distributed given θ). The MLE estimate is:

θ_MLE = argmax L(θ)

In practice, we usually work with the log-likelihood because it turns products into sums, making calculations easier:

log L(θ) = log P(x₁|θ) + log P(x₂|θ) + ... + log P(xₙ|θ)

Let’s work through a concrete example: estimating the probability of heads for a biased coin. Suppose we flip a coin 100 times and get 60 heads. What’s our MLE estimate for p, the probability of heads?

The likelihood is:

L(p) = p^60 × (1-p)^40

To maximize this, we could differentiate L(p) directly, but it is easier to maximize the log-likelihood instead. It has the same maximizer because the logarithm is strictly increasing:

log L(p) = 60 log(p) + 40 log(1-p)

Taking the derivative:

d/dp log L(p) = 60/p - 40/(1-p)

Setting this to zero and solving:

60/p = 40/(1-p)

60(1-p) = 40p

60 - 60p = 40p

60 = 100p

p = 0.6

The MLE estimate is simply the proportion of heads in our sample, which makes intuitive sense!
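You can double-check the calculus numerically. Evaluate the log-likelihood on a fine grid of candidate values for p and pick the largest. This NumPy sketch uses the 60-heads-in-100-flips example above. The grid spacing of 0.001 is an arbitrary choice.

```python
import numpy as np

heads, flips = 60, 100

# Candidate values of p, avoiding 0 and 1 where log(p) or log(1 - p) is -inf
p_grid = np.linspace(0.001, 0.999, 999)

# log L(p) = heads * log(p) + tails * log(1 - p)
log_likelihood = heads * np.log(p_grid) + (flips - heads) * np.log(1 - p_grid)

p_hat = p_grid[np.argmax(log_likelihood)]
print(f"Grid-search MLE: p = {p_hat:.3f}")                # 0.600
print(f"Closed form heads / flips = {heads / flips:.3f}")
```

More generally, for k heads in n flips the same derivation gives p = k/n, and the grid search should agree up to the grid spacing.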

Here’s a more sophisticated implementation showing MLE for the normal distribution:

```python
import numpy as np
import matplotlib.pyplot as plt
from scipy import stats
from scipy.optimize import minimize


def negative_log_likelihood_normal(params, data):
    """
    Negative log-likelihood for a normal distribution.
    We minimize this to find the MLE.
    """
    mu, sigma = params
    if sigma <= 0:  # sigma must be positive
        return np.inf
    n = len(data)
    nll = n/2 * np.log(2 * np.pi * sigma**2) + np.sum((data - mu)**2) / (2 * sigma**2)
    return nll


# Generate synthetic data from a known distribution
np.random.seed(42)
true_mu = 5.0
true_sigma = 2.0
n_samples = 1000
data = np.random.normal(true_mu, true_sigma, n_samples)

# Method 1: Analytical MLE (for the normal distribution, the MLE has a closed form)
mu_mle = np.mean(data)
sigma_mle = np.std(data, ddof=0)  # MLE uses n in the denominator, not n-1

print("Maximum Likelihood Estimation for Normal Distribution")
print("=" * 60)
print(f"True parameters: μ = {true_mu}, σ = {true_sigma}")
print(f"MLE estimates: μ = {mu_mle:.4f}, σ = {sigma_mle:.4f}")
print(f"Sample mean: {np.mean(data):.4f}")
print(f"Sample std (n-1): {np.std(data, ddof=1):.4f}")
print(f"Sample std (n): {np.std(data, ddof=0):.4f}")
print("\n")

# Method 2: Numerical optimization
# Initial guess
initial_params = [0, 1]

# Minimize negative log-likelihood
result = minimize(negative_log_likelihood_normal, initial_params, args=(data,),
                  method='Nelder-Mead')

print("Numerical Optimization Results:")
print(f"MLE estimates: μ = {result.x[0]:.4f}, σ = {result.x[1]:.4f}")
print(f"Negative log-likelihood: {result.fun:.4f}")
print("\n")

# Visualize the likelihood surface
mu_range = np.linspace(4, 6, 50)
sigma_range = np.linspace(1.5, 2.5, 50)
MU, SIGMA = np.meshgrid(mu_range, sigma_range)

# Calculate log-likelihood for each combination
log_likelihood = np.zeros_like(MU)
for i in range(len(mu_range)):
    for j in range(len(sigma_range)):
        log_likelihood[j, i] = -negative_log_likelihood_normal(
            [MU[j, i], SIGMA[j, i]], data)

fig = plt.figure(figsize=(14, 5))

# Contour plot
ax1 = fig.add_subplot(121)
contour = ax1.contour(MU, SIGMA, log_likelihood, levels=20)
ax1.clabel(contour, inline=True, fontsize=8)
ax1.plot(true_mu, true_sigma, 'r*', markersize=15, label='True parameters')
ax1.plot(mu_mle, sigma_mle, 'go', markersize=10, label='MLE estimates')
ax1.set_xlabel('μ')
ax1.set_ylabel('σ')
ax1.set_title('Log-Likelihood Surface')
ax1.legend()
ax1.grid(True, alpha=0.3)

# Fitted distribution vs data
ax2 = fig.add_subplot(122)
ax2.hist(data, bins=30, density=True, alpha=0.7, edgecolor='black', label='Data')
x = np.linspace(data.min(), data.max(), 100)
ax2.plot(x, stats.norm.pdf(x, true_mu, true_sigma), 'r-', linewidth=2,
         label=f'True distribution (μ={true_mu}, σ={true_sigma})')
ax2.plot(x, stats.norm.pdf(x, mu_mle, sigma_mle), 'g--', linewidth=2,
         label=f'MLE fit (μ={mu_mle:.2f}, σ={sigma_mle:.2f})')
ax2.set_xlabel('Value')
ax2.set_ylabel('Density')
ax2.set_title('Data and Fitted Distributions')
ax2.legend()
ax2.grid(True, alpha=0.3)

plt.tight_layout()
plt.show()
```

Maximum likelihood estimation is fundamental to many machine learning algorithms. Linear regression, logistic regression, and neural networks are commonly trained with MLE or a variation such as MAP (maximum a posteriori) estimation. For linear regression, minimizing squared error is equivalent to MLE under Gaussian noise.
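To make the link between MLE and linear regression concrete, the sketch below fits a line to synthetic data twice. The first fit uses ordinary least squares (`np.linalg.lstsq`). The second numerically minimizes a Gaussian negative log-likelihood with `scipy.optimize.minimize`. The true slope, intercept, and noise level are arbitrary assumptions. For any fixed σ, maximizing the Gaussian log-likelihood over the slope and intercept is the same as minimizing the sum of squared errors, so the two fits should agree.

```python
import numpy as np
from scipy.optimize import minimize

rng = np.random.default_rng(0)

# Synthetic data (assumed values): y = 2x + 1 plus Gaussian noise with std 0.5
x = rng.uniform(-3, 3, 200)
y = 2.0 * x + 1.0 + rng.normal(0, 0.5, 200)

# Ordinary least squares on the design matrix [x, 1]
X = np.column_stack([x, np.ones_like(x)])
(slope_ls, intercept_ls), *_ = np.linalg.lstsq(X, y, rcond=None)


def gaussian_nll(params):
    """Negative log-likelihood of y under y ~ Normal(slope * x + intercept, sigma^2)."""
    slope, intercept, log_sigma = params
    sigma = np.exp(log_sigma)  # optimizing log(sigma) keeps sigma positive
    residuals = y - (slope * x + intercept)
    return 0.5 * len(y) * np.log(2 * np.pi * sigma**2) + np.sum(residuals**2) / (2 * sigma**2)


# Start from a flat line and a noise level on the scale of the data
result = minimize(gaussian_nll, x0=[0.0, 0.0, np.log(np.std(y))])
slope_mle, intercept_mle, log_sigma_mle = result.x

print(f"Least squares: slope = {slope_ls:.4f}, intercept = {intercept_ls:.4f}")
print(f"Gaussian MLE:  slope = {slope_mle:.4f}, intercept = {intercept_mle:.4f}")
print(f"Estimated noise std: {np.exp(log_sigma_mle):.4f}")
```

Optimizing log σ rather than σ avoids the explicit positivity check used in `negative_log_likelihood_normal` above. This is a design choice, and either approach works.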

Conclusion: The Probabilistic Foundation of Machine Learning

We’ve journeyed through the fundamental concepts of probability theory, building from basic definitions to sophisticated applications in machine learning. Let’s reflect on why probability is so central to artificial intelligence and data science.

Probability theory provides the mathematical framework for reasoning under uncertainty. In the real world, we rarely have perfect information. Data is noisy, observations are incomplete, and the future is uncertain. Probability gives us rigorous tools to quantify this uncertainty, make optimal decisions despite it, and learn from imperfect data.

We started with the basic question “what is probability?” and saw how it quantifies likelihood through numbers between zero and one. We learned the fundamental axioms that form the foundation of all probability theory and saw how simple rules like the complement rule and addition rule follow from these axioms.

We explored random variables, which connect probabilistic events to numerical values, allowing us to apply powerful mathematical tools. We learned about expected value and variance, measures that characterize the center and spread of distributions. These concepts appear everywhere in machine learning, from loss functions to evaluation metrics.

The exploration of joint, marginal, and conditional probability revealed how we can reason about relationships between multiple variables. This led us to Bayes’ theorem, perhaps the most important result in probability for machine learning. Bayes’ theorem provides a principled way to update beliefs based on evidence, and it underlies Bayesian methods, Naive Bayes classifiers, and probabilistic graphical models.

We encountered important probability distributions like the Bernoulli, binomial, and normal distributions. Understanding these distributions helps us choose appropriate models for our data and make valid assumptions in our algorithms. The central limit theorem explained why normal distributions appear so frequently and justified many statistical methods.

Finally, we saw how maximum likelihood estimation connects probability to learning. MLE provides a systematic way to estimate parameters from data by finding values that make the observed data most probable. This principle underlies the training of countless machine learning models.

As you continue your journey in machine learning, you’ll see probability theory appearing again and again. When you train a neural network, you’re typically maximizing likelihood. When you evaluate a classifier, you’re estimating probabilities of correct predictions. When you use regularization, you’re often implicitly assuming a prior distribution over parameters. When you quantify prediction uncertainty, you’re using probability distributions.

The beauty of probability theory is that it provides a unified, coherent framework for all of this. Rather than treating each algorithm or technique as disconnected, probability theory reveals the common principles underlying machine learning. Understanding these probabilistic foundations doesn’t just help you use existing tools better; it enables you to design new methods, understand why techniques work, and develop intuition about when they’ll succeed or fail.

Probability thinking is a skill that develops over time. At first, working with probabilities might feel abstract or counterintuitive. But as you apply these concepts to real problems, solve exercises, and implement algorithms, the ideas will become more natural. You’ll develop the ability to think probabilistically about problems, to recognize when events are independent or dependent, to apply Bayes’ theorem instinctively, and to choose appropriate distributions for your data.

Remember that probability theory is not just mathematics for its own sake. It’s a practical tool for building intelligent systems that can learn from data and make decisions under uncertainty. Every time you see a machine learning algorithm making predictions with confidence scores, classifying emails as spam with probability estimates, or learning from examples to improve its performance, you’re seeing probability theory in action.

The concepts we’ve covered here form the essential foundation, but they’re just the beginning. As you advance in machine learning, you’ll encounter more sophisticated probabilistic methods: Bayesian networks for representing complex dependencies, Markov chains for modeling sequences, probabilistic programming for expressing and inferring complex models, and advanced inference techniques for learning from data. All of these build on the fundamental probability concepts we’ve explored together.

Keep practicing, keep exploring, and most importantly, keep thinking probabilistically about the world around you. The ability to reason rigorously about uncertainty is not just a machine learning skill but a fundamental tool for understanding our uncertain world.