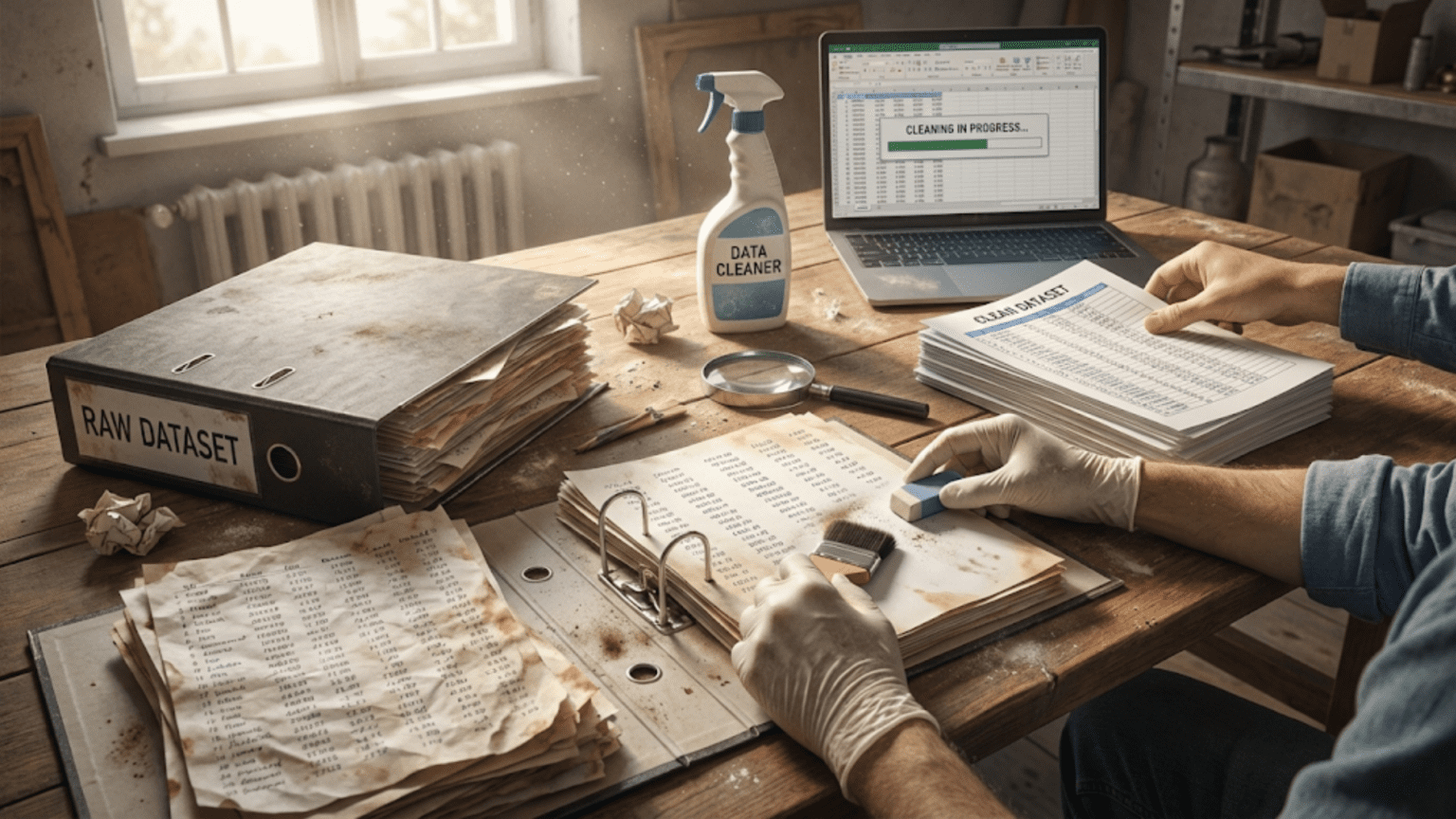

Introduction: Why Data Cleaning Determines Model Success

The most common mistake aspiring machine learning practitioners make is rushing to build models without properly cleaning their data. They download a dataset, import it into Python, immediately start training algorithms, and wonder why results are poor. The truth is uncomfortable but essential: your model’s performance depends more on data quality than algorithm sophistication. Garbage in, garbage out isn’t just a saying—it’s a fundamental law of machine learning.

Professional data scientists spend 60-80% of their time on data preparation, with data cleaning being a significant portion of that work. This time investment isn’t inefficiency; it’s necessity. Real-world data is messy. Values are missing. Duplicates exist. Types are wrong. Formats vary. Outliers skew distributions. Encoding is inconsistent. Categories are misspelled. These issues aren’t occasional annoyances—they’re the norm. Clean datasets ready for analysis are rare exceptions, typically created by previous data cleaning efforts.

Data cleaning transforms raw, messy data into reliable input for machine learning algorithms. It involves detecting and correcting errors, handling missing information, standardizing formats, removing duplicates, and ensuring consistency. Done well, data cleaning prevents model training failures, improves prediction accuracy, reduces bias from data quality issues, and enables meaningful analysis. Done poorly or skipped entirely, even the most sophisticated algorithms will fail.

Understanding why data becomes dirty helps you clean it effectively. Data comes from multiple sources with different standards. Human data entry introduces errors and inconsistencies. Systems fail or malfunction, creating gaps. Formats change over time within the same source. Integration from multiple systems creates duplicates. Measurement errors introduce noise and outliers. Each of these mechanisms creates specific data quality problems requiring specific cleaning approaches.

This comprehensive guide will teach you systematic data cleaning for machine learning projects. We’ll start by understanding what makes data dirty and why cleaning matters. We’ll explore techniques for identifying issues through exploratory data analysis. We’ll master handling missing values with appropriate strategies for different situations. We’ll learn to detect and remove duplicates intelligently. We’ll discover methods for fixing data type issues and standardizing formats. We’ll examine outlier detection and handling techniques. We’ll explore text cleaning for categorical data. Throughout, we’ll use practical examples with real-world messiness, ensuring you develop skills that transfer directly to your projects.

Understanding Data Quality: What Makes Data Dirty?

Before cleaning data, you need to recognize what “dirty” means. Data quality problems fall into several categories, each requiring different cleaning approaches. Understanding these categories helps you systematically identify and address issues.

Missing Values: The Universal Problem

Missing values are the most common data quality issue. They appear in nearly every real-world dataset. A survey respondent skips a question. A sensor fails to record a measurement. A database field is left blank. A value is so implausible it’s better treated as missing. Missing data manifests as NaN (Not a Number) in Pandas, NULL in databases, or empty strings in text files.

Missing values cause immediate problems—many machine learning algorithms can’t handle them and will throw errors if you try to train on data with gaps. Even algorithms that tolerate missing values may make poor assumptions about them. But missing values also carry information: why is this value missing? Is it missing randomly, or does the missingness itself indicate something meaningful?

Three types of missing data require different approaches:

Missing Completely at Random (MCAR): The probability of missing is the same for all observations. A lab technician accidentally spills coffee on random forms, making some values unreadable. This is the easiest type to handle because missingness doesn’t correlate with anything.

Missing at Random (MAR): The probability of missing depends on observed data but not the missing value itself. Higher-income individuals might be less likely to report income, but given other variables like education and occupation, missingness is random. This requires more sophisticated handling.

Missing Not at Random (MNAR): The probability of missing depends on the value itself. People with very high incomes specifically refuse to report income. This is the hardest to handle properly and can introduce bias if mismanaged.

Duplicates: Redundant Information

Duplicate records waste space and bias analysis. If the same customer appears three times in your dataset, their preferences get triple-weighted in any analysis. Duplicates arise from data integration (merging databases with overlapping records), user behavior (same person submitting multiple times), or system errors (database transactions creating multiple entries).

Identifying duplicates sounds simple but has subtleties. Should two records match on all columns to be duplicates? Just key columns? What about records that are almost identical with minor differences—are they duplicates or different records? These questions require domain knowledge and careful consideration.

Data Type Issues: Values in Wrong Forms

Columns often load with incorrect data types. Numeric values might be read as strings because they contain commas or currency symbols. Dates might be text rather than datetime objects. Boolean values might be encoded as “Yes”/”No” strings or 1/0 integers. Categories might be numbers that should be treated as labels, not quantities.

Wrong types prevent proper operations. You can’t calculate the mean of a numeric column if it’s stored as strings. You can’t extract the month from a date if it’s text. You can’t use one-hot encoding on categories if they’re mixed with numeric codes. Type correction is often necessary before any analysis.

Inconsistent Formatting: Same Data, Different Forms

The same information appears in multiple formats within a dataset. Phone numbers might be “(555) 123-4567”, “555-123-4567”, “5551234567”, or “+1-555-123-4567”. Names might be “John Smith”, “Smith, John”, “john smith”, or “SMITH, JOHN”. Countries might be “USA”, “United States”, “US”, or “America”. This inconsistency makes grouping, matching, and analysis difficult.

Dates are particularly problematic. Is “03/04/2024” March 4th or April 3rd? Depends on whether you’re using MM/DD/YYYY (US) or DD/MM/YYYY (most of the world) format. Timestamps might or might not include timezone information. These inconsistencies cause errors and misinterpretation.

Outliers: Extreme Values

Outliers are values far from the bulk of the distribution. A person’s age listed as 200. An income of $50 billion for a typical survey. A temperature of 500°F recorded by a weather station. Outliers arise from measurement errors, data entry mistakes, system malfunctions, or rarely, genuine extreme values.

The challenge with outliers is distinguishing errors from legitimate extreme values. A billionaire’s income of $50 billion is an outlier but accurate. A data entry error recording $50 billion for a teacher is an outlier and inaccurate. Removing legitimate outliers causes bias. Keeping errors corrupts analysis. Context and domain knowledge guide appropriate handling.

Invalid Values: Nonsensical Data

Some values are clearly wrong. Negative ages. Dates in the future for historical events. Grades of 150 on a 100-point scale. Percentages above 100 or below 0. Impossible category combinations. These violations of domain constraints indicate data quality problems requiring correction or removal.

Understanding these categories of data quality issues helps you approach cleaning systematically. You’ll check for missing values, identify duplicates, verify data types, standardize formats, detect outliers, and validate domain constraints. Each check reveals issues that could derail your machine learning project if left unaddressed.

Initial Data Exploration: Identifying Issues

Before cleaning data, you must understand it. Systematic exploration reveals what issues exist and guides your cleaning strategy. This initial assessment prevents wasted effort on non-issues and ensures you address real problems.

Loading and First Inspection

Start by loading your data and performing basic inspection:

import pandas as pd

import numpy as np

# Load the dataset

# In real projects, this would be: df = pd.read_csv('your_data.csv')

# For demonstration, we'll create a deliberately messy dataset

np.random.seed(42)

# Create sample messy data representing customer information

messy_data = {

'customer_id': [1001, 1002, 1003, 1001, 1004, 1005, 1006, 1007, 1008, 1009,

1010, 1011, 1012, 1013, 1014, 1015],

'name': ['John Smith', 'jane doe', 'MIKE JONES', 'John Smith', 'Alice Brown',

'Bob Wilson', np.nan, 'Eve Davis', 'Charlie Miller', 'Dave Lee',

'Frank White', 'Grace Black', 'Henry Green', '', 'Ivy Blue', 'Jack Red'],

'age': ['25', '35', 'thirty', '25', np.nan, '42', '38', '29', '200', '33',

'28', '45', '31', '27', '36', 'unknown'],

'email': ['john@email.com', 'jane@email.com', 'mike@email.com', 'john@email.com',

'alice@email.com', 'bob@email', np.nan, 'eve@email.com',

'charlie@email.com', 'dave@email.com', 'frank@email.com',

'grace@email.com', 'henry@email.com', 'missing', 'ivy@email.com',

'jack@email.com'],

'purchase_amount': [150.50, 250.75, 89.99, 150.50, np.nan, 175.00, 220.30,

195.80, 12000.00, 165.40, 185.90, 205.60, 170.25,

155.00, 190.35, 178.50],

'signup_date': ['2023-01-15', '2023-02-20', '15-03-2023', '2023-01-15',

'2023-04-10', 'invalid', '2023-06-25', '2023-07-30',

'2023-08-15', '2023-09-05', '2023-10-12', '2023-11-20',

'2023-12-01', '2024-01-08', '2024-02-14', '2024-03-22']

}

df = pd.DataFrame(messy_data)

print("=" * 70)

print("INITIAL DATA INSPECTION")

print("=" * 70)

print("\n1. First Look at the Data")

print("-" * 70)

print(df.head(10))

print()

print("2. Dataset Dimensions")

print("-" * 70)

print(f"Number of rows: {df.shape[0]}")

print(f"Number of columns: {df.shape[1]}")

print(f"Total cells: {df.shape[0] * df.shape[1]}")What this code demonstrates: We start by loading data and examining the first few rows. This immediately reveals several issues: duplicate customer_id (1001), inconsistent name formatting (different cases), age as strings including text values, email with inconsistent formats, a suspicious large purchase_amount (12000), and inconsistent date formats. This quick inspection gives us our cleaning roadmap.

Checking Data Types

Understanding current data types reveals type correction needs:

print("\n3. Data Types")

print("-" * 70)

print(df.dtypes)

print()

print("4. Detailed Information")

print("-" * 70)

df.info()

print()This shows which columns need type conversion. Age should be numeric but is currently an object (string). Signup_date should be datetime but is an object. These type issues prevent proper analysis and must be corrected.

Identifying Missing Values

Missing value analysis shows how much data is missing and where:

print("\n5. Missing Values Analysis")

print("-" * 70)

# Count missing values per column

missing_counts = df.isnull().sum()

missing_percentage = (missing_counts / len(df) * 100).round(2)

missing_summary = pd.DataFrame({

'Missing_Count': missing_counts,

'Percentage': missing_percentage

})

print("Missing values per column:")

print(missing_summary[missing_summary['Missing_Count'] > 0])

print()

# Total missing

total_missing = missing_counts.sum()

total_cells = df.shape[0] * df.shape[1]

print(f"Total missing values: {total_missing} out of {total_cells} cells")

print(f"Overall missing percentage: {(total_missing/total_cells*100):.2f}%")

print()This reveals age, email, and purchase_amount have missing values. The percentage helps decide whether to impute or drop. A column missing 90% of values might be dropped entirely. A column missing 5% might be imputed.

Finding Duplicates

Duplicate analysis shows redundant records:

print("\n6. Duplicate Records")

print("-" * 70)

# Check for complete row duplicates

complete_duplicates = df.duplicated()

print(f"Complete duplicate rows: {complete_duplicates.sum()}")

if complete_duplicates.sum() > 0:

print("\nDuplicate rows:")

print(df[complete_duplicates])

print()

# Check for duplicates based on key column (customer_id)

id_duplicates = df.duplicated(subset=['customer_id'], keep=False)

print(f"Duplicate customer_ids: {id_duplicates.sum()}")

if id_duplicates.sum() > 0:

print("\nRecords with duplicate customer_ids:")

print(df[id_duplicates].sort_values('customer_id'))

print()This identifies that customer 1001 appears twice. We need to decide whether to keep the first occurrence, last occurrence, or merge the records intelligently.

Statistical Summary and Outlier Detection

Statistical summaries reveal outliers and distribution characteristics:

print("\n7. Statistical Summary (Numeric Columns)")

print("-" * 70)

# Convert purchase_amount to numeric for statistics (will handle properly later)

df_temp = df.copy()

df_temp['purchase_amount_numeric'] = pd.to_numeric(df_temp['purchase_amount'], errors='coerce')

print(df_temp[['purchase_amount_numeric']].describe())

print()

# Look at value distributions

print("8. Value Distributions")

print("-" * 70)

print("\nAge value counts:")

print(df['age'].value_counts())

print()

print("Email domain analysis:")

# Extract domains from emails (will handle missing/invalid later)

domains = df['email'].str.extract(r'@(.+)', expand=False)

print("Domains found:")

print(domains.value_counts())What this exploration demonstrates: The statistical summary shows purchase_amount ranges from 89.99 to 12000.00—that maximum of 12000 is likely an outlier worth investigating. The age value counts reveal non-numeric values like “thirty” and “unknown” that need handling. The email analysis shows some emails are incomplete or invalid. This systematic exploration creates a comprehensive picture of data quality issues requiring attention.

Handling Missing Values: Strategies and Implementation

Missing values require thoughtful handling. The right approach depends on why data is missing, how much is missing, and what the missing values represent. Different strategies suit different situations.

Strategy 1: Deletion

Deleting missing values is the simplest approach but has tradeoffs. Delete rows with missing values only when few rows are affected and missingness is random. Delete columns with missing values when most values are missing and the column isn’t critical.

print("MISSING VALUE HANDLING")

print("=" * 70)

print("\nOriginal dataset shape:", df.shape)

print()

# Option 1: Drop rows with ANY missing value

df_dropna_any = df.dropna()

print("Option 1 - Drop rows with any missing value:")

print(f" Rows remaining: {len(df_dropna_any)} (removed {len(df) - len(df_dropna_any)} rows)")

print(f" Percentage of data kept: {len(df_dropna_any)/len(df)*100:.1f}%")

print()

# Option 2: Drop rows only if ALL values are missing

df_dropna_all = df.dropna(how='all')

print("Option 2 - Drop rows only if all values are missing:")

print(f" Rows remaining: {len(df_dropna_all)} (removed {len(df) - len(df_dropna_all)} rows)")

print()

# Option 3: Drop rows based on specific columns

df_dropna_subset = df.dropna(subset=['customer_id', 'name'])

print("Option 3 - Drop rows only if customer_id or name is missing:")

print(f" Rows remaining: {len(df_dropna_subset)} (removed {len(df) - len(df_dropna_subset)} rows)")

print()

# Option 4: Drop columns with too many missing values

threshold = 0.5 # Drop if > 50% missing

df_dropcols = df.dropna(axis=1, thresh=int(threshold * len(df)))

print(f"Option 4 - Drop columns with >{threshold*100:.0f}% missing:")

print(f" Columns remaining: {len(df_dropcols.columns)}")

print(f" Columns kept: {df_dropcols.columns.tolist()}")When to use deletion:

- Few rows affected (< 5% of data)

- Missing completely at random

- No pattern to missingness

- Column is mostly missing (> 50%) and not essential

When NOT to use deletion:

- Large portion of data is missing

- Missingness correlates with other variables

- The fact that data is missing contains information

Strategy 2: Imputation with Statistics

Imputation fills missing values with estimates. Common strategies use statistical measures from the available data.

print("\n" + "=" * 70)

print("IMPUTATION STRATEGIES")

print("=" * 70)

df_imputed = df.copy()

# First, handle the age column - convert to numeric, making invalid values NaN

df_imputed['age'] = pd.to_numeric(df_imputed['age'], errors='coerce')

print("\n1. Mean Imputation (for continuous, symmetric distributions)")

print("-" * 70)

age_mean = df_imputed['age'].mean()

print(f"Mean age: {age_mean:.2f}")

df_imputed['age_mean_imputed'] = df_imputed['age'].fillna(age_mean)

print("Missing ages filled with mean")

print()

print("2. Median Imputation (for continuous, skewed distributions)")

print("-" * 70)

age_median = df_imputed['age'].median()

print(f"Median age: {age_median:.2f}")

df_imputed['age_median_imputed'] = df_imputed['age'].fillna(age_median)

print("Missing ages filled with median")

print()

print("3. Mode Imputation (for categorical variables)")

print("-" * 70)

# For demonstration, let's say name categories

# In real scenario, this might be applied to categorical columns

print("Mode is most common value - use for categories")

print("Example: Most common customer type, most frequent region, etc.")

print()

print("4. Forward Fill (for time series data)")

print("-" * 70)

print("Use previous valid value - appropriate when values change slowly")

df_imputed['age_ffill'] = df_imputed['age'].fillna(method='ffill')

print("Each missing value filled with previous valid value")

print()

print("5. Backward Fill (for time series data)")

print("-" * 70)

print("Use next valid value - appropriate when values change slowly")

df_imputed['age_bfill'] = df_imputed['age'].fillna(method='bfill')

print("Each missing value filled with next valid value")

print()

# Show results

print("\nComparison of imputation methods:")

print("-" * 70)

comparison = df_imputed[['customer_id', 'age', 'age_mean_imputed',

'age_median_imputed']].head(10)

print(comparison)What this code demonstrates: Different imputation strategies suit different data characteristics. Mean imputation works well for symmetric distributions but is pulled by outliers. Median imputation is robust to outliers and better for skewed distributions. Mode imputation suits categorical data. Forward/backward fill work for time series where values change gradually. The choice depends on your data’s nature and the column’s distribution.

Strategy 3: Constant or Custom Value

Sometimes the missing value itself has meaning, or a specific value is appropriate:

print("\n" + "=" * 70)

print("CUSTOM VALUE IMPUTATION")

print("=" * 70)

df_custom = df.copy()

# Convert age to numeric first

df_custom['age'] = pd.to_numeric(df_custom['age'], errors='coerce')

# Fill with custom value that makes domain sense

print("\n1. Domain-Specific Constant")

print("-" * 70)

# If missing age means "not provided", might use special code

df_custom['age_custom'] = df_custom['age'].fillna(-1) # -1 indicates "not provided"

print("Missing ages filled with -1 (indicating 'not provided')")

print()

# Fill email with placeholder

print("2. Placeholder for Missing Text")

print("-" * 70)

df_custom['email_filled'] = df_custom['email'].fillna('no_email@unknown.com')

print("Missing emails filled with placeholder")

print()

# Create indicator column

print("3. Missing Indicator Variable")

print("-" * 70)

df_custom['age_was_missing'] = df_custom['age'].isnull().astype(int)

print("Created binary column indicating whether age was originally missing")

print("This preserves information that the value was missing")

print()

print(df_custom[['customer_id', 'age', 'age_custom', 'age_was_missing']].head(10))When to use custom values:

- Missing has specific meaning in your domain

- You want to distinguish missing from other values

- You want to preserve information that data was missing

Choosing the Right Strategy

The decision tree for missing value handling:

print("\n" + "=" * 70)

print("DECISION GUIDE FOR MISSING VALUE HANDLING")

print("=" * 70)

print("""

1. Check how much data is missing:

- < 5%: Can delete or impute

- 5-30%: Impute recommended

- > 30%: Consider if column is necessary

2. Check why data is missing:

- Random/technical error: Impute with statistics

- Systematic pattern: Consider domain knowledge

- Missing = meaningful: Use indicator or special value

3. Consider data type:

- Continuous symmetric: Mean imputation

- Continuous skewed: Median imputation

- Categorical: Mode imputation

- Time series: Forward/backward fill

- Text: Placeholder value

4. For critical columns:

- Consider multiple imputation methods

- Test impact on model performance

- Create missing indicator alongside imputation

5. Document your decisions:

- Record why you chose each strategy

- Note assumptions made

- Track which values were imputed

""")What this demonstrates: Missing value handling requires judgment. You consider multiple factors: amount missing, why it’s missing, data type, domain meaning, and criticality. There’s rarely one “correct” answer—you make informed decisions based on these considerations and document your reasoning for future reference.

Removing Duplicates: Finding and Eliminating Redundancy

Duplicate records create bias and waste resources. Identifying and removing them requires understanding what constitutes a duplicate in your specific context.

Complete Row Duplicates

The simplest case is rows that are identical in all columns:

print("=" * 70)

print("DUPLICATE REMOVAL")

print("=" * 70)

df_dedup = df.copy()

print("\n1. Complete Row Duplicates")

print("-" * 70)

print(f"Original rows: {len(df_dedup)}")

# Check for complete duplicates

complete_dups = df_dedup.duplicated()

print(f"Complete duplicate rows: {complete_dups.sum()}")

if complete_dups.sum() > 0:

print("\nDuplicate rows:")

print(df_dedup[complete_dups])

# Remove duplicates, keeping first occurrence

df_dedup_complete = df_dedup.drop_duplicates()

print(f"\nAfter removing complete duplicates: {len(df_dedup_complete)} rows")Key Column Duplicates

More commonly, duplicates match on key columns but differ in others:

print("\n2. Duplicates Based on Key Column (customer_id)")

print("-" * 70)

# Identify duplicates based on customer_id

id_dups = df_dedup.duplicated(subset=['customer_id'], keep=False)

print(f"Records with duplicate customer_ids: {id_dups.sum()}")

if id_dups.sum() > 0:

print("\nDuplicate records (sorted by customer_id):")

print(df_dedup[id_dups].sort_values('customer_id'))

print()

# Strategy 1: Keep first occurrence

df_keep_first = df_dedup.drop_duplicates(subset=['customer_id'], keep='first')

print(f"Strategy 1 - Keep first: {len(df_keep_first)} rows remaining")

# Strategy 2: Keep last occurrence

df_keep_last = df_dedup.drop_duplicates(subset=['customer_id'], keep='last')

print(f"Strategy 2 - Keep last: {len(df_keep_last)} rows remaining")

# Strategy 3: Remove all duplicates

df_remove_all = df_dedup[~df_dedup.duplicated(subset=['customer_id'], keep=False)]

print(f"Strategy 3 - Remove all duplicates: {len(df_remove_all)} rows remaining")Intelligent Duplicate Handling

Sometimes you need sophisticated logic to handle duplicates:

print("\n3. Intelligent Duplicate Handling")

print("-" * 70)

# For each customer_id, keep the record with most complete information

def keep_most_complete(group):

"""Keep the row with fewest missing values"""

missing_counts = group.isnull().sum(axis=1)

return group.loc[missing_counts.idxmin()]

df_smart_dedup = df_dedup.groupby('customer_id', as_index=False).apply(keep_most_complete)

df_smart_dedup = df_smart_dedup.reset_index(drop=True)

print("Strategy 4 - Keep most complete record for each customer_id:")

print(f"Rows remaining: {len(df_smart_dedup)}")

print()

# Show the result for the duplicate customer_id

print("Example: Customer 1001 (had 2 records)")

print(df_smart_dedup[df_smart_dedup['customer_id'] == 1001])What this code demonstrates: Duplicate handling requires strategy. Simply keeping the first occurrence might lose newer, more complete information. Keeping the last might prefer more recent data but miss older details. Intelligent handling considers which record is most complete, most recent, or most reliable. The right choice depends on your data and problem.

Near-Duplicates: Fuzzy Matching

Sometimes records are similar but not identical—fuzzy matching identifies these:

print("\n4. Near-Duplicates (Similar but not identical)")

print("-" * 70)

print("""

Near-duplicates have slight differences:

- "John Smith" vs "Jon Smith" (typo)

- "john@email.com" vs "JOHN@EMAIL.COM" (case)

- "555-1234" vs "5551234" (formatting)

Tools for finding near-duplicates:

- fuzzywuzzy library for string similarity

- Levenshtein distance for edit distance

- Soundex/Metaphone for phonetic matching

Example approach:

1. Standardize format (lowercase, remove punctuation)

2. Calculate similarity scores

3. Set threshold (e.g., 90% similar = duplicate)

4. Manual review of borderline cases

""")

# Simple example: case-insensitive name comparison

df_dedup['name_lower'] = df_dedup['name'].str.lower().str.strip()

name_dups = df_dedup.duplicated(subset=['name_lower'], keep=False)

print(f"\nPotential near-duplicates based on case-insensitive names: {name_dups.sum()}")

if name_dups.sum() > 0:

print(df_dedup[name_dups][['customer_id', 'name', 'name_lower']])What this demonstrates: Exact matching misses near-duplicates caused by typos, formatting, or case differences. Fuzzy matching and string similarity algorithms identify records that are probably the same despite minor differences. This requires more computational effort but catches duplicates exact matching misses.

Fixing Data Types and Standardizing Formats

Data with correct types and consistent formats enables proper analysis and prevents errors during model training.

Converting Data Types

Type conversion transforms columns to appropriate types for their data:

print("=" * 70)

print("DATA TYPE CORRECTION")

print("=" * 70)

df_types = df.drop_duplicates(subset=['customer_id']).copy()

print("\n1. Original Data Types")

print("-" * 70)

print(df_types.dtypes)

print()

# Age: Convert to numeric

print("2. Converting Age to Numeric")

print("-" * 70)

print("Original age values:", df_types['age'].unique())

# Convert to numeric, invalid values become NaN

df_types['age_numeric'] = pd.to_numeric(df_types['age'], errors='coerce')

print("After conversion:", df_types['age_numeric'].dtype)

print(f"Values that couldn't convert: {df_types['age_numeric'].isnull().sum()}")

print()

# Handle the converted NaN values (from invalid entries)

age_median = df_types['age_numeric'].median()

df_types['age_numeric'] = df_types['age_numeric'].fillna(age_median)

print(f"Filled missing values with median: {age_median}")

print()

# Signup Date: Convert to datetime

print("3. Converting Dates to Datetime")

print("-" * 70)

print("Original date values:", df_types['signup_date'].unique()[:5])

df_types['signup_datetime'] = pd.to_datetime(df_types['signup_date'],

format='%Y-%m-%d',

errors='coerce')

print("After conversion:", df_types['signup_datetime'].dtype)

print(f"Values that couldn't convert: {df_types['signup_datetime'].isnull().sum()}")

print()

# Purchase Amount: Already numeric but verify

print("4. Verifying Numeric Columns")

print("-" * 70)

print(f"Purchase amount type: {df_types['purchase_amount'].dtype}")

print(f"Range: {df_types['purchase_amount'].min():.2f} to {df_types['purchase_amount'].max():.2f}")

print()

print("5. Updated Data Types")

print("-" * 70)

print(df_types[['age_numeric', 'signup_datetime', 'purchase_amount']].dtypes)What this code demonstrates: Type conversion requires error handling. Invalid values that can’t convert should become NaN rather than causing errors. The errors='coerce' parameter makes conversion safe. After conversion, you handle the new NaN values from invalid entries. Dates require format specification for correct parsing.

Standardizing Text Formats

Text data needs standardization for consistency:

print("\n" + "=" * 70)

print("FORMAT STANDARDIZATION")

print("=" * 70)

df_standard = df_types.copy()

# Name standardization

print("\n1. Name Standardization")

print("-" * 70)

print("Original names:")

print(df_standard['name'].head(8))

print()

# Standardize: trim whitespace, title case

df_standard['name_clean'] = df_standard['name'].str.strip().str.title()

print("After standardization (Title Case, trimmed):")

print(df_standard['name_clean'].head(8))

print()

# Email standardization

print("2. Email Standardization")

print("-" * 70)

print("Original emails:")

print(df_standard['email'].head(8))

print()

# Standardize: lowercase, trim whitespace

df_standard['email_clean'] = df_standard['email'].str.lower().str.strip()

# Identify invalid emails (simple check for @ symbol and domain)

df_standard['email_valid'] = df_standard['email_clean'].str.contains('@.+\..+',

regex=True,

na=False)

print("After standardization (lowercase, trimmed):")

print(df_standard[['email', 'email_clean', 'email_valid']].head(8))

print()

invalid_emails = df_standard[~df_standard['email_valid'] & df_standard['email_clean'].notna()]

print(f"Invalid email formats found: {len(invalid_emails)}")

if len(invalid_emails) > 0:

print(invalid_emails[['customer_id', 'email_clean']])What this demonstrates: Text standardization prevents issues from inconsistent formatting. Names with different capitalizations become consistent. Emails in mixed case are lowercased. Validation rules identify values that don’t match expected patterns. This standardization enables proper grouping, deduplication, and analysis.

Category Standardization

Categorical values need consistent labels:

print("\n3. Category Standardization")

print("-" * 70)

print("""

Common category issues:

- Inconsistent case: "male" vs "Male" vs "MALE"

- Spelling variations: "US" vs "USA" vs "United States"

- Extra whitespace: "Small " vs "Small"

- Abbreviations: "CA" vs "California"

Solution: Create mapping dictionary

Example:

""")

# Example with hypothetical region column

regions = ['north', 'NORTH', 'N', 'South', 'south', 's', 'East', 'east']

region_mapping = {

'north': 'North',

'n': 'North',

'NORTH': 'North',

'south': 'South',

's': 'South',

'east': 'East',

}

print("Original values:", regions)

print("\nMapping dictionary:", region_mapping)

print("\nStandardized:")

standardized = [region_mapping.get(r.lower(), r) for r in regions]

print(standardized)What this demonstrates: Category standardization requires domain knowledge to create mappings. You identify all variations of each category and map them to standard labels. This ensures consistent grouping and prevents algorithms from treating “North” and “north” as different categories.

Detecting and Handling Outliers

Outliers can distort analysis and model training. Detecting and appropriately handling them improves data quality.

Statistical Outlier Detection

Common statistical methods identify values far from the distribution center:

print("=" * 70)

print("OUTLIER DETECTION AND HANDLING")

print("=" * 70)

df_outliers = df_types.copy()

# Ensure numeric columns are properly typed

df_outliers['age_numeric'] = pd.to_numeric(df_outliers['age_numeric'], errors='coerce')

df_outliers['purchase_amount'] = pd.to_numeric(df_outliers['purchase_amount'], errors='coerce')

print("\n1. Z-Score Method (Standard Deviations)")

print("-" * 70)

# Calculate z-scores

age_mean = df_outliers['age_numeric'].mean()

age_std = df_outliers['age_numeric'].std()

df_outliers['age_zscore'] = (df_outliers['age_numeric'] - age_mean) / age_std

# Identify outliers (|z-score| > 3)

age_outliers_z = df_outliers[abs(df_outliers['age_zscore']) > 3]

print(f"Age outliers (|z-score| > 3): {len(age_outliers_z)}")

if len(age_outliers_z) > 0:

print(age_outliers_z[['customer_id', 'age_numeric', 'age_zscore']])

print()

print("2. IQR Method (Interquartile Range)")

print("-" * 70)

# Calculate IQR

Q1 = df_outliers['purchase_amount'].quantile(0.25)

Q3 = df_outliers['purchase_amount'].quantile(0.75)

IQR = Q3 - Q1

# Define outlier bounds

lower_bound = Q1 - 1.5 * IQR

upper_bound = Q3 + 1.5 * IQR

print(f"Purchase amount Q1: ${Q1:.2f}")

print(f"Purchase amount Q3: ${Q3:.2f}")

print(f"IQR: ${IQR:.2f}")

print(f"Outlier bounds: [${lower_bound:.2f}, ${upper_bound:.2f}]")

print()

# Identify outliers

purchase_outliers = df_outliers[

(df_outliers['purchase_amount'] < lower_bound) |

(df_outliers['purchase_amount'] > upper_bound)

]

print(f"Purchase amount outliers: {len(purchase_outliers)}")

if len(purchase_outliers) > 0:

print(purchase_outliers[['customer_id', 'purchase_amount']])

print()What this code demonstrates: Two common outlier detection methods each have strengths. Z-score (standard deviations from mean) assumes normal distribution and is sensitive to extreme outliers. IQR (interquartile range) is robust to outliers itself and works with any distribution. The choice depends on your data’s characteristics.

Handling Outliers

Once identified, outliers need appropriate treatment:

print("3. Outlier Handling Strategies")

print("-" * 70)

df_handled = df_outliers.copy()

# Strategy 1: Remove outliers

print("\nStrategy 1: Remove Outliers")

df_removed = df_handled[

(df_handled['purchase_amount'] >= lower_bound) &

(df_handled['purchase_amount'] <= upper_bound)

]

print(f"Rows removed: {len(df_handled) - len(df_removed)}")

print(f"Rows remaining: {len(df_removed)}")

print()

# Strategy 2: Cap outliers (Winsorization)

print("Strategy 2: Cap at Bounds (Winsorization)")

df_handled['purchase_capped'] = df_handled['purchase_amount'].clip(

lower=lower_bound,

upper=upper_bound

)

print(f"Values capped: {(df_handled['purchase_amount'] != df_handled['purchase_capped']).sum()}")

print("Before and after capping:")

print(df_handled[df_handled['purchase_amount'] != df_handled['purchase_capped']]

[['customer_id', 'purchase_amount', 'purchase_capped']])

print()

# Strategy 3: Transform (log transformation)

print("Strategy 3: Log Transformation")

# Add small constant to handle any zeros

df_handled['purchase_log'] = np.log1p(df_handled['purchase_amount'])

print("Log transformation reduces impact of extreme values")

print(f"Original range: [{df_handled['purchase_amount'].min():.2f}, {df_handled['purchase_amount'].max():.2f}]")

print(f"Log-transformed range: [{df_handled['purchase_log'].min():.4f}, {df_handled['purchase_log'].max():.4f}]")

print()

# Strategy 4: Flag outliers but keep them

print("Strategy 4: Flag Outliers (Keep but Mark)")

df_handled['is_outlier'] = (

(df_handled['purchase_amount'] < lower_bound) |

(df_handled['purchase_amount'] > upper_bound)

).astype(int)

print("Created binary indicator column")

print(f"Outliers flagged: {df_handled['is_outlier'].sum()}")What this demonstrates: Outlier handling requires judgment about whether values are errors or legitimate extremes. Removal eliminates outliers but loses data. Capping preserves row count while limiting extreme values’ influence. Transformation reduces impact without removing data. Flagging preserves all information while marking suspicious values for special attention. The right choice depends on whether outliers are errors and how your model handles extreme values.

Putting It All Together: Complete Data Cleaning Pipeline

Let’s integrate all cleaning steps into a comprehensive pipeline:

print("=" * 70)

print("COMPLETE DATA CLEANING PIPELINE")

print("=" * 70)

def clean_customer_data(df):

"""

Comprehensive data cleaning pipeline for customer data

Steps:

1. Remove duplicates

2. Fix data types

3. Handle missing values

4. Standardize formats

5. Handle outliers

6. Validate results

"""

print("\n=== STEP 1: REMOVE DUPLICATES ===")

print(f"Starting rows: {len(df)}")

# Remove duplicates based on customer_id, keeping most complete record

def keep_most_complete(group):

missing_counts = group.isnull().sum(axis=1)

return group.loc[missing_counts.idxmin()]

df_clean = df.groupby('customer_id', as_index=False).apply(keep_most_complete)

df_clean = df_clean.reset_index(drop=True)

print(f"After deduplication: {len(df_clean)} rows")

print("\n=== STEP 2: FIX DATA TYPES ===")

# Age to numeric

df_clean['age'] = pd.to_numeric(df_clean['age'], errors='coerce')

print("Age converted to numeric")

# Date to datetime

df_clean['signup_date'] = pd.to_datetime(df_clean['signup_date'], errors='coerce')

print("Signup date converted to datetime")

print("\n=== STEP 3: HANDLE MISSING VALUES ===")

# Age: median imputation

age_median = df_clean['age'].median()

df_clean['age'] = df_clean['age'].fillna(age_median)

print(f"Age missing values filled with median: {age_median}")

# Email: mark as missing

df_clean['email'] = df_clean['email'].fillna('no_email@unknown.com')

print("Email missing values filled with placeholder")

# Purchase amount: mean imputation

purchase_mean = df_clean['purchase_amount'].mean()

df_clean['purchase_amount'] = df_clean['purchase_amount'].fillna(purchase_mean)

print(f"Purchase amount missing values filled with mean: ${purchase_mean:.2f}")

# Date: drop rows (too few to matter)

rows_before = len(df_clean)

df_clean = df_clean.dropna(subset=['signup_date'])

print(f"Rows with invalid dates dropped: {rows_before - len(df_clean)}")

print("\n=== STEP 4: STANDARDIZE FORMATS ===")

# Names: title case, trim

df_clean['name'] = df_clean['name'].str.strip().str.title()

print("Names standardized to Title Case")

# Email: lowercase, trim

df_clean['email'] = df_clean['email'].str.lower().str.strip()

print("Emails standardized to lowercase")

print("\n=== STEP 5: HANDLE OUTLIERS ===")

# Cap extreme ages

df_clean['age'] = df_clean['age'].clip(lower=18, upper=100)

outliers_capped = (df_clean['age'] == 100).sum()

print(f"Age outliers capped at 100: {outliers_capped}")

# Cap extreme purchase amounts

Q1 = df_clean['purchase_amount'].quantile(0.25)

Q3 = df_clean['purchase_amount'].quantile(0.75)

IQR = Q3 - Q1

upper_bound = Q3 + 1.5 * IQR

df_clean['purchase_amount'] = df_clean['purchase_amount'].clip(upper=upper_bound)

print(f"Purchase amounts capped at ${upper_bound:.2f}")

print("\n=== STEP 6: VALIDATE RESULTS ===")

print(f"Final row count: {len(df_clean)}")

print(f"Missing values remaining: {df_clean.isnull().sum().sum()}")

print(f"Duplicate customer_ids: {df_clean.duplicated(subset=['customer_id']).sum()}")

print(f"Age range: [{df_clean['age'].min():.0f}, {df_clean['age'].max():.0f}]")

print(f"Purchase range: [${df_clean['purchase_amount'].min():.2f}, ${df_clean['purchase_amount'].max():.2f}]")

return df_clean

# Apply the cleaning pipeline

df_final = clean_customer_data(df)

print("\n" + "=" * 70)

print("CLEANING COMPLETE - Final Dataset Ready for Machine Learning")

print("=" * 70)

print("\nFinal cleaned data (first 5 rows):")

print(df_final.head())

print("\nData types:")

print(df_final.dtypes)What this pipeline demonstrates: A systematic cleaning pipeline applies all techniques in logical order. Duplicates are removed first to avoid wasted effort on duplicate records. Types are corrected to enable proper operations. Missing values are handled with appropriate strategies. Formats are standardized for consistency. Outliers are addressed to prevent extreme values from distorting analysis. Finally, validation confirms the data is clean and ready for machine learning. This pipeline can be adapted to your specific data and requirements.

Conclusion: Clean Data, Better Models

Data cleaning is not glamorous work, but it’s fundamental to machine learning success. The skills covered in this guide—systematic exploration to identify issues, strategic handling of missing values, intelligent duplicate removal, proper type correction and format standardization, and appropriate outlier treatment—transform raw, messy data into clean inputs that enable effective model training.

The time invested in thorough data cleaning pays off multiplicatively. Clean data trains faster because algorithms don’t fail on type errors or missing values. Models perform better because they learn from quality signals rather than noise and errors. Results are more reliable because they’re not distorted by duplicates or outliers. Analysis is more trustworthy because patterns reflect reality rather than data quality issues.

Remember that data cleaning is iterative, not a one-time task. As you explore data and build models, you’ll discover new quality issues requiring attention. Return to cleaning, fix issues, and continue. This iteration between cleaning, exploration, and modeling is normal and productive. Each cycle improves data quality and model performance.

Develop a systematic cleaning habit. For every new dataset, perform comprehensive exploration first. Identify all issues before attempting to fix anything. Create a cleaning pipeline that addresses issues in logical order. Document your decisions and reasoning. Validate that cleaning actually improved quality. This discipline prevents rushed cleaning that misses problems or introduces new ones.

As you gain experience, you’ll develop intuition for data quality issues. You’ll recognize patterns indicating specific problems. You’ll know which cleaning approaches work best for different situations. You’ll build reusable cleaning pipelines adapted to your domain. This expertise makes you more efficient and your machine learning projects more successful.

Clean data is the foundation of successful machine learning. Invest the time to do it right, and every subsequent step—modeling, validation, deployment—becomes easier and more effective. Your models will be better, your insights more reliable, and your machine learning career more successful.