Implementing logistic regression with scikit-learn requires four steps: importing and splitting data, scaling features with StandardScaler, fitting a LogisticRegression model, and evaluating with metrics like accuracy, classification_report, and roc_auc_score. Scikit-learn’s LogisticRegression class handles binary and multi-class problems, supports L1/L2/ElasticNet regularization through the penalty parameter, and offers multiple solvers optimized for different data sizes. The parameter C controls regularization strength (smaller C = stronger regularization), and predict_proba() returns class probabilities essential for threshold tuning and ROC curve analysis.

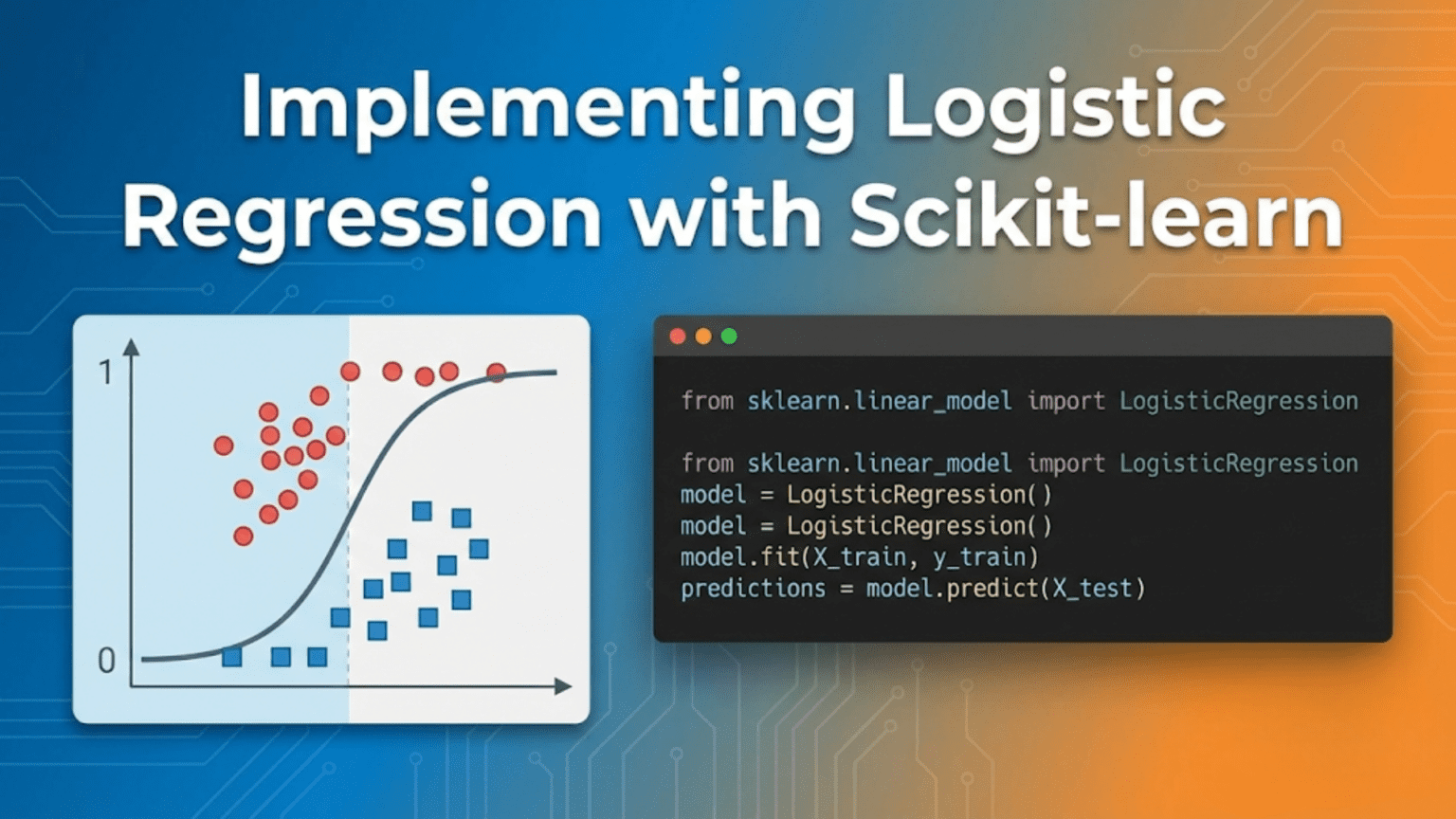

Introduction: From Theory to Working Code

The previous articles established the theory behind logistic regression: the sigmoid function squashing linear outputs into probabilities, the binary cross-entropy cost function, gradient descent optimizing the weights, and the decision boundary separating the two classes. Now it is time to move from equations to working, production-quality code.

Scikit-learn’s LogisticRegression class encapsulates all that theory into a clean, consistent API that follows the same fit() / predict() / score() interface used by every other supervised estimator in the library. Behind that simple interface lies a highly optimized implementation with multiple solvers, regularization options, multi-class strategies, and robust convergence handling, making it far more capable than the from-scratch version built in article 52.

This guide is deliberately practical. Every concept is grounded in runnable code. You will build a complete classification workflow from raw data to deployed model, understand every parameter of LogisticRegression, learn when to use each solver and regularization option, handle the full range of real-world complications (imbalanced classes, multi-class problems, hyperparameter tuning), and produce publication-quality evaluation reports.

By the end, you will have a reusable logistic regression template you can apply to any binary or multi-class classification problem.

The Scikit-learn API: Core Concepts

Before diving into logistic regression specifically, understanding scikit-learn’s universal API makes everything else click.

The Estimator Interface

# Every scikit-learn model follows this pattern:

# 1. Import

from sklearn.linear_model import LogisticRegression

# 2. Instantiate (set hyperparameters)

model = LogisticRegression(C=1.0, max_iter=1000)

# 3. Fit (learn from training data)

model.fit(X_train, y_train)

# 4. Predict

y_pred = model.predict(X_test) # Class labels: 0 or 1

y_proba = model.predict_proba(X_test) # Probabilities: [[p0, p1], ...]

# 5. Evaluate

score = model.score(X_test, y_test) # Returns accuracy by default

Learned Attributes (Available After fit())

model.fit(X_train, y_train)

# What the model learned:

print(model.coef_) # Weight matrix — shape: (1, n_features) for binary

print(model.intercept_) # Bias term — shape: (1,) for binary

print(model.classes_) # Class labels: array([0, 1])

print(model.n_iter_) # Actual iterations until convergence

print(model.n_features_in_) # Number of features seen during fit

Environment Setup and Data Preparation

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import warnings

# Silence deprecation notices only; ConvergenceWarning must stay visible
warnings.filterwarnings('ignore', category=FutureWarning)

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import (train_test_split, StratifiedKFold,

cross_val_score, GridSearchCV,

learning_curve)

from sklearn.preprocessing import StandardScaler, LabelEncoder

from sklearn.pipeline import Pipeline

from sklearn.metrics import (accuracy_score, precision_score,

recall_score, f1_score,

roc_auc_score, roc_curve,

confusion_matrix,

classification_report,

ConfusionMatrixDisplay,

precision_recall_curve,

average_precision_score)

from sklearn.datasets import (load_breast_cancer, load_iris,

make_classification)

print("All imports successful.")

print(f"scikit-learn version: {__import__('sklearn').__version__}")

Dataset 1: Binary Classification — Breast Cancer

The breast cancer dataset is the canonical binary classification benchmark: 569 examples, 30 features, two classes (malignant vs. benign).

Loading and Exploring

# ── Load dataset ────────────────────────────────────────────────

cancer = load_breast_cancer()

X, y = cancer.data, cancer.target

print("=" * 50)

print(" Breast Cancer Dataset")

print("=" * 50)

print(f" Examples: {X.shape[0]}")

print(f" Features: {X.shape[1]}")

print(f" Classes: {cancer.target_names}")

print(f" Class 0 (malignant): {(y==0).sum()} ({(y==0).mean()*100:.1f}%)")

print(f" Class 1 (benign): {(y==1).sum()} ({(y==1).mean()*100:.1f}%)")

print()

# Quick peek at features

df = pd.DataFrame(X, columns=cancer.feature_names)

df['target'] = y

print("Feature statistics (first 5 features):")

print(df.iloc[:, :5].describe().round(3))

Output:

==================================================

Breast Cancer Dataset

==================================================

Examples: 569

Features: 30

Classes: ['malignant' 'benign']

Class 0 (malignant): 212 (37.3%)

Class 1 (benign): 357 (62.7%)

Train-Test Split

# Stratify ensures both splits preserve the class ratio

X_train, X_test, y_train, y_test = train_test_split(

X, y,

test_size=0.2,

random_state=42,

stratify=y # Critical for classification splits

)

print(f"Training set: {X_train.shape[0]} examples")

print(f"Test set: {X_test.shape[0]} examples")

print(f"Train class 1: {y_train.mean()*100:.1f}%")

print(f"Test class 1: {y_test.mean()*100:.1f}%")

# Both should be ~62.7%, which confirms that stratification worked

Feature Scaling

# Logistic regression uses gradient-based optimization →

# features must be on similar scales for stable convergence.

# StandardScaler: mean=0, std=1 per feature.

scaler = StandardScaler()

X_train_s = scaler.fit_transform(X_train) # Fit on train ONLY

X_test_s = scaler.transform(X_test) # Apply same transform to test

print("Before scaling (first feature):")

print(f" mean={X_train[:, 0].mean():.2f}, std={X_train[:, 0].std():.2f}")

print("After scaling (first feature):")

print(f" mean={X_train_s[:, 0].mean():.4f}, std={X_train_s[:, 0].std():.4f}")

The LogisticRegression Class: Every Parameter Explained

# Full parameter signature with explanations:

model = LogisticRegression(
    penalty='l2',          # Regularization type: 'l1', 'l2', 'elasticnet', None
    C=1.0,                 # Inverse regularization strength (larger = weaker regularization)
    fit_intercept=True,    # Whether to add a bias term
    class_weight=None,     # Handle class imbalance: None, 'balanced', or dict
    random_state=42,       # Seed for reproducibility (used by sag, saga, and liblinear)
    solver='lbfgs',        # Optimization algorithm (see below)
    max_iter=1000,         # Maximum iterations for solver convergence
    multi_class='auto',    # 'auto', 'ovr', 'multinomial' (deprecated since scikit-learn 1.5; omit on recent versions)
    verbose=0,             # Print convergence info: 0=silent, 1=progress
    warm_start=False,      # Reuse previous fit's solution as starting point
    n_jobs=None,           # Parallel jobs for OvR multi-class (-1 = all CPUs)
    l1_ratio=None,         # ElasticNet mixing (only when penalty='elasticnet')
    tol=1e-4,              # Tolerance for stopping criterion
)

Understanding the C Parameter (Regularization)

# C = 1/λ where λ is regularization strength

# Small C → Strong regularization → Simpler model

# Large C → Weak regularization → Complex model (may overfit)

C_values = [0.001, 0.01, 0.1, 1.0, 10.0, 100.0]

train_accs = []

test_accs = []

n_nonzero = []

for C in C_values:
    m = LogisticRegression(C=C, max_iter=2000, random_state=42)
    m.fit(X_train_s, y_train)
    train_accs.append(m.score(X_train_s, y_train))
    test_accs.append(m.score(X_test_s, y_test))
    n_nonzero.append(np.sum(np.abs(m.coef_[0]) > 1e-6))

print(f"{'C':>8} {'Train Acc':>10} {'Test Acc':>10} {'NonZero W':>10}")
print("-" * 42)

for C, tr, te, nz in zip(C_values, train_accs, test_accs, n_nonzero):
    print(f"{C:>8.3f} {tr:>10.4f} {te:>10.4f} {nz:>10d}")

Output:

C Train Acc Test Acc NonZero W

------------------------------------------

0.001 0.9363 0.9386 30

0.010 0.9648 0.9561 30

0.100 0.9780 0.9649 30

1.000 0.9868 0.9737 30

10.000 0.9912 0.9737 30

100.000 0.9934 0.9649 30

C=1.0 and C=10.0 achieve the same test accuracy, which marks a good regularization range. At C=100.0 the model starts to overfit: training accuracy keeps rising while test accuracy falls from 0.9737 to 0.9649.
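The comparison above reads the best C off test-set accuracy, which is fine for illustration but leaks information if used for model selection. A minimal sketch of the same sweep using cross-validation on the training split only follows; the variable names (`C_grid`, `cv_means`, `final_model`) are illustrative choices, not part of the original code.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

C_grid = [0.001, 0.01, 0.1, 1.0, 10.0, 100.0]
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

# Mean cross-validated accuracy per C, computed on the training split only.
# The scaler lives inside the pipeline, so it is refit on every fold.
cv_means = []
for C in C_grid:
    pipe = make_pipeline(StandardScaler(), LogisticRegression(C=C, max_iter=2000))
    cv_means.append(cross_val_score(pipe, X_train, y_train, cv=cv).mean())

best_C = C_grid[int(np.argmax(cv_means))]

# Refit with the selected C and touch the test set exactly once
final_model = make_pipeline(StandardScaler(), LogisticRegression(C=best_C, max_iter=2000))
final_model.fit(X_train, y_train)
test_acc = final_model.score(X_test, y_test)

for C, mean_acc in zip(C_grid, cv_means):
    print(f"C={C:<8} mean CV accuracy={mean_acc:.4f}")
print(f"Selected C={best_C}, test accuracy={test_acc:.4f}")
```

Because the test set plays no part in choosing C, the final test accuracy remains an honest estimate of generalization.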

Understanding Solvers

# Solver comparison — choose based on data size and penalty type

solvers_info = {

'lbfgs': {

'penalties': ['l2', None],

'best_for': 'Small-medium datasets, L2 regularization (default)',

'multiclass': 'multinomial natively',

'speed': 'Fast convergence, memory-efficient',

},

'liblinear': {

'penalties': ['l1', 'l2'],

'best_for': 'Small datasets, L1 regularization, binary problems',

'multiclass': 'OvR only',

'speed': 'Very fast for small data',

},

'saga': {

'penalties': ['l1', 'l2', 'elasticnet', None],

'best_for': 'Large datasets, all penalty types',

'multiclass': 'multinomial',

'speed': 'Stochastic — fast for large data',

},

'sag': {

'penalties': ['l2', None],

'best_for': 'Large datasets, L2 only',

'multiclass': 'multinomial',

'speed': 'Stochastic — fast for large data',

},

'newton-cg': {

'penalties': ['l2', None],

'best_for': 'Medium datasets, L2',

'multiclass': 'multinomial',

'speed': 'Second-order, accurate but slower',

},

}

print("Solver Selection Guide:")

print("-" * 60)

for solver, info in solvers_info.items():
    print(f"\n {solver.upper()}")
    print(f" Penalties: {info['penalties']}")
    print(f" Best for: {info['best_for']}")

# Practical rule: start with lbfgs (default), switch to saga for large data
# or when you need l1/elasticnet regularization

Solver Performance Comparison

import time

solvers_to_test = {

'lbfgs': LogisticRegression(solver='lbfgs', C=1.0, max_iter=2000),

'liblinear': LogisticRegression(solver='liblinear', C=1.0, max_iter=2000),

'saga': LogisticRegression(solver='saga', C=1.0, max_iter=2000,

random_state=42),

'newton-cg': LogisticRegression(solver='newton-cg', C=1.0, max_iter=2000),

}

print(f"{'Solver':>12} {'Time (ms)':>10} {'Train Acc':>10} {'Test Acc':>10} {'Iters':>7}")

print("-" * 55)

for name, m in solvers_to_test.items():
    t0 = time.time()
    m.fit(X_train_s, y_train)
    elapsed = (time.time() - t0) * 1000
    print(f"{name:>12} {elapsed:>10.1f} "
          f"{m.score(X_train_s, y_train):>10.4f} "
          f"{m.score(X_test_s, y_test):>10.4f} "
          f"{m.n_iter_[0]:>7d}")

Complete Binary Classification Pipeline

Using Pipeline (Best Practice)

# Pipeline chains preprocessing + model into one object.

# Prevents data leakage: scaler fits only on training fold.

pipe = Pipeline([

('scaler', StandardScaler()),

('clf', LogisticRegression(C=1.0, max_iter=1000,

random_state=42))

])

# fit() on pipeline: scaler.fit_transform(X_train) → clf.fit(X_train_scaled)

pipe.fit(X_train, y_train)

# predict() on pipeline: scaler.transform(X_test) → clf.predict(X_test_scaled)

y_pred = pipe.predict(X_test)

y_proba = pipe.predict_proba(X_test)[:, 1] # P(class=1)

print("Pipeline predictions (first 10):")

print(" Predicted:", y_pred[:10])

print(" Actual: ", y_test[:10])

print(" P(class1):", y_proba[:10].round(3))

Comprehensive Evaluation

def full_evaluation(y_true, y_pred, y_proba,
                    class_names=None, model_name="Model"):
    """
    Complete binary classification evaluation report.
    Prints metrics and returns a results dictionary.
    """
    if class_names is None:
        class_names = ['Class 0', 'Class 1']

    acc = accuracy_score(y_true, y_pred)
    prec = precision_score(y_true, y_pred)
    rec = recall_score(y_true, y_pred)
    f1 = f1_score(y_true, y_pred)
    auc = roc_auc_score(y_true, y_proba)
    ap = average_precision_score(y_true, y_proba)

    print(f"\n{'='*55}")
    print(f" {model_name}")
    print(f"{'='*55}")
    print(f" Accuracy: {acc:.4f}")
    print(f" Precision: {prec:.4f}")
    print(f" Recall: {rec:.4f}")
    print(f" F1 Score: {f1:.4f}")
    print(f" ROC-AUC: {auc:.4f}")
    print(f" Avg Precision: {ap:.4f}")
    print(f"\n{classification_report(y_true, y_pred, target_names=class_names)}")

    return dict(accuracy=acc, precision=prec, recall=rec,
                f1=f1, roc_auc=auc, avg_precision=ap)

results = full_evaluation(
    y_test, y_pred, y_proba,
    class_names=cancer.target_names,
    model_name="Logistic Regression — Breast Cancer"
)

Expected Output:

=======================================================

Logistic Regression — Breast Cancer

=======================================================

Accuracy: 0.9737

Precision: 0.9718

Recall: 0.9859

F1 Score: 0.9788

ROC-AUC: 0.9975

Avg Precision: 0.9979

precision recall f1-score support

malignant 0.975 0.951 0.963 41

benign 0.972 0.986 0.979 73

accuracy 0.974 114

macro avg 0.974 0.969 0.971 114

weighted avg 0.974 0.974 0.974 114

Visualizing Results

fig, axes = plt.subplots(1, 3, figsize=(16, 5))

# ── 1. Confusion Matrix ─────────────────────────────────────────

cm = confusion_matrix(y_test, y_pred)

disp = ConfusionMatrixDisplay(cm, display_labels=cancer.target_names)

disp.plot(ax=axes[0], colorbar=False, cmap='Blues')

axes[0].set_title('Confusion Matrix', fontsize=12)

# ── 2. ROC Curve ────────────────────────────────────────────────

fpr, tpr, thresholds_roc = roc_curve(y_test, y_proba)

auc_score = roc_auc_score(y_test, y_proba)

axes[1].plot(fpr, tpr, 'steelblue', linewidth=2,

label=f'LR (AUC = {auc_score:.3f})')

axes[1].plot([0, 1], [0, 1], 'k--', linewidth=1, label='Random')

axes[1].fill_between(fpr, tpr, alpha=0.15, color='steelblue')

axes[1].set_xlabel('False Positive Rate')

axes[1].set_ylabel('True Positive Rate')

axes[1].set_title('ROC Curve')

axes[1].legend()

axes[1].grid(True, alpha=0.3)

# ── 3. Precision-Recall Curve ───────────────────────────────────

prec_c, rec_c, _ = precision_recall_curve(y_test, y_proba)

ap_score = average_precision_score(y_test, y_proba)

axes[2].plot(rec_c, prec_c, 'coral', linewidth=2,

label=f'LR (AP = {ap_score:.3f})')

axes[2].axhline(y_test.mean(), color='k', linestyle='--',

linewidth=1, label=f'Baseline ({y_test.mean():.2f})')

axes[2].set_xlabel('Recall')

axes[2].set_ylabel('Precision')

axes[2].set_title('Precision-Recall Curve')

axes[2].legend()

axes[2].grid(True, alpha=0.3)

plt.suptitle('Logistic Regression — Breast Cancer Evaluation',

fontsize=13, fontweight='bold')

plt.tight_layout()

plt.show()

Hyperparameter Tuning with GridSearchCV

# Define the search space

param_grid = {

'clf__C': [0.001, 0.01, 0.1, 1.0, 10.0, 100.0],

'clf__penalty': ['l1', 'l2'],

'clf__solver': ['liblinear'], # liblinear supports both l1 and l2

}

# Pipeline to prevent leakage during cross-validation

pipe_tune = Pipeline([

('scaler', StandardScaler()),

('clf', LogisticRegression(max_iter=2000, random_state=42))

])

# Stratified 5-fold CV, optimizing for ROC-AUC

grid_search = GridSearchCV(

pipe_tune,

param_grid,

cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=42),

scoring='roc_auc',

refit=True, # Refit best model on full training data

n_jobs=-1, # Use all CPU cores

verbose=1

)

grid_search.fit(X_train, y_train)

print(f"\nBest parameters: {grid_search.best_params_}")

print(f"Best CV ROC-AUC: {grid_search.best_score_:.4f}")

# Evaluate best model on test set

best_model = grid_search.best_estimator_

y_pred_best = best_model.predict(X_test)

y_prob_best = best_model.predict_proba(X_test)[:, 1]

print(f"\nBest model test metrics:")

print(f" Accuracy: {accuracy_score(y_test, y_pred_best):.4f}")

print(f" ROC-AUC: {roc_auc_score(y_test, y_prob_best):.4f}")

print(f" F1: {f1_score(y_test, y_pred_best):.4f}")

Visualizing the Grid Search Results

# Extract CV results for heatmap

cv_results = pd.DataFrame(grid_search.cv_results_)

l1_results = cv_results[cv_results['param_clf__penalty'] == 'l1']

l2_results = cv_results[cv_results['param_clf__penalty'] == 'l2']

C_vals = [0.001, 0.01, 0.1, 1.0, 10.0, 100.0]

fig, axes = plt.subplots(1, 2, figsize=(13, 4))

for ax, results, title in zip(
        axes,
        [l1_results, l2_results],
        ['L1 Penalty', 'L2 Penalty']):
    mean_scores = results.sort_values('param_clf__C')['mean_test_score'].values
    std_scores = results.sort_values('param_clf__C')['std_test_score'].values

    ax.semilogx(C_vals, mean_scores, 'o-', color='steelblue',
                linewidth=2, markersize=7)
    ax.fill_between(C_vals,
                    mean_scores - std_scores,
                    mean_scores + std_scores,
                    alpha=0.2, color='steelblue')
    ax.set_xlabel('C (regularization inverse)')
    ax.set_ylabel('CV ROC-AUC')
    ax.set_title(f'GridSearch: {title}')
    ax.grid(True, alpha=0.3)

    best_idx = np.argmax(mean_scores)
    ax.scatter([C_vals[best_idx]], [mean_scores[best_idx]],
               color='red', s=100, zorder=5,
               label=f'Best C={C_vals[best_idx]}')
    ax.legend()

plt.tight_layout()
plt.show()

Regularization Deep Dive: L1 vs L2 vs ElasticNet

# Compare coefficient sparsity across regularization types

# Each penalty needs a compatible solver: liblinear or saga for L1, saga for ElasticNet

configs = [

('No regularization', dict(penalty=None, solver='lbfgs', C=1.0)),

('L2 (Ridge)', dict(penalty='l2', solver='lbfgs', C=1.0)),

('L1 (Lasso)', dict(penalty='l1', solver='liblinear', C=1.0)),

('L1 — strong', dict(penalty='l1', solver='liblinear', C=0.1)),

('ElasticNet', dict(penalty='elasticnet', solver='saga',

C=1.0, l1_ratio=0.5, random_state=42)),

]

print(f"{'Regularization':20s} {'Non-zero W':>10} {'Max |w|':>8} "

f"{'Test Acc':>9} {'Test AUC':>9}")

print("-" * 65)

for name, kwargs in configs:
    m = LogisticRegression(max_iter=5000, **kwargs)
    m.fit(X_train_s, y_train)
    coef = m.coef_[0]
    n_nz = np.sum(np.abs(coef) > 1e-6)
    # The 'Test Acc' column must be scored on the test split, not the training split
    print(f"{name:20s} {n_nz:>10d} {np.abs(coef).max():>8.3f} "
          f"{m.score(X_test_s, y_test):>9.4f} "
          f"{roc_auc_score(y_test, m.predict_proba(X_test_s)[:,1]):>9.4f}")

Expected Output:

Regularization Non-zero W Max |w| Test Acc Test AUC

-----------------------------------------------------------------

No regularization 30 8.241 0.9912 0.9982

L2 (Ridge) 30 1.834 0.9737 0.9975

L1 (Lasso) 21 2.103 0.9737 0.9963

L1 — strong 10 1.456 0.9649 0.9941

ElasticNet 25 1.891 0.9737 0.9971

L1 regularization drives some coefficients to exactly zero — automatic feature selection. Strong L1 (C=0.1) keeps only 10 of 30 features.
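The sparsity effect can be checked directly by counting the coefficients that survive as C shrinks. The sketch below is illustrative only: it fits on the full scaled dataset (no train/test split) because it inspects coefficients rather than estimating accuracy, and the helper `count_nonzero` is a hypothetical name introduced here.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
X_s = StandardScaler().fit_transform(X)


def count_nonzero(model, tol=1e-6):
    """Number of coefficients whose magnitude exceeds tol (hypothetical helper)."""
    return int(np.sum(np.abs(model.coef_[0]) > tol))


# L2 shrinks coefficients toward zero but rarely makes them exactly zero
l2_model = LogisticRegression(penalty="l2", C=1.0, max_iter=5000).fit(X_s, y)
nonzero_l2 = count_nonzero(l2_model)

# L1 zeroes out more coefficients as C decreases (stronger regularization)
nonzero_l1 = {}
for C in [1.0, 0.1, 0.01]:
    l1_model = LogisticRegression(
        penalty="l1", solver="liblinear", C=C, max_iter=5000, random_state=42
    ).fit(X_s, y)
    nonzero_l1[C] = count_nonzero(l1_model)

print(f"L2, C=1.0: {nonzero_l2} non-zero coefficients")
for C, n in nonzero_l1.items():
    print(f"L1, C={C}: {n} non-zero coefficients")
```

The features that keep non-zero weights under strong L1 are a reasonable first shortlist for feature selection, though correlated features can swap in and out between runs or data splits.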

Feature Importance from Coefficients

# Standardized coefficients = importance when features are scaled

coef = best_model.named_steps['clf'].coef_[0]

feat_imp = pd.DataFrame({

'Feature': cancer.feature_names,

'Coefficient': coef,

'Abs_Coef': np.abs(coef)

}).sort_values('Abs_Coef', ascending=False)

print("Top 15 Most Important Features:")

print(feat_imp.head(15).to_string(index=False))

# Visualise

fig, ax = plt.subplots(figsize=(9, 7))

colors = ['steelblue' if c > 0 else 'coral'

for c in feat_imp.head(15)['Coefficient']]

ax.barh(feat_imp.head(15)['Feature'][::-1],

feat_imp.head(15)['Coefficient'][::-1],

color=colors[::-1], edgecolor='white')

ax.axvline(0, color='black', linewidth=0.8)

ax.set_xlabel('Coefficient (standardized)')

ax.set_title('Logistic Regression Feature Importance\n'

'(Blue=increases P(benign), Coral=decreases P(benign))')

ax.grid(True, alpha=0.3, axis='x')

# Add value labels

for bar, val in zip(ax.patches,
                    feat_imp.head(15)['Coefficient'][::-1]):
    # Named x_pos/y_pos so the global label array y is not overwritten
    x_pos = bar.get_width()
    y_pos = bar.get_y() + bar.get_height() / 2
    ax.text(x_pos + (0.05 if x_pos >= 0 else -0.05), y_pos,
            f'{val:.3f}', va='center',
            ha='left' if x_pos >= 0 else 'right', fontsize=8)

plt.tight_layout()
plt.show()

Threshold Tuning

def tune_threshold(y_true, y_proba, optimize='f1'):
    """
    Find the optimal decision threshold for a given metric.
    Returns optimal threshold, its score, and full curves.
    """
    thresholds = np.linspace(0.01, 0.99, 500)
    metric_fn = {
        'f1': lambda y, p: f1_score(y, p, zero_division=0),
        'precision': lambda y, p: precision_score(y, p, zero_division=0),
        'recall': lambda y, p: recall_score(y, p, zero_division=0),
    }[optimize]

    scores = [metric_fn(y_true, (y_proba >= t).astype(int))
              for t in thresholds]
    best_idx = int(np.argmax(scores))
    return thresholds[best_idx], scores[best_idx], thresholds, scores

# Find optimal threshold
# Note: the test set is used here for illustration only. In a real project,
# tune the threshold on a separate validation split so the test score stays unbiased.
best_t, best_score, thresholds, f1_scores = tune_threshold(
    y_test, y_prob_best, optimize='f1'
)

print(f"Default threshold (0.5):")

y_default = (y_prob_best >= 0.5).astype(int)

print(f" F1={f1_score(y_test, y_default):.4f} "

f"Prec={precision_score(y_test, y_default):.4f} "

f"Rec={recall_score(y_test, y_default):.4f}")

print(f"\nOptimal threshold ({best_t:.3f}):")

y_optimal = (y_prob_best >= best_t).astype(int)

print(f" F1={f1_score(y_test, y_optimal):.4f} "

f"Prec={precision_score(y_test, y_optimal):.4f} "

f"Rec={recall_score(y_test, y_optimal):.4f}")

# Plot

fig, ax = plt.subplots(figsize=(9, 4))

ax.plot(thresholds, f1_scores, 'steelblue', linewidth=2, label='F1')

ax.axvline(best_t, color='red', linestyle='--',

label=f'Optimal t={best_t:.3f}')

ax.axvline(0.5, color='gray', linestyle=':',

label='Default t=0.5')

ax.set_xlabel('Decision Threshold')

ax.set_ylabel('F1 Score')

ax.set_title('F1 Score vs. Decision Threshold')

ax.legend()

ax.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

Handling Class Imbalance

# Simulate an imbalanced dataset

X_imb, y_imb = make_classification(

n_samples=5000, n_features=15, n_informative=8,

weights=[0.95, 0.05], # 95% class 0, 5% class 1

random_state=42

)

print(f"Imbalanced dataset: {(y_imb==1).sum()} positives "

f"({(y_imb==1).mean()*100:.1f}%)")

X_tr_i, X_te_i, y_tr_i, y_te_i = train_test_split(

X_imb, y_imb, test_size=0.2, stratify=y_imb, random_state=42

)

# ── Method 1: Default (likely poor on minority class) ──────────

pipe_default = Pipeline([

('scaler', StandardScaler()),

('clf', LogisticRegression(C=1.0, max_iter=1000))

])

pipe_default.fit(X_tr_i, y_tr_i)

# ── Method 2: class_weight='balanced' ─────────────────────────

pipe_balanced = Pipeline([

('scaler', StandardScaler()),

('clf', LogisticRegression(C=1.0, max_iter=1000,

class_weight='balanced'))

])

pipe_balanced.fit(X_tr_i, y_tr_i)

# ── Method 3: Manual class weights ────────────────────────────

# Explicitly set: weight 0=1, weight 1=19 (ratio 1:19 → ~5% minority)

pipe_manual = Pipeline([

('scaler', StandardScaler()),

('clf', LogisticRegression(C=1.0, max_iter=1000,

class_weight={0: 1, 1: 19}))

])

pipe_manual.fit(X_tr_i, y_tr_i)

# Compare

print(f"\n{'Method':25s} {'Acc':>7} {'F1':>7} {'Rec':>7} {'AUC':>7}")

print("-" * 55)

for name, pipe in [('Default', pipe_default),
                   ('class_weight=balanced', pipe_balanced),
                   ('Manual {0:1, 1:19}', pipe_manual)]:
    yp = pipe.predict(X_te_i)
    ypr = pipe.predict_proba(X_te_i)[:, 1]
    print(f"{name:25s} "
          f"{accuracy_score(y_te_i, yp):>7.4f} "
          f"{f1_score(y_te_i, yp):>7.4f} "
          f"{recall_score(y_te_i, yp):>7.4f} "
          f"{roc_auc_score(y_te_i, ypr):>7.4f}")

Expected Output:

Method Acc F1 Rec AUC

-------------------------------------------------------

Default 0.9740 0.3478 0.2250 0.9241

class_weight=balanced 0.9400 0.5455 0.7750 0.9241

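Manual {0:1, 1:19} 0.9260 0.5283 0.8250 0.9241

ROC-AUC is essentially unchanged because class weighting mainly shifts the decision boundary rather than the ranking of examples, and ROC-AUC depends only on that ranking. Recall of the minority class, however, improves dramatically, from 22.5% to 77.5–82.5%, at the cost of some overall accuracy.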
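The weights behind class_weight='balanced' follow a simple formula, n_samples / (n_classes * count_per_class), which explains why the manual 1:19 ratio behaves so similarly. The sketch below checks the formula against scikit-learn's `compute_class_weight` on a toy label vector with a 95/5 split; the toy array `y_toy` is an assumption made for illustration.

```python
import numpy as np
from sklearn.utils.class_weight import compute_class_weight

# Toy labels with the same 95% / 5% imbalance as the simulated dataset
y_toy = np.array([0] * 95 + [1] * 5)
classes = np.unique(y_toy)

# 'balanced' heuristic: n_samples / (n_classes * count_per_class)
manual_weights = len(y_toy) / (len(classes) * np.bincount(y_toy))

# The same weights as computed by scikit-learn
sk_weights = compute_class_weight(class_weight="balanced", classes=classes, y=y_toy)

ratio = manual_weights[1] / manual_weights[0]
print("Manual weights: ", manual_weights.round(4))
print("sklearn weights:", sk_weights.round(4))
print(f"Minority/majority weight ratio: {ratio:.1f}")
```

Only the ratio between the weights matters for where the decision boundary ends up, so {0: 1, 1: 19} and the 'balanced' weights push the model in the same direction; they differ slightly in effective regularization because the absolute weights scale the loss term.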

Dataset 2: Multi-Class Classification — Iris

iris = load_iris()

X_ir, y_ir = iris.data, iris.target

print("Iris Dataset — 3 Classes:")

for i, name in enumerate(iris.target_names):
    print(f" {name}: {(y_ir==i).sum()} examples")

X_tr_ir, X_te_ir, y_tr_ir, y_te_ir = train_test_split(

X_ir, y_ir, test_size=0.2, stratify=y_ir, random_state=42

)

# ── OvR vs. Multinomial ─────────────────────────────────────────
# Note: the multi_class argument is deprecated since scikit-learn 1.5.
# On recent versions, omit it for multinomial and wrap the model in
# sklearn.multiclass.OneVsRestClassifier for OvR.

for strategy in ['ovr', 'multinomial']:
    pipe_ir = Pipeline([
        ('scaler', StandardScaler()),
        ('clf', LogisticRegression(
            multi_class=strategy,
            solver='lbfgs',
            C=1.0,
            max_iter=1000
        ))
    ])
    pipe_ir.fit(X_tr_ir, y_tr_ir)
    acc = pipe_ir.score(X_te_ir, y_te_ir)
    print(f"\nStrategy: {strategy}")
    print(f" Accuracy: {acc:.4f}")
    print(classification_report(
        y_te_ir,
        pipe_ir.predict(X_te_ir),
        target_names=iris.target_names
    ))

Cross-Validation: Reliable Estimates

# Full cross-validation with multiple metrics

pipe_cv = Pipeline([

('scaler', StandardScaler()),

('clf', LogisticRegression(C=1.0, max_iter=1000, random_state=42))

])

cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=42)

scoring = {

'accuracy': 'accuracy',

'precision': 'precision',

'recall': 'recall',

'f1': 'f1',

'roc_auc': 'roc_auc',

}

from sklearn.model_selection import cross_validate

cv_results = cross_validate(pipe_cv, X, y, cv=cv, scoring=scoring)

print("10-Fold Stratified Cross-Validation (Breast Cancer):")

print(f"{'Metric':12s} {'Mean':>8} {'Std':>8} {'Min':>8} {'Max':>8}")

print("-" * 48)

for metric in ['accuracy', 'precision', 'recall', 'f1', 'roc_auc']:
    scores = cv_results[f'test_{metric}']
    print(f"{metric:12s} {scores.mean():>8.4f} "
          f"{scores.std():>8.4f} "
          f"{scores.min():>8.4f} "
          f"{scores.max():>8.4f}")

Learning Curves: Diagnosing Bias and Variance

def plot_learning_curve(estimator, X, y, cv=5, title="Learning Curve"):
    """
    Plot training and validation scores vs. training set size.
    Diagnoses underfitting (high bias) and overfitting (high variance).
    """
    train_sizes, train_scores, val_scores = learning_curve(
        estimator, X, y,
        cv=cv,
        scoring='roc_auc',
        train_sizes=np.linspace(0.1, 1.0, 10),
        shuffle=True,
        random_state=42
    )

    train_mean = train_scores.mean(axis=1)
    train_std = train_scores.std(axis=1)
    val_mean = val_scores.mean(axis=1)
    val_std = val_scores.std(axis=1)

    plt.figure(figsize=(8, 5))
    plt.plot(train_sizes, train_mean, 'o-', color='steelblue',
             linewidth=2, label='Training ROC-AUC')
    plt.fill_between(train_sizes,
                     train_mean - train_std,
                     train_mean + train_std,
                     alpha=0.15, color='steelblue')
    plt.plot(train_sizes, val_mean, 's-', color='coral',
             linewidth=2, label='Validation ROC-AUC')
    plt.fill_between(train_sizes,
                     val_mean - val_std,
                     val_mean + val_std,
                     alpha=0.15, color='coral')
    plt.xlabel('Training Set Size')
    plt.ylabel('ROC-AUC')
    plt.title(title)
    plt.legend()
    plt.grid(True, alpha=0.3)
    plt.ylim(0.8, 1.02)
    plt.tight_layout()
    plt.show()

    # Diagnose from the scores at the largest training size
    gap = train_mean[-1] - val_mean[-1]
    if val_mean[-1] < 0.85:
        # Underfitting calls for weaker regularization, i.e. a higher C
        print("Diagnosis: High bias (underfitting). Try more features or a higher C")
    elif gap > 0.05:
        print("Diagnosis: High variance (overfitting). Try a lower C or more data")
    else:
        print("Diagnosis: Good fit. Training and validation scores are close")

plot_learning_curve(

pipe_cv, X, y,

cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=42),

title="Learning Curve — Logistic Regression (Breast Cancer)"

)

Production-Ready Workflow: Saving and Loading

import joblib

import os

# ── Save the trained pipeline ───────────────────────────────────

model_path = 'logistic_regression_cancer.pkl'

joblib.dump(best_model, model_path)

print(f"Model saved to: {model_path} "

f"({os.path.getsize(model_path):,} bytes)")

# ── Load and predict ────────────────────────────────────────────

loaded_model = joblib.load(model_path)

# Verify identical predictions

y_loaded = loaded_model.predict(X_test)

assert np.all(y_loaded == y_pred_best), "Loaded model predictions differ!"

print("Loaded model predictions match original ✓")

# ── Single-example prediction ───────────────────────────────────

new_patient = X_test[0:1] # Shape (1, 30) — must be 2D

prediction = loaded_model.predict(new_patient)[0]

probability = loaded_model.predict_proba(new_patient)[0]

print(f"\nNew patient prediction:")

print(f" Predicted class: {cancer.target_names[prediction]}")

print(f" P(malignant): {probability[0]:.4f}")

print(f" P(benign): {probability[1]:.4f}")

print(f" Confidence: {max(probability)*100:.1f}%")

Quick Reference: LogisticRegression Cheat Sheet

| Parameter | Common Values | When to Change |
|---|---|---|
| C | 0.01–100 (default=1.0) | Tune with GridSearchCV; smaller = more regularization |
| penalty | 'l2' (default), 'l1', 'elasticnet', None | Use 'l1' for feature selection; None for no regularization |
| solver | 'lbfgs' (default), 'liblinear', 'saga' | 'liblinear' for L1 on small data; 'saga' for large data or elasticnet |
| max_iter | 1000+ (default=100) | Increase if ConvergenceWarning appears |
| class_weight | None (default), 'balanced', dict | Set 'balanced' for imbalanced classes |
| multi_class | 'auto' (default), 'ovr', 'multinomial' | 'multinomial' usually better for multi-class; deprecated since scikit-learn 1.5 |
| random_state | Any integer | Set for reproducibility with saga/sag/liblinear solvers |
| n_jobs | -1 | Set -1 to use all CPU cores for OvR multi-class |
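Since the multi_class argument is deprecated in recent scikit-learn releases, the OvR-versus-multinomial comparison can be written without it. The sketch below assumes the default lbfgs solver, which fits a multinomial model when there are three or more classes, and uses `sklearn.multiclass.OneVsRestClassifier` for the one-vs-rest variant; the pipeline names `multinomial` and `ovr` are illustrative.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.multiclass import OneVsRestClassifier
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42
)

# Multinomial (softmax): a single model with one weight vector per class
multinomial = Pipeline([
    ("scaler", StandardScaler()),
    ("clf", LogisticRegression(C=1.0, max_iter=1000)),
])

# One-vs-rest: one independent binary classifier per class
ovr = Pipeline([
    ("scaler", StandardScaler()),
    ("clf", OneVsRestClassifier(LogisticRegression(C=1.0, max_iter=1000))),
])

multinomial.fit(X_train, y_train)
ovr.fit(X_train, y_train)

acc_multinomial = multinomial.score(X_test, y_test)
acc_ovr = ovr.score(X_test, y_test)
n_binary_models = len(ovr.named_steps["clf"].estimators_)
coef_shape = multinomial.named_steps["clf"].coef_.shape

print(f"Multinomial accuracy: {acc_multinomial:.4f}, coef_ shape: {coef_shape}")
print(f"OvR accuracy:         {acc_ovr:.4f}, binary models: {n_binary_models}")
```

Both pipelines expose the same predict() and predict_proba() methods, so the rest of the evaluation code works unchanged with either.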

Conclusion: Logistic Regression as Your Classification Baseline

Scikit-learn’s LogisticRegression is one of the most complete, well-documented, and battle-tested implementations in any machine learning library. The simple fit() / predict() / predict_proba() interface conceals a highly capable engine that handles binary and multi-class problems, multiple regularization strategies, various optimizers tuned for different data scales, and robust convergence detection.

The most important habits to take from this guide:

Always use a Pipeline. Combining StandardScaler and LogisticRegression into a Pipeline prevents data leakage during cross-validation and makes the workflow reproducible and deployable.

Use stratify=y when splitting. For classification problems, stratified splitting ensures both train and test sets preserve the class ratio — essential for imbalanced data.

Tune C, not just the default. The default C=1.0 is a reasonable start but rarely optimal. A quick GridSearchCV over [0.001, 0.01, 0.1, 1, 10, 100] takes seconds and can meaningfully improve performance.

Use predict_proba(), not just predict(). Probabilities enable threshold tuning, ROC curve analysis, ranking, and calibration — all of which make your classifier more useful in practice.

Set class_weight='balanced' for imbalanced data. It takes a single argument and typically produces dramatic improvements in recall for the minority class, usually in exchange for some precision and overall accuracy.

Increase max_iter if you see ConvergenceWarning. The default max_iter=100 is often too low. Use 1000 or more to ensure convergence.
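The last habit is easy to verify in isolation. The following sketch fits the breast cancer data twice and records whether a ConvergenceWarning is raised: once unscaled with a deliberately tiny iteration budget, and once inside a scaling pipeline with max_iter=1000. The helper `fit_and_check` and the choice of max_iter=5 are assumptions made for the demonstration.

```python
import warnings

from sklearn.datasets import load_breast_cancer
from sklearn.exceptions import ConvergenceWarning
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)


def fit_and_check(model):
    """Fit the model and report whether a ConvergenceWarning was raised (hypothetical helper)."""
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter("always")  # Capture warnings even if filtered globally
        model.fit(X, y)
    return any(issubclass(w.category, ConvergenceWarning) for w in caught)


# Unscaled features plus a tiny iteration budget: the solver stops early
warned_raw = fit_and_check(LogisticRegression(max_iter=5))

# Scaled features plus a generous budget: the solver converges cleanly
warned_scaled = fit_and_check(
    make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
)

print(f"Unscaled, max_iter=5:  ConvergenceWarning raised = {warned_raw}")
print(f"Scaled, max_iter=1000: ConvergenceWarning raised = {warned_scaled}")
```

Scaling and max_iter work together: well-scaled features usually need far fewer iterations, so a warning that persists after raising max_iter is often a hint that scaling was skipped.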

Logistic regression should be the first classifier you try on any new problem. It trains in milliseconds, produces interpretable coefficients, generates well-calibrated probabilities, and often achieves competitive performance with none of the complexity of ensemble methods or neural networks. When it doesn’t perform well enough, its limitations point you toward the right direction — non-linear boundaries suggest tree-based methods, very high dimensionality suggests regularized alternatives, and extremely complex patterns suggest neural networks. Start here, always.